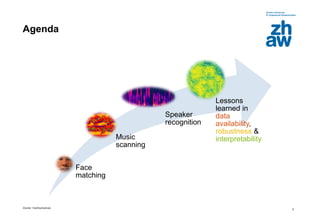

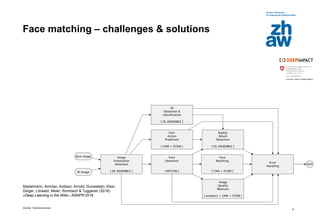

Das Dokument diskutiert den Einsatz von Deep Learning zur Mustererkennung in den Bereichen Gesichtserkennung, Musikverarbeitung und Sprecher-Clustering. Es werden Herausforderungen und Lösungen bei der Implementierung sowie wichtige Erkenntnisse zu Datenverfügbarkeit, Robustheit und Modellinterpretierbarkeit vorgestellt. Abschließend wird betont, dass Deep Learning in normalen Geschäftsbereichen angewendet werden kann, ohne dass große Datenmengen erforderlich sind.

![Zürcher Fachhochschule

36

Suche der Parameter einer Funktion?

• Unser Neuronales Netz: 𝑓 𝑾 𝑥 = 𝑦

mit Bild 𝑥, echtem Resultat 𝑦 und Parametern 𝑾

(𝑾 = {𝑤1, 𝑤2} anfangs zufällig gewählt)

• Fehlermass: 𝑙 𝑾 =

1

𝑁

σ𝑖=1

𝑁

𝑓 𝑾 𝑥𝑖 − 𝑦𝑖

2

Durchschnitt der quadratischen Abweichungen

über alle Bilder (Loss)

Fehlerlandschaft

𝑤2

𝑤1

𝑙(𝑤1, 𝑤2)

Wahrscheinlichkeit [%] für bestimmtes Ergebnis](https://image.slidesharecdn.com/bat4001zhawstadelmanndl-based-pattern-recognition-in-business-180804152850/85/BAT40-ZHAW-Stadelmann-Deep-Learning-based-Pattern-Recognition-in-Business-36-320.jpg)

![Zürcher Fachhochschule

37

Suche der Parameter einer Funktion?

• Unser Neuronales Netz: 𝑓 𝑾 𝑥 = 𝑦

mit Bild 𝑥, echtem Resultat 𝑦 und Parametern 𝑾

(𝑾 = {𝑤1, 𝑤2} anfangs zufällig gewählt)

• Fehlermass: 𝑙 𝑾 =

1

𝑁

σ𝑖=1

𝑁

𝑓 𝑾 𝑥𝑖 − 𝑦𝑖

2

Durchschnitt der quadratischen Abweichungen

über alle Bilder (Loss)

Fehlerlandschaft

𝑤2

𝑤1

𝑙(𝑤1, 𝑤2)

Methode: Anpassung der Gewichte

von 𝑓 in Richtung der steilsten

Steigung (abwärts) von 𝐽

Wahrscheinlichkeit [%] für bestimmtes Ergebnis](https://image.slidesharecdn.com/bat4001zhawstadelmanndl-based-pattern-recognition-in-business-180804152850/85/BAT40-ZHAW-Stadelmann-Deep-Learning-based-Pattern-Recognition-in-Business-37-320.jpg)

![Zürcher Fachhochschule

38

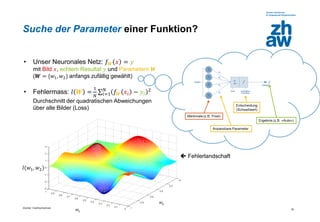

Suche der Parameter einer Funktion?

• Unser Neuronales Netz: 𝑓 𝑾 𝑥 = 𝑦

mit Bild 𝑥, echtem Resultat 𝑦 und Parametern 𝑾

(𝑾 = {𝑤1, 𝑤2} anfangs zufällig gewählt)

• Fehlermass: 𝑙 𝑾 =

1

𝑁

σ𝑖=1

𝑁

𝑓 𝑾 𝑥𝑖 − 𝑦𝑖

2

Durchschnitt der quadratischen Abweichungen

über alle Bilder (Loss)

Fehlerlandschaft

𝑤2

𝑤1

𝑙(𝑤1, 𝑤2)

Methode: Anpassung der Gewichte

von 𝑓 in Richtung der steilsten

Steigung (abwärts) von 𝐽

Wahrscheinlichkeit [%] für bestimmtes Ergebnis](https://image.slidesharecdn.com/bat4001zhawstadelmanndl-based-pattern-recognition-in-business-180804152850/85/BAT40-ZHAW-Stadelmann-Deep-Learning-based-Pattern-Recognition-in-Business-38-320.jpg)

![Zürcher Fachhochschule

39

Suche der Parameter einer Funktion?

• Unser Neuronales Netz: 𝑓 𝑾 𝑥 = 𝑦

mit Bild 𝑥, echtem Resultat 𝑦 und Parametern 𝑾

(𝑾 = {𝑤1, 𝑤2} anfangs zufällig gewählt)

• Fehlermass: 𝑙 𝑾 =

1

𝑁

σ𝑖=1

𝑁

𝑓 𝑾 𝑥𝑖 − 𝑦𝑖

2

Durchschnitt der quadratischen Abweichungen

über alle Bilder (Loss)

Fehlerlandschaft

𝑤2

𝑤1

𝑙(𝑤1, 𝑤2)

Methode: Anpassung der Gewichte

von 𝑓 in Richtung der steilsten

Steigung (abwärts) von 𝐽

Wahrscheinlichkeit [%] für bestimmtes Ergebnis](https://image.slidesharecdn.com/bat4001zhawstadelmanndl-based-pattern-recognition-in-business-180804152850/85/BAT40-ZHAW-Stadelmann-Deep-Learning-based-Pattern-Recognition-in-Business-39-320.jpg)

![Zürcher Fachhochschule

40

Suche der Parameter einer Funktion?

• Unser Neuronales Netz: 𝑓 𝑾 𝑥 = 𝑦

mit Bild 𝑥, echtem Resultat 𝑦 und Parametern 𝑾

(𝑾 = {𝑤1, 𝑤2} anfangs zufällig gewählt)

• Fehlermass: 𝑙 𝑾 =

1

𝑁

σ𝑖=1

𝑁

𝑓 𝑾 𝑥𝑖 − 𝑦𝑖

2

Durchschnitt der quadratischen Abweichungen

über alle Bilder (Loss)

Fehlerlandschaft

𝑤2

𝑤1

𝑙(𝑤1, 𝑤2)

Methode: Anpassung der Gewichte

von 𝑓 in Richtung der steilsten

Steigung (abwärts) von 𝐽

Wahrscheinlichkeit [%] für bestimmtes Ergebnis](https://image.slidesharecdn.com/bat4001zhawstadelmanndl-based-pattern-recognition-in-business-180804152850/85/BAT40-ZHAW-Stadelmann-Deep-Learning-based-Pattern-Recognition-in-Business-40-320.jpg)

![Zürcher Fachhochschule

41

Suche der Parameter einer Funktion?

• Unser Neuronales Netz: 𝑓 𝑾 𝑥 = 𝑦

mit Bild 𝑥, echtem Resultat 𝑦 und Parametern 𝑾

(𝑾 = {𝑤1, 𝑤2} anfangs zufällig gewählt)

• Fehlermass: 𝑙 𝑾 =

1

𝑁

σ𝑖=1

𝑁

𝑓 𝑾 𝑥𝑖 − 𝑦𝑖

2

Durchschnitt der quadratischen Abweichungen

über alle Bilder (Loss)

Fehlerlandschaft

𝑤2

𝑤1

𝑙(𝑤1, 𝑤2)

Methode: Anpassung der Gewichte

von 𝑓 in Richtung der steilsten

Steigung (abwärts) von 𝐽

Wahrscheinlichkeit [%] für bestimmtes Ergebnis](https://image.slidesharecdn.com/bat4001zhawstadelmanndl-based-pattern-recognition-in-business-180804152850/85/BAT40-ZHAW-Stadelmann-Deep-Learning-based-Pattern-Recognition-in-Business-41-320.jpg)

![Zürcher Fachhochschule

42

Suche der Parameter einer Funktion?

• Unser Neuronales Netz: 𝑓 𝑾 𝑥 = 𝑦

mit Bild 𝑥, echtem Resultat 𝑦 und Parametern 𝑾

(𝑾 = {𝑤1, 𝑤2} anfangs zufällig gewählt)

• Fehlermass: 𝑙 𝑾 =

1

𝑁

σ𝑖=1

𝑁

𝑓 𝑾 𝑥𝑖 − 𝑦𝑖

2

Durchschnitt der quadratischen Abweichungen

über alle Bilder (Loss)

Fehlerlandschaft

𝑤2

𝑤1

𝑙(𝑤1, 𝑤2)

Methode: Anpassung der Gewichte

von 𝑓 in Richtung der steilsten

Steigung (abwärts) von 𝐽

Wahrscheinlichkeit [%] für bestimmtes Ergebnis](https://image.slidesharecdn.com/bat4001zhawstadelmanndl-based-pattern-recognition-in-business-180804152850/85/BAT40-ZHAW-Stadelmann-Deep-Learning-based-Pattern-Recognition-in-Business-42-320.jpg)