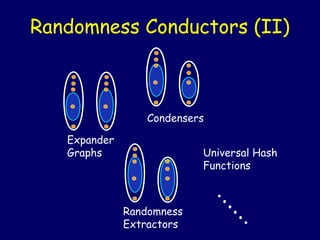

Randomness conductors

- 1. Randomness Conductors (II) • Condensers • Expander Graphs • Universal Hash Functions • Randomness Extractors

- 2. Randomness Conductors – Motivation • Various relations between expanders, extractors, condensers & universal hash functions. • Unifying all of these as instances of a more general combinatorial object: – Useful in constructions. – Possible to study new phenomena not captured by either individual object.

- 3. Randomness Conductors Meta-Definition • [Figure: N inputs, M outputs, degree D; x ↦ x’, prob. dist. X ↦ prob. dist. X’] • An R-conductor if for every (k,k’) ∈ R: X has ≥ k bits of “entropy” ⇒ X’ has ≥ k’ bits of “entropy”.

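- 4. Measures of Entropy • A naïve measure - support size • Collision(X) = $\Pr[X^{(1)} = X^{(2)}] = \|X\|_2^2$, for two independent copies $X^{(1)}, X^{(2)}$ of X • Min-entropy(X) ≥ k if ∀x, $\Pr[x] \le 2^{-k}$ • X and Y are ε-close if $\max_T |\Pr[X \in T] - \Pr[Y \in T]| = \tfrac{1}{2}\|X - Y\|_1 \le \varepsilon$ • X’ is ε-close to some Y of min-entropy k ⇒ $|\mathrm{Support}(X')| \ge (1-\varepsilon)\,2^k$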
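
The measures on this slide are easy to compute for small distributions. The following is a minimal sketch, assuming a distribution is represented as a Python dict from outcomes to exact `Fraction` probabilities; the function names and the two example distributions are illustrative choices, not taken from the talk.

```python
from fractions import Fraction
from math import log2

def collision_probability(p):
    """Pr[X1 = X2] for two independent samples of X (the squared 2-norm)."""
    return sum(q * q for q in p.values())

def min_entropy(p):
    """Largest k such that every outcome has probability at most 2^-k."""
    return -log2(max(p.values()))

def statistical_distance(p, q):
    """max over tests T of |Pr[X in T] - Pr[Y in T]|, computed as half the 1-norm."""
    outcomes = set(p) | set(q)
    return sum(abs(p.get(x, 0) - q.get(x, 0)) for x in outcomes) / 2

# Hypothetical example distributions on {0, 1, 2, 3}.
uniform4 = {x: Fraction(1, 4) for x in range(4)}
skewed = {0: Fraction(1, 2), 1: Fraction(1, 4), 2: Fraction(1, 4)}

eps = statistical_distance(skewed, uniform4)   # Fraction(1, 4)
k = min_entropy(uniform4)                      # 2.0
```

Here `skewed` is ¼-close to a distribution of min-entropy 2, and its support has size 3 = (1 − ¼)·2², so the support bound in the last bullet is met with equality in this example.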

- 5. Vertex Expansion • [Figure: N left vertices, N right vertices, degree D; X ↦ X’] • |Support(X)| ≤ K ⇒ |Support(X’)| ≥ A |Support(X)| (A > 1) • Lossless expanders: A > (1-ε) D (for ε < ½)
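
For tiny graphs the expansion factor A can be found by brute force over all sets of size at most K. This is a sketch under assumptions: the bipartite graph is given as a list of right-neighbor lists, one per left vertex, and the 6-vertex example graph is hypothetical. The search is exponential in K, so it only serves to illustrate the definition.

```python
from itertools import combinations

def neighborhood(graph, S):
    """Set of right vertices adjacent to at least one left vertex in S."""
    out = set()
    for v in S:
        out.update(graph[v])
    return out

def vertex_expansion(graph, K):
    """Minimum of |Γ(S)| / |S| over all nonempty left sets S with |S| <= K."""
    ratios = []
    for size in range(1, K + 1):
        for S in combinations(range(len(graph)), size):
            ratios.append(len(neighborhood(graph, S)) / size)
    return min(ratios)

# Hypothetical example: left vertex v is adjacent to right vertices v and v+1 (mod 6).
D = 2
example = [[v, (v + 1) % 6] for v in range(6)]

A = vertex_expansion(example, K=2)
```

With K = 2 the worst set is a pair of adjacent left vertices, which shares one neighbor, so A falls below the degree D; a lossless expander keeps A close to D for all sets up to size K.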

- 6. 2nd Eigenvalue Expansion • [Figure: N left vertices, N right vertices, degree D; X ↦ X’] • λ < β < 1, $\mathrm{collision}(X') - 1/N \le \lambda^2\,(\mathrm{collision}(X) - 1/N)$
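
The collision inequality can be checked numerically on a graph whose spectrum is known in closed form. The sketch below assumes the random walk on the 5-cycle, whose normalized adjacency eigenvalues are cos(2πj/5); the choice of graph and all names are illustrative, not from the talk.

```python
from math import cos, pi

N = 5
# Second-largest eigenvalue in absolute value of the walk on the N-cycle.
LAMBDA = max(abs(cos(2 * pi * j / N)) for j in range(1, N))

def walk_step(p):
    """One random-walk step on the cycle: move to either neighbor with prob. 1/2."""
    return [(p[(v - 1) % N] + p[(v + 1) % N]) / 2 for v in range(N)]

def excess_collision(p):
    """collision(p) - 1/N, i.e. the squared 2-distance of p from uniform."""
    return sum(q * q for q in p) - 1 / N

def shrinkage_pairs(p, steps):
    """List of (excess before, excess after) for consecutive walk steps."""
    pairs = []
    for _ in range(steps):
        q = walk_step(p)
        pairs.append((excess_collision(p), excess_collision(q)))
        p = q
    return pairs

pairs = shrinkage_pairs([1.0, 0.0, 0.0, 0.0, 0.0], steps=10)
```

Each pair should satisfy `after <= LAMBDA**2 * before`, up to floating-point error, which is the inequality on the slide with X a point mass at the start.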

- 7. Unbalanced Expanders / Condensers • [Figure: N left vertices, M ≪ N right vertices, degree D; X ↦ X’] • Farewell constant degree (for any non-trivial task |Support(X)| = $N^{0.99}$, |Support(X’)| ≥ 10D) • Requiring small collision(X’) is too strong (same for large min-entropy(X’)).

- 8. Dispersers and Extractors [Sipser 88, NZ 93] • [Figure: N left vertices, M ≪ N right vertices, degree D; X ↦ X’] • (k,ε)-disperser if |Support(X)| ≥ $2^k$ ⇒ |Support(X’)| ≥ (1-ε) M • (k,ε)-extractor if Min-entropy(X) ≥ k ⇒ X’ ε-close to uniform
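
For very small parameters the extractor condition can be tested exhaustively. The sketch below relies on the standard fact that, for integer k, it suffices to check flat sources (uniform on 2^k points), since every min-entropy-k distribution is a convex combination of them. The two functions `shift` and `ignore_seed` are toy examples chosen for illustration only; neither is a useful extractor.

```python
from itertools import combinations, product

def output_distribution(f, source, D, M):
    """Distribution of f(X, U) for X uniform on `source` and U uniform on [D]."""
    out = [0.0] * M
    weight = 1.0 / (len(source) * D)
    for x, i in product(source, range(D)):
        out[f(x, i)] += weight
    return out

def distance_from_uniform(dist):
    """Statistical distance between `dist` and the uniform distribution."""
    M = len(dist)
    return sum(abs(q - 1.0 / M) for q in dist) / 2

def worst_case_error(f, N, D, M, k):
    """Smallest eps for which f passes the (k, eps)-extractor test on flat sources."""
    return max(
        distance_from_uniform(output_distribution(f, S, D, M))
        for S in combinations(range(N), 2 ** k)
    )

def shift(x, i):
    """Toy map with a seed as long as the output: the output is always uniform."""
    return (x + i) % 4

def ignore_seed(x, i):
    """Toy map that uses no randomness: some flat source collapses to a point."""
    return x % 4

good = worst_case_error(shift, N=8, D=4, M=4, k=1)
bad = worst_case_error(ignore_seed, N=8, D=1, M=4, k=1)
```

The contrast between `good` and `bad` shows why a seed is needed at all; the interesting regime, where D is much smaller than M, is what the constructions in the talk address.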

- 9. Randomness Conductors • Expanders, extractors, condensers & universal hash functions are all functions, f : [N] × [D] → [M], that transform: X “of entropy” k ⇒ X’ = f (X,Uniform) “of entropy” k’ • Many flavors: – Measure of entropy. – Balanced vs. unbalanced. – Lossless vs. lossy. – Lower vs. upper bound on k. – Is X’ close to uniform? – … • Randomness conductors: As in extractors. Allows the entire spectrum.

- 10. Conductors: Broad Spectrum Approach • [Figure: N left vertices, M ≪ N right vertices, degree D; X ↦ X’] • An ε-conductor, ε : [0, log N] × [0, log M] → [0,1], if: ∀ k, k’, min-entropy(X) ≥ k ⇒ X’ is ε(k,k’)-close to some Y of min-entropy k’

- 11. Constructions • Most applications need explicit expanders. Could mean: • Should be easy to build G (in time poly N). • When N is huge (e.g. $2^{60}$) need: – Given vertex name x and edge label i, easy to find the i-th neighbor of x (in time poly log N).
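
To make the second notion of explicitness concrete, here is a sketch of a neighbor function for a Margulis / Gabber-Galil style graph on pairs (x, y) modulo n, so that N = n². The particular set of eight maps is one commonly quoted variant of that construction and is an assumption here, as is the labeling of edges by 0..7; the point is only that a neighbor is computed with a few arithmetic operations on log N-bit numbers, without ever building the graph.

```python
def neighbor(n, vertex, label):
    """Return the neighbor of `vertex` = (x, y) along edge `label` in 0..7.

    Labels 0..3 are the forward maps; label l + 4 is the inverse of label l.
    """
    x, y = vertex
    if label == 0:
        return ((x + 2 * y) % n, y)
    if label == 1:
        return ((x + 2 * y + 1) % n, y)
    if label == 2:
        return (x, (y + 2 * x) % n)
    if label == 3:
        return (x, (y + 2 * x + 1) % n)
    if label == 4:
        return ((x - 2 * y) % n, y)
    if label == 5:
        return ((x - 2 * y - 1) % n, y)
    if label == 6:
        return (x, (y - 2 * x) % n)
    if label == 7:
        return (x, (y - 2 * x - 1) % n)
    raise ValueError("label must be in 0..7")

# A graph with N = 2**60 vertices: far too large to write down, easy to walk on.
n = 2 ** 30
start = (123456789, 987654321)
after_two_steps = neighbor(n, neighbor(n, start, 1), 2)
```

Walking an edge and then its inverse label returns to the starting vertex, which is a quick sanity check on the labeling.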

- 12. [CRVW 02]: Const. Degree, Lossless Expanders … • [Figure: N left vertices, N right vertices, degree D] • ∀S, |S| ≤ K ⇒ |Γ(S)| ≥ (1-ε) D |S| (K = Ω(N))

- 13. … That Can Even Be Slightly Unbalanced • [Figure: N left vertices, M = δN right vertices, degree D] • ∀S, |S| ≤ K ⇒ |Γ(S)| ≥ (1-ε) D |S| • 0 < ε, δ ≤ 1 are constants ⇒ D is constant & K = Ω(N) • For the curious: K = Ω(εM/D) & D = poly(1/ε, log(1/δ)) (fully explicit: D = quasipoly(1/ε, log(1/δ))).

- 14. History • Explicit construction of constant-degree expanders was difficult. • Celebrated sequence of algebraic constructions [Mar73,GG80,JM85,LPS86,AGM87,Mar88,Mor94]. • Achieved optimal 2nd eigenvalue (Ramanujan graphs), but this only implies expansion ≤ D/2 [Kah95]. • “Combinatorial” constructions: Ajtai [Ajt87], more explicit and very simple: [RVW00]. • “Lossless objects”: [Alo95,RR99,TUZ01] • Unique neighbor, constant degree expanders [Cap01,AC02].

- 15. The Lossless Expanders • Starting point [RVW00]: A combinatorial construction of constant-degree expanders with simple analysis. • Heart of construction – New Zig-Zag Graph Product: Compose large graph w/ small graph to obtain a new graph which (roughly) inherits – Size of large graph. – Degree from the small graph. – Expansion from both.

- 16. The Zigzag Product • “Theorem”: Expansion(G1 Ⓩ G2) ≈ min {Expansion(G1), Expansion(G2)}

- 17. Zigzag Intuition (Case I) Conditional distributions within “clouds” far from uniform – The first “small step” adds entropy. – Next two steps can’t lose entropy.

- 18. Zigzag Intuition (Case II) Conditional distributions within clouds uniform • First small step does nothing. • Step on big graph “scatters” among clouds (shifts entropy) • Second small step adds entropy.

- 19. Reducing to the Two Cases • Need to show: the transition prob. matrix M of G1 Ⓩ G2 shrinks every vector π ∈ $\mathbb{R}^{ND}$ that is perp. to uniform. • Write π as an N×D matrix: π ⊥ uniform ⇒ sum of entries is 0. [Figure: π drawn as a matrix with rows 1, …, N and columns 1, 2, …, D; row u has entries .4, -.3, …, 0.] – RowSums(x) = “distribution” on clouds themselves • Can decompose π = π|| + π⊥, where π|| is constant on rows, and all rows of π⊥ are perp. to uniform. • Suffices to show M shrinks π|| and π⊥ individually!
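
The decomposition on this slide is just "subtract each row's average". A small sketch, assuming π is stored as a list of N rows of D exact `Fraction` entries; the 2×3 example matrix is hypothetical and chosen so that all entries sum to 0.

```python
from fractions import Fraction

def decompose(pi):
    """Split an N x D matrix into a row-constant part and a part whose rows sum to 0."""
    D = len(pi[0])
    parallel = [[sum(row) / D] * D for row in pi]
    perp = [[a - b for a, b in zip(row, prow)] for row, prow in zip(pi, parallel)]
    return parallel, perp

def inner(a, b):
    """Entrywise inner product of two matrices of the same shape."""
    return sum(x * y for ra, rb in zip(a, b) for x, y in zip(ra, rb))

F = Fraction
pi = [
    [F(4, 10), F(-3, 10), F(2, 10)],   # cloud 1
    [F(-1, 10), F(-2, 10), F(0)],      # cloud 2
]
parallel, perp = decompose(pi)
```

The two parts are orthogonal, which is why shrinking each of them separately controls the length of the image of π.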

- 20. Results & Extensions [RVW00] • Simple analysis in terms of second eigenvalue mimics the intuition. • Can obtain degree 3 ! • Additional results (high min-entropy extractors and their applications). • Subsequent work [ALW01,MW01] relates to semidirect product of groups ⇒ new results on expanding Cayley graphs.

- 21. Closer Look: Rotation Maps • Expanders normally viewed as maps (vertex)×(edge label) → (vertex). • Here: (vertex)×(edge label) → (vertex)×(edge label): (X,i) → (Y,j) if (X,i) and (Y,j) correspond to the same edge of G1. • Permutation ⇒ the big step never loses entropy. • Inspired by ideas from the setting of “extractors” [RR99].
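
Written with rotation maps, the zig-zag product is three lines: a small step, a big step, a small step. The sketch below assumes two toy graphs picked only so the code is short: G1 is a degree-4 circulant graph on 7 vertices and G2 is the 4-cycle on G1's edge labels. The 4-cycle is bipartite, so this instance illustrates the mechanics of the product, not good expansion.

```python
N, D1 = 7, 4                 # G1: N vertices, degree D1; G2: D1 vertices, degree 2
OFFSETS = [1, -1, 2, -2]     # label a and label a ^ 1 are inverse moves in G1

def rot_big(v, a):
    """Rotation map of G1: follow edge a from v, report the label of the way back."""
    return (v + OFFSETS[a]) % N, a ^ 1

def rot_small(a, i):
    """Rotation map of G2 (a 4-cycle): label 0 steps forward, label 1 steps back."""
    step = 1 if i == 0 else -1
    return (a + step) % D1, 1 - i

def rot_zigzag(vertex, label):
    """Rotation map of the zig-zag product on vertices (v, a) with labels (i, j)."""
    (v, a), (i, j) = vertex, label
    a1, i1 = rot_small(a, i)     # small step inside the cloud of v
    w, b1 = rot_big(v, a1)       # big step: a permutation of (vertex, label) pairs
    b, j1 = rot_small(b1, j)     # small step inside the cloud of w
    return (w, b), (j1, i1)

all_inputs = [((v, a), (i, j))
              for v in range(N) for a in range(D1)
              for i in range(2) for j in range(2)]
```

The product has N·D1 = 28 vertices and degree 2² = 4, matching "size of the large graph, degree from the small graph". Because each of the three steps is a permutation, `rot_zigzag` is itself a permutation of (vertex, label) pairs, and applying it twice returns to the start.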

- 22. Inherent Entropy Loss – In each case, only one of two small steps “works” – But paid for both in degree.

- 23. Trying to improve ??? ???

- 24. Zigzag for Unbalanced Graphs • The zig-zag product for conductors can produce constant degree, lossless expanders. • Previous constructions and composition techniques from the extractor literature extend to (useful) explicit constructions of conductors.

- 25. Some Open Problems • Being lossless from both sides (the non-bipartite case). • Better expansion yet? • Further study of randomness conductors.