SF Women in eDiscovery Sept 2011

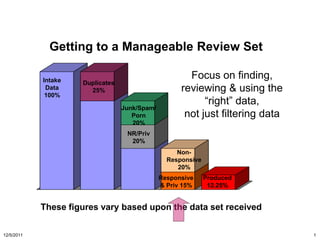

- 1. Getting to a Manageable Review Set Intake Focus on finding, Duplicates Data 25% reviewing & using the 100% “right” data, Junk/Spam/ Porn not just filtering data 20% NR/Priv 20% Non- Responsive 20% Responsive Produced & Priv 15% 12.25% These figures vary based upon the data set received 12/5/2011 1

- 2. Review risks Failure to collect the right data Failure to find responsive documents Failure to recognize responsive documents Failure to recognize privileged documents Inconsistent treatment of documents (e.g., duplicates) Failure to complete project in a timely manner Sophisticated Tools – Understand What They Do and Don’t Do Well – Inform Yourself, Speak to References, Consultants 12/5/2011 2

- 3. Search Methodologies Visualization Measurement Relationship Analysis documents with causal or sequential relationship Context Social Network Analysis relationships among relevant people relationships among relevant people Clustering Clustering Ontology Ontology Concept similarity of similarity of generalized generalized salient features salient features words or phrases words or phrases specific exact words, Content Keyword Keyword specific exact words specific exact words proximity searches, stemming 12/5/2011 3

- 4. Myth Keyword Searching is the Way to Go If I agree to keyword terms, I am OK Missing in Action (Under-inclusive) Unwanted Extras (Over-inclusive) Multiple subject/persons (Disambiguate) Reality: Keyword Search is one tool among many! 12/5/2011 4

- 5. "simple keyword searches end up being both over- and under- inclusive." Judge Paul Grimm, Victor Stanley, Inc. v. Creative Pipe, Inc., No. MJG-06-2662, 2008 U.S. Dist. LEXIS 42025 (D. Md. May 29, 2008). Keyword culling

- 6. Keyword Accuracy Example Keyword search reduced the document set by only 47% And 88% of the documents returned by keyword search were not responsive (Over-inclusive) 8,553 responsive documents missed by keyword search (Almost 8% of responsive documents missed by keyword search - Under-inclusive) 12/5/2011 6

- 7. Under Inclusive - Missing in Action Missing abbreviations / acronyms / clippings: – incentive stock option but not ISO Missing inflectional variants: – grant but not grants, granted, granting Missing spellings or common misspellings: – gray but not grey – privileged but not priviliged, priviledged, privilidged, priveliged, privelidged, priveledged, … Missing syntactic variants: • board of directors meetingbut not meeting of the board of directors, BOD meeting, board meeting, BOD mtg… Missing Synonyms/Paraphrases: • Hire date but not start date 12/5/2011 7

- 8. Over-Inclusive - Unwanted Extras (a) Options Target: Sheila was granted 100,000 options at $10 Match: What are our options for lunch? Match in a signature line: Amanda Wacz Acme Stock Options Administrator Destroy Target:destroyevidence Match in a disclaimer: The information in this email, and any attachments, may contain confidential and/or privileged information and is intended solely for the use of the named recipient(s). Any disclosure or dissemination in whatever form, by anyone other than the recipient is strictly prohibited. If you have received this transmission in error, please contact the sender and destroy this message and any attachments. Thank you. 12/5/2011 8

- 9. Over-Inclusive - Unwanted Extras (b) alter* Target: alter, alters, altered, altering Matches: alternate, alternative, alternation, altercate, altercation, alterably, … grant Target:stock optiongrant Matches names:Grant Woods, Howard Grant 12/5/2011 9

- 10. Failure to Disambiguate Words that Relate to Multiple Subjects Example: refund is used to refer to: – FERC-ordered refunds owed by Enron for overcharging – Tax refunds (both corporate and personal) – Mundane business matters In a given matter, one might be of interest while the others are not 12/5/2011 10

- 11. Technology Enhanced Review: Speed, Predictable Costs, and Accuracy Automate any portion of the review Source Eliminate Data Duplicates & System Files 100% Non-Responsive 30% Isolation Example from a real case ontologies NR by 30% Technology Responsive Enhanced by Technology Review Enhanced (removed Review Priv by another 18%) (removed High-Speed another 7%) Manual Review 22% 3% 15% 12/5/2011 11

- 12. Example: “priv” ontology Valuable, re-usable work product Combines classifiers into concepts, into bigger concepts 12/5/2011 12

- 13. Disclaimer Detection Disclaimers can throw off attempts to detect privileged communications Prevalent throughout many companies, even on trivial communications Detect them automatically, and exclude them from searches 12/5/2011 13

- 14. Privileged by Actor Only Responsive Privileged by Actor and Term D omain of D isclaimer D etection Privileged by Term Only Privileged by D isclaimer Only 12/5/2011 14

- 15. Priv Logs Expensive - But Do NOT Have to Be In re Vioxx Products Liability Litigation (E.D. La 2007) Merck’s Priv Log had 30,000 items on it – How to Make a Judge Angry – How to Waste Client Money – How to Attract Sanctions 12/5/2011 15

- 16. Transparency of Process Discussing Review Protocols – Provide transparent, defensible, sophisticated search based on document content – Clustering, Ontologies, Analytics, and yes, sometimes Keywords too Develop search methodologies for each case – Use technology experts in consultation with case / legal experts Results verifiable by Quality Control – Defensible sampling Sophisticated Tools – Understand What They Do and Don’t Do Well – Inform Yourself, Speak to References, Consultants 12/5/2011 16

- 17. Blair &Maron: Keyword search is incomplete What the lawyers thought 100% they were finding 90% Responsive documents 80% 70% 60% 50% What they 40% actually found 30% 20% 10% 0% Predicted Obtained Blair and Maron, Communications of the ACM, 28, 1985, 289-299

- 18. Blair and Maron “It is impossibly difficult for users to predict the exact words, word combinations, and phrases that are used by all (or most) relevant documents and only (or primarily) by those documents.” Blair & Maron Study: 20% recall Lawyers picked 3 key terms, B & M found 26 more Defense: “Unfortunate incident” Plaintiff: “Disaster” Blair and Maron, Communications of the ACM, 28, 1985, 289-299

- 20. Document categorization in Legal Discovery: Computer Classification vs. Manual Review Herbert L. Roitblat, Anne Kershaw, & Patrick Oot

- 21. 1 0.95 0.9 Agreement with original 0.85 0.8 0.75 0.7 0.65 0.6 0.55 0.5 Team A Team B System C System D Manual Computer review classification 2010, JASIST Roitblat, Kershaw, &Oot,

- 22. Gold Standard

- 23. Turing test Alan Turing, 1912-1954

- 24. Substantial disagreement between Team A & Team B 28% 629 580 858 A Both B 0 500 1000 1500 2000 Responsive Documents Roitblat, Kershaw, &Oot, 2010, JASIST

- 25. Conclusion The computer systems yielded comparable level of performance relative to manual review Fewer people, less time, less cost Measure performance to evaluate

- 26. Will lawyers lose control? Computer system amplifies the intelligence of the Expert

- 27. Will lawyers lose their jobs?

- 28. Tap into the mind of an expert

- 29. Technology-Enhanced or Automated Review 12/5/2011 29

- 30. Setup Sample Responsive Non- Expert judges responsive sample Repeat as needed Model learns Model predicts Responsive Non-responsive Model categorizes all remaining documents

- 31. Predictive coding achieves much higher accuracy (Jaccard) Team A Only Team A and Team B Team B 0.304 0.281 0.415 Humans Humans and Predictive Coding Predictive Coding 0.186 0.688 0.126 Responsive Documents Data from Roitblat, et al. and an Internal OrcaTec Case Study

- 32. Why doesn’t everyone use it? • Attorneys don’t understand the technology • May not be aware of the accuracy data • May not understand how to fit into their work flow • Not in everyone’s economic interest • Acceptable to judges?

- 33. Defensible? Measure TREC Roitblat, e Roitblat Predictiv 2008 t al. Team et al. e A Team B Coding* Precision 0.210 0.197 0.183 0.899 Recall 0.555 0.488 0.539 0.873 *OrcaTec internal Result

- 34. Thank you! Herb Roitblat Sonya Sigler 770-650-7706x229 650-281-8325 herb@orcatec.com sonya@sigler.name 12/5/2011 34

Hinweis der Redaktion

- Keyword and Boolean selection / searching yielded only 20% of the responsive documents.

- OrcaTec’s performance compares very favorably to similar measures observed using teams of human reviewers and other predictive coding systems. In the TREC 2008 ad hoc task, the highest recall achieved by a system was 0.555 (i.e., 55.5% of the documents identified as relevant were retrieved; Run “wat7fuse”). The precision corresponding to that level of recall was 0.210, meaning that 21% of the retrieved documents were determined to be relevant.Roitblat, Kershaw, and Oot measured precision and recall for two human teams. Team A yielded precision of 0.197 and recall of 0.488. Team B yielded precision of 0.183 and recall of 0.539.