Alex Nauda [Nobl9] | How Not to Build an SLO Platform | InfluxDays NA 2021


Nobl9 is a Service Level Objective (SLO) platform for measuring and monitoring reliability. We will look under the hood of an SLO platform that uses InfluxDB as part of its core architecture. We’ll talk about the project, the decisions we made, the challenges we faced, the mistakes we made, and the lessons learned.

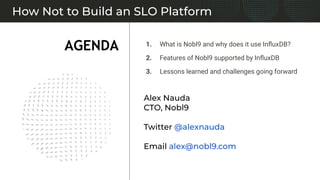

- 1. How Not to Build an SLO Platform. Alex Nauda, CTO, Nobl9 (Twitter: @alexnauda, email: alex@nobl9.com). Agenda:
  1. What is Nobl9 and why does it use InfluxDB?
  2. Features of Nobl9 supported by InfluxDB
  3. Lessons learned and challenges going forward

- 2. nobl9.com / @nobl9inc / hello@nobl9.com

- 3. Nobl9 Architecture - Black Box View (diagram). Labels: Customer Platforms and Services; App; CI/CD; Web Services; Data; New Relic; Prometheus; Datadog; Raw SLIs; SLO Config; YAML; GUI; GitOps; InfluxDB; Calculations; Error Budgets; Web App; API; SLO Based Events & Alerts; Graphs; Reports; Ops/SREs & Application Leads; PM & Business Stakeholders; Alert; Prioritize; Govern; Align; Review & Align.

- 4. Why did we consider InfluxDB in the first place?
  - Query-friendly time series database: flexible query capabilities to drive all our SLO charts and graphs
  - Deployment options: Cloud offering for our SaaS platform; Enterprise for self-hosting customers; OSS for dev environments, with good query compatibility across them all
  - Commercial support: a firm requirement both for us (managing our SaaS offering) and for our customers (self-hosting and managing Nobl9, including InfluxDB)

- 5. InfluxDB is useful across many of the core features of Nobl9. Nobl9 feature set:
  - Data Intake: receiving telemetry data from a variety of sources
  - Calculation of SLO time series: processing of telemetry data (SLIs) and math
  - Graphs and Reports: a variety of visualizations, real-time and historical
  - Alerting on SLO time series: notify various integrations based on configuration
  - Data Export: real-time and batch exports to other tools

- 6. Data Intake: Telemetry Requirements (receiving telemetry data from a variety of sources)
  - Support a wide variety of data sources, 15 and counting
    - Metrics systems
    - APM
    - RUM
    - Cloud platform built-in metrics
    - Log aggregation
    - Synthetics
    - Data warehouses
  - Integrate via agent (self-hosted sidecar) as well as direct connection (SaaS-to-SaaS)
  - Adapt to a wide variety of integration paradigms
    - API, query, push or pull, various authentication mechanisms
  - Be robust in the face of connectivity issues and operation across the internet and other networks
  - Conform to various security models at large companies (for example, support web proxies BTF)
  - Configuration-based telemetry
    - Integrate well with our SLOs-as-code paradigm
    - Customers apply changes using our web UI, CLI, Terraform provider, k8s operator

- 7. Coming Soon

- 8. Nobl9 Agent and Direct Connection Architecture (diagram). Labels: Prometheus Server; Customer’s Environment; Nobl9 Agent; Public Internet; AWS WAF; m2m Authentication; Nobl9 Intake Service; Nobl9 / AWS Cloud; Direct. Annotations:
  - Prometheus does not support authentication directly. Some users put Prom behind NGINX with HTTP Basic Auth or Client Cert Auth. N9 Agent doesn’t support any authentication.
  - Data source credentials are provided by the customer to the N9 Agent as environment variables. Credentials are not sent to Nobl9.
  - N9 Agent executes queries against metric data sources on a defined interval using the environment credentials.
  - N9 Agent polls the N9 Intake service to receive the latest configuration.
  - N9 Agent pushes data to N9.
  - N9 Intake service can only handle numeric float data types (N9 Intake cannot receive or store PII).
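The agent behavior described on this slide (fetch the latest configuration, run queries with local credentials, push only numeric values) can be sketched roughly as follows. This is an illustrative Python sketch, not Nobl9's agent code: the callables `fetch_config`, `run_query`, and `push`, and the shape of the configuration (a mapping from SLO name to query string), are hypothetical stand-ins for the real intake API and data source clients.

```python
import math
from typing import Callable, Dict, Iterable, List, Tuple

Point = Tuple[int, float]  # (unix timestamp, value); hypothetical wire shape


def keep_numeric(rows: Iterable[Tuple[int, object]]) -> List[Point]:
    """Drop anything that is not a finite number, so only floats leave the agent."""
    points: List[Point] = []
    for ts, value in rows:
        # bool is a subclass of int in Python, so exclude it explicitly.
        if isinstance(value, bool) or not isinstance(value, (int, float)):
            continue
        number = float(value)
        if math.isfinite(number):
            points.append((ts, number))
    return points


def run_cycle(
    fetch_config: Callable[[], Dict[str, str]],
    run_query: Callable[[str], Iterable[Tuple[int, object]]],
    push: Callable[[str, List[Point]], None],
) -> int:
    """One polling cycle: get config, query each source, push numeric points."""
    config = fetch_config()  # credentials stay local; only config comes down
    sent = 0
    for slo_name, query in config.items():
        points = keep_numeric(run_query(query))
        if points:
            push(slo_name, points)
            sent += len(points)
    return sent
```

In a real agent this cycle would run on the configured interval; the scheduling, retries, and authentication to the intake service are left out here.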

- 11. Data Intake: Telemetry Architecture (receiving telemetry data from a variety of sources)
  - Based on Telegraf
    - High quality data pipeline utility
    - Widely adopted, strong community
  - Extended in-house to meet our specific requirements
    - Proprietary input and output plugins
    - Doesn't send directly to InfluxDB
    - Reports data to our Data Intake REST API
    - Dynamically reloads configuration after phoning home
  - Direct connection (SaaS-to-SaaS) is special and a bit different
    - But Telegraf is still a component of it

- 12. Calculation of SLO time series: Calculation Requirements (processing of telemetry data (SLIs) and math)
  - Calculate up-to-the-minute SLOs as data arrives
  - Support a wide variety of SLO features
    - Rolling windows and calendar-aligned windows
    - Ratio metrics as well as threshold metrics
    - Occurrences-based calculation vs time slice-based calculation
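The difference between occurrences-based and time slice-based calculation is easier to see with numbers. The sketch below assumes a ratio SLI delivered as per-minute good/total counts; the `Sample` type, the function names, and the per-slice target are illustrative assumptions, not Nobl9's actual data model.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Sample:
    """One time slice of a ratio SLI: good and total event counts (hypothetical shape)."""
    good: int
    total: int


def occurrences_reliability(samples: List[Sample]) -> float:
    """Occurrences-based: good events divided by all events in the window."""
    total = sum(s.total for s in samples)
    good = sum(s.good for s in samples)
    return good / total if total else 1.0


def time_slices_reliability(samples: List[Sample], slice_target: float) -> float:
    """Time slice-based: fraction of slices whose own ratio meets slice_target."""
    if not samples:
        return 1.0
    good_slices = sum(
        1 for s in samples if s.total == 0 or s.good / s.total >= slice_target
    )
    return good_slices / len(samples)


def error_budget_remaining(reliability: float, target: float) -> float:
    """Share of the error budget still unspent (negative once it is overspent)."""
    allowed = 1.0 - target
    spent = 1.0 - reliability
    return 1.0 - spent / allowed
```

With three slices of (100 good / 100), (50 / 100), and (10 / 10), the occurrences method weights the busy bad minute heavily (160 of 210 events good), while the time slice method counts it as one bad slice out of three regardless of traffic volume.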

- 13. Calculation Design: When Your Architecture Requires a Vertically Scaled Database
  - Original version was built in InfluxDB
    - Huge prototyping win!
    - Used InfluxQL (but could have been done in Flux)
    - Queries were really intense
  - Would have to scale vertically
    - Calculating SLOs repeatedly, on the fly, is intense
    - This would be a massive database
      - Calculations are memory intensive
      - Longer SLO time windows cost more
    - Add in requirements for HA, DR… a vertically scaled database solution is not ideal

- 14. Calculation of SLO time series: Calculation Architecture (processing of telemetry data (SLIs) and math)
  - Rearchitected into custom code and Kafka
    - FIFO calculation approach
    - Maintains state, uses object storage as a backing store
    - Scales horizontally
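One way to read "FIFO calculation approach" with maintained state is an incremental rolling-window calculator: each arriving point is added to running totals, and points that fall out of the window are evicted from the front of a queue, so the SLO never has to be recomputed from scratch. The sketch below is a minimal illustration under that assumption. The class name and fields are hypothetical, points are assumed to arrive in timestamp order, and the Kafka transport and object storage persistence mentioned on the slide are omitted.

```python
from collections import deque
from typing import Deque, Tuple


class RollingRatio:
    """Running good/total ratio over a rolling time window, updated per point."""

    def __init__(self, window_seconds: int) -> None:
        self.window_seconds = window_seconds
        self.points: Deque[Tuple[int, int, int]] = deque()  # (ts, good, total)
        self.good = 0
        self.total = 0

    def add(self, ts: int, good: int, total: int) -> float:
        """Append a new point, evict expired ones, and return the current ratio."""
        self.points.append((ts, good, total))
        self.good += good
        self.total += total
        # First in, first out: the oldest points leave the window first.
        while self.points and self.points[0][0] <= ts - self.window_seconds:
            _, old_good, old_total = self.points.popleft()
            self.good -= old_good
            self.total -= old_total
        return self.good / self.total if self.total else 1.0
```

Because each update touches only the new point and the expired ones, the cost per point does not grow with the window length, and independent SLOs can be partitioned across workers, which is what makes horizontal scaling possible in a design like this.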

- 15. Graphs and Reports: Query Requirements (a variety of visualizations, real-time and historical)
  - Display up-to-the-minute data as values change (as new telemetry data arrives)
  - Report over longer time scales as well, over a year
  - Allow users to seek through the data with a time window selector
  - Support a multitude of SLOs running at once, and chart them
  - Provide a wide variety of visualizations
    - SLO detail view
    - SLO grid view (list)
    - Various historical reports
    - Summaries such as Service Health Dashboard

- 20. Graphs and Reports: Query Architecture (a variety of visualizations, real-time and historical)
  - InfluxDB underlies all of this
    - All in Flux now
    - Flexibility is sufficient for a wide variety of creative uses
  - Data granularity (resolution) is sometimes challenging in our use cases
    - We downsample data to hourly to display on longer time range graphs
    - We retain all the data in addition to the downsampled summary
    - Downsampling is done with InfluxDB Tasks
      - Requires some consideration for compatibility across InfluxDB codebases
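The talk states that downsampling runs as InfluxDB Tasks; the Python sketch below only illustrates the aggregation itself, bucketing points by hour and keeping one summary value per bucket. Using the mean as the summary is an assumption, as the slides do not say which aggregate is used.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple


def downsample_hourly(points: Iterable[Tuple[int, float]]) -> List[Tuple[int, float]]:
    """Group (unix_ts, value) points into hour buckets and average each bucket."""
    buckets: Dict[int, List[float]] = defaultdict(list)
    for ts, value in points:
        hour_start = ts - ts % 3600  # truncate the timestamp to the hour
        buckets[hour_start].append(value)
    return [
        (hour_start, sum(values) / len(values))
        for hour_start, values in sorted(buckets.items())
    ]
```

The raw points are left untouched, which matches the slide's note that all data is retained alongside the downsampled summary.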

- 21. Alerting on SLO time series: Alerting Requirements (notify various integrations based on configuration)
  - Alert on configurable conditions based on SLO time series
    - Burn rate conditions
    - Error budget exhausted or partly exhausted
  - Support a wide variety of alert methods and destinations
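A burn rate condition compares how fast errors are occurring with the rate the SLO target allows. A common definition, used here as an assumption since the slides do not spell out Nobl9's formula, is the observed error rate divided by the allowed error rate (1 minus the target). The function names and thresholds below are illustrative.

```python
def burn_rate(bad: int, total: int, target: float) -> float:
    """Observed error rate divided by the error rate the target allows."""
    if total == 0:
        return 0.0  # no traffic, so no budget is being consumed
    allowed_error_rate = 1.0 - target
    return (bad / total) / allowed_error_rate


def burn_rate_alert(bad: int, total: int, target: float, threshold: float) -> bool:
    """True when the budget is burning at or above the configured multiple."""
    return burn_rate(bad, total, target) >= threshold
```

A burn rate of 1 sustained over the whole SLO window spends the error budget exactly; with a 99.9% target, 2 bad requests out of 1,000 is a burn rate of about 2, meaning the budget would run out in roughly half the window if that rate continued.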

- 22. Alerting on SLO time series: Alerting Architecture (notify various integrations based on configuration)
  - Similar architecture to calculations
    - Custom Go code
    - Hanging off the same Kafka bus as calculations
  - Requirements on the alert method integration side are the big driver
    - Integrate with APIs of integrated alert methods
    - Webhooks, both tool-specific and a rich custom webhook

- 23. Data Export: Data Export Requirements (real-time and batch exports to other tools)
  - Batch data export
    - Export delimited files to cloud object storage or a file system
    - Import to popular data lake tooling
      - Snowflake
      - BigQuery
  - Real-time data export
    - Display SLO time series alongside other metrics
    - Incorporate SLO data in existing dashboards and visualizations
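As a rough illustration of the batch requirement, the sketch below renders an SLO time series as a comma-delimited file body that could then be uploaded to object storage and loaded into a warehouse. The column names and the ISO 8601 UTC timestamp format are assumptions; the slides do not describe Nobl9's export schema.

```python
import csv
import io
from datetime import datetime, timezone
from typing import Iterable, Tuple


def export_csv(slo_name: str, points: Iterable[Tuple[int, float]]) -> str:
    """Render (unix_ts, value) points as CSV text with a header row."""
    buffer = io.StringIO()
    writer = csv.writer(buffer, lineterminator="\n")
    writer.writerow(["slo", "timestamp", "value"])  # hypothetical column names
    for ts, value in points:
        stamp = datetime.fromtimestamp(ts, tz=timezone.utc).isoformat()
        writer.writerow([slo_name, stamp, value])
    return buffer.getvalue()
```

Writing to an in-memory buffer keeps the sketch independent of any particular storage client; the upload step and its cross-cloud authentication are the parts the talk identifies as custom work.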

- 24. Data Export: Data Export Architecture (real-time and batch exports to other tools)
  - Batch data export: custom
    - Manage authentication within and across clouds/hosting
    - Manage timing and performance of export jobs
    - Integrate with import to preferred query system
  - Real-time requirements
    - Self-hosted customers
      - Use their dedicated InfluxDB
      - Use Chronograf
      - Wire InfluxDB up to something else if they want
    - SaaS customers
      - Real-time data feed in development now
      - Will support popular destinations (another InfluxDB)

- 25. Challenges Going Forward: SLI Data Faceting, High Cardinality
  - Faceted SLOs
    - Present a global SLO for a given SLI
    - Provide the ability to drill down into that SLO along various dimensions
  - Higher cardinality
    - This data looks more like observability platform data
    - Might consider a columnar database or other similar data stores
  - Possible InfluxDB option?
    - We will be watching IOx closely to see if it could meet our needs here
  - Dimensions shown: Network / ASN; Region / Data Center; AZ / subnet; Geographic Location; Client Platform & Version; Feature / User Journey; B2C Customer; Individual User
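The cardinality concern can be made concrete with a quick upper-bound estimate: if every combination of facet values can occur, the number of distinct series per SLI is the product of the distinct values in each dimension. The dimension names echo the slide, but the counts below are made-up illustrative numbers, not Nobl9 data.

```python
from math import prod
from typing import Dict


def max_series(facet_sizes: Dict[str, int]) -> int:
    """Upper bound on series per SLI: product of distinct values per facet."""
    return prod(facet_sizes.values())


# Hypothetical sizes for three of the dimensions named on the slide.
example = {"region": 5, "client_platform_version": 40, "feature": 25}
```

Here `max_series(example)` is 5,000 series for a single SLI, and adding a per-user dimension multiplies that by the number of users, which is why this data starts to resemble observability platform data rather than a small set of SLO time series.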

- 26. Thank You. Alex Nauda, CTO, Nobl9 (Twitter: @alexnauda, email: alex@nobl9.com)