Real Time Computer Vision for Lane Level Vehicle Navigation

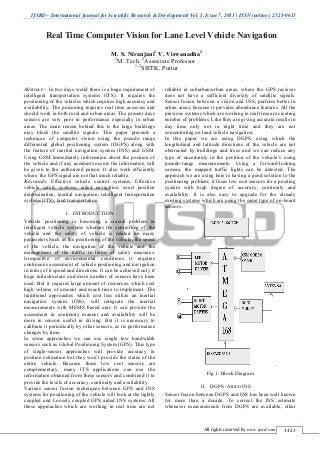

M. S. Niranjani¹, V. Viswanadha²
¹M. Tech., ²Associate Professor
¹,²SIETK, Puttur

IJSRD - International Journal for Scientific Research & Development | Vol. 1, Issue 7, 2013 | ISSN (online): 2321-0613 | (IJSRD/Vol. 1/Issue 7/2013/0012) | All rights reserved by www.ijsrd.com | p. 1423

Abstract: In today's world there is a huge demand for intelligent transportation systems (ITS). These systems require vehicle positioning with high accuracy and availability. The positioning must be accessible in real time and must work in both rural and urban areas. Present-day sensors perform poorly, especially in urban areas, mainly because large buildings may block the satellite signals. This paper presents a computer vision technique that uses the pseudorange differential global positioning system (DGPS) together with an inertial navigation system (INS) and GSM. Using GSM, information about the position of the vehicle is available immediately, and if an accident occurs the information is sent to the authorized person. The technique also works efficiently where GPS signals are not reliable.

Keywords: Effective vehicle control systems, effective vehicle safety systems, aided navigation, exact position determination, inertial navigation, intelligent transportation systems (ITS), land transportation.

I. INTRODUCTION

Vehicle positioning is becoming a crucial problem in intelligent vehicle systems, because the control and safety of a vehicle depend on many parameters, such as the position of the vehicle, its speed, its navigation, and the management of traffic in terms of safety measures. Irrespective of environmental conditions, this requires continuous assessment of the vehicle's position and navigation in terms of its speed and direction. This can be achieved only with a large infrastructure and a large number of sensors, which demands substantial resources, is costly, and takes a long time to implement.

Traditional lower-cost approaches use an inertial navigation system (INS), which integrates the inertial measurements from a MEMS-based unit. An INS provides a continuous estimate with high availability, which is useful in driving. However, it must be calibrated periodically using other sensors, because its performance changes over time. Some approaches use a single low-bandwidth sensor such as the Global Positioning System (GPS). Such single-sensor approaches provide accurate position estimates, but they do not provide the full state of the vehicle. Because these low-cost sensors are complementary, many ITS applications can combine the information obtained from them to reach the required levels of accuracy, continuity, and availability. Sensor fusion techniques between GPS and INS for vehicle positioning include tightly coupled and loosely coupled GPS-aided INS. These real-time approaches are not reliable in suburban and urban areas, where the GPS receiver does not have a sufficient diversity of satellite signals. Sensor fusion between vision and INS performs better in urban areas, because an abundance of visual features is available there.

Previous real-time systems have a number of problems. For example, they give accurate results only in the daytime and not at night, and they do not focus on land vehicle navigation. In this paper we use DGPS: satellite signals along the longitudinal and latitudinal directions of the vehicle are not obstructed by buildings and trees, so pseudorange measurements can reduce the uncertainty in the vehicle's position. Using a forward-looking camera, the mapped traffic lights can be detected.

The approach used here offers a good solution to the positioning problem: it fuses low-cost sensors into a positioning system with a high degree of accuracy, continuity, and availability. Existing systems that use the same type of on-board sensors can also be upgraded to it easily.

Fig. 1: Block Diagram

II. DGPS-AIDED INS

Sensor fusion between DGPS and INS has been well known for more than a decade. To correct the INS estimate whenever measurements from DGPS are available, other Bayesian estimation frameworks, such as the unscented Kalman filter and the particle filter, have also been suggested. The estimated position and orientation are computed in a global coordinate reference frame such as Earth-centered Earth-fixed (ECEF) or in a fixed local tangent frame. These fusion techniques are divided into the following two types: 1) loosely coupled and 2) tightly coupled.

A. Loosely Coupled DGPS-Aided INS

For loosely coupled sensor integration, the DGPS receiver computes the antenna's position and velocity in an absolute reference frame, and the measurements used by the correction framework are the processed absolute position and velocity of the DGPS receiver. When fewer than four DGPS satellites are visible, the correction step cannot be carried out, because the DGPS receiver needs to track at least four satellites to compute the absolute position and velocity. This solution is considered less robust than the tightly coupled solution, because it requires line of sight to at least four satellites. This approach also yields suboptimal state estimation, because the DGPS receiver does not provide the cross correlation between the estimated positions.

B. Tightly Coupled DGPS-Aided INS

In the tightly coupled arrangement, the raw DGPS measurements (pseudorange, Doppler shift, and carrier phase) from the receiver, for each satellite, are used to correct the INS. The correction step is useful whenever the receiver tracks at least two satellites. Although the position is not observable when fewer than four satellites are tracked, the DGPS pseudorange, Doppler, and carrier-phase (if available) measurements provide information to correct certain portions of the state vector whenever such measurements are available.
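
The idea that a single raw pseudorange can still correct part of the state can be illustrated with a small sketch. This is not the authors' implementation: it assumes a simplified four-element state (ECEF position in metres plus a receiver clock bias expressed in metres), a scalar Kalman measurement update, and made-up covariance and noise values. The function names (`predicted_pseudorange`, `measurement_row`, `pseudorange_update`) are hypothetical and chosen only for illustration.

```python
import math

def predicted_pseudorange(state, sat_pos):
    """Geometric range to the satellite plus the receiver clock bias (metres)."""
    x, y, z, clock_bias = state
    return math.dist((x, y, z), sat_pos) + clock_bias

def measurement_row(state, sat_pos):
    """Partial derivatives of the predicted pseudorange with respect to the state."""
    x, y, z, _ = state
    rng = math.dist((x, y, z), sat_pos)
    # Position part: unit vector pointing from the satellite to the receiver.
    # Clock part: the bias adds directly to the pseudorange, so the derivative is 1.
    return [(x - sat_pos[0]) / rng, (y - sat_pos[1]) / rng, (z - sat_pos[2]) / rng, 1.0]

def pseudorange_update(state, cov, sat_pos, measured, noise_var):
    """Scalar Kalman measurement update using one satellite's pseudorange."""
    n = len(state)
    h = measurement_row(state, sat_pos)
    ph = [sum(cov[i][j] * h[j] for j in range(n)) for i in range(n)]  # P h^T
    innovation_var = sum(h[i] * ph[i] for i in range(n)) + noise_var
    gain = [v / innovation_var for v in ph]
    residual = measured - predicted_pseudorange(state, sat_pos)
    new_state = [state[i] + gain[i] * residual for i in range(n)]
    # P - K h P, valid here because cov is symmetric (h P equals (P h^T)^T).
    new_cov = [[cov[i][j] - gain[i] * ph[j] for j in range(n)] for i in range(n)]
    return new_state, new_cov

# Illustrative values only (assumptions): a satellite straight along the x-axis.
state0 = [0.0, 0.0, 0.0, 0.0]
cov0 = [[25.0 if i == j else 0.0 for j in range(4)] for i in range(4)]
state1, cov1 = pseudorange_update(state0, cov0, (20_000_000.0, 0.0, 0.0),
                                  measured=20_000_003.0, noise_var=1.0)
print(state1)
print(cov1[0][0], cov1[1][1])
```

In this example only the x and clock-bias components and their variances change, while y and z are left untouched. This is the sense in which a tightly coupled filter can correct certain portions of the state with fewer than four satellites.
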
The solution computed from the tightly coupled framework provides a comparable or better result than the loosely coupled framework.

III. PROTOTYPE OF REAL-TIME NAVIGATION SYSTEM

This section describes our sensor setup and frame definitions and gives a detailed description of the vehicle navigation. The real-time navigation system reports the position and the state of the vehicle, and it alerts the appropriate authority about abnormal conditions together with the exact location of the vehicle.

A. Sensor Setup

The sensor setup on the roof of the vehicle consists of the following sensors: 1) a six-degree-of-freedom Micro Electro Mechanical Systems (MEMS) IMU; 2) a DGPS receiver that provides pseudorange, Doppler, and carrier-phase measurements; and 3) a colour camera that can be triggered by either hardware or software. The entire sensor arrangement is shown in Fig. 3. The IMU's accelerometers and gyroscopes measure accelerations and rotational rates along three orthogonal axes u, v, and w, which coincide with the orthogonal axes of the IMU inside the black box.

Fig. 2: Real Time Navigation System

The IMU is placed close to the phase centre of the DGPS receiver antenna, so that the inertial measurements from the IMU can be interpreted as readings taken at the origin of {B}; the lever-arm effect is ignored here. The colour camera is a standard digital camera that communicates over IEEE 1394 or USB 2.0. The camera can be triggered either by an external transistor-transistor logic (TTL) signal or in software through the application programming interface.

Fig. 3: Sensors are mounted on the top of the vehicle. The DGPS receiver and IMU are in the black box in the middle.

B. Frame Definitions

The following frames are illustrated in Fig. 4.
1) A navigation frame, denoted by {N}, is defined to coincide with a local tangent frame, with the axes pointing along the north, east, and down directions.
2) The body frame {B} is defined at the GPS antenna's phase centre, with the IMU's u-, v-, and w-axes mapped along its x-, y-, and z-axes.
3) A camera calibration object, which can be a 3-D object or a planar pattern, has a frame {G} attached to it; identifiable features on the object are defined with respect to the origin of {G}.

Fig. 4: Relationship between frames illustrated.

IV. EVALUATION OF MEASUREMENTS

This section describes the evaluation of the real-time measurements obtained from the DGPS and the angle-of-arrival (AOA) measurements from a camera. These measurements can also be combined to resolve the state estimation problem in urban areas.

A. DGPS Measurements

This section summarizes the DGPS observables. The measurement equations for each satellite vehicle are described, and the pseudorange measurement is illustrated to correct the rover's longitudinal position in an urban setting. Satellite geometry can affect the quality of GPS signals and the accuracy of receiver trilateration.

Fig. 5: Measurements of Real Time Differential GPS

Dilution of Precision (DOP) reflects each satellite's position relative to the other satellites being accessed by a receiver. Position Dilution of Precision (PDOP) is the DOP value most commonly used in GPS to determine the quality of a receiver's position. It is usually up to the GPS receiver to pick the satellites that provide the best position triangulation.

The basic working principle here is to find the position and coordinates of the vehicle, the distance and direction between any two waypoints (or between a position and a waypoint), and travel progress reports. Accurate time measurements can then be obtained from the true coordinates after applying DGPS corrections. The true coordinates are obtained by comparing values with the reference station, which reduces the calculation time and gives accurate results.

V. EXPERIMENTAL RESULTS

This section presents detailed results that support the concepts discussed in the previous sections.
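
Before turning to the results, the reference-station comparison from Section IV can be sketched in code. This is a minimal illustration and not the authors' system: it assumes that each satellite's pseudorange error is identical at the reference station and at the rover (a short baseline), and it ignores receiver clock biases and noise. The function names and all coordinates are hypothetical.

```python
import math

def range_corrections(ref_pos, sat_positions, ref_pseudoranges):
    """Correction per satellite: known geometric range minus measured pseudorange."""
    return [math.dist(ref_pos, sat) - rho
            for sat, rho in zip(sat_positions, ref_pseudoranges)]

def apply_corrections(rover_pseudoranges, corrections):
    """Add the reference-station corrections to the rover's pseudoranges."""
    return [rho + c for rho, c in zip(rover_pseudoranges, corrections)]

# Made-up coordinates in metres, for illustration only (assumptions).
sats = [(20_000_000.0, 0.0, 0.0), (0.0, 20_000_000.0, 0.0), (0.0, 0.0, 20_000_000.0)]
ref_pos = (1_000.0, 2_000.0, 3_000.0)      # surveyed reference station
rover_pos = (1_500.0, 2_200.0, 3_100.0)    # true rover position (unknown in practice)
common_error = [12.0, -7.5, 3.2]           # error shared by both receivers, per satellite

# Simulated measurements: true range plus the shared error.
ref_meas = [math.dist(ref_pos, s) + e for s, e in zip(sats, common_error)]
rover_meas = [math.dist(rover_pos, s) + e for s, e in zip(sats, common_error)]

corrected = apply_corrections(rover_meas, range_corrections(ref_pos, sats, ref_meas))
true_ranges = [math.dist(rover_pos, s) for s in sats]
print([round(c - t, 6) for c, t in zip(corrected, true_ranges)])
```

Because the reference station's position is known, any difference between its measured pseudorange and the geometric range is attributed to the shared error, and adding that difference to the rover's measurements cancels it. In practice the cancellation is only approximate, since the errors at the two receivers are not exactly equal.
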
The prototype, which is placed on the roof of the vehicle, is shown in Fig. 2. The u-axis points toward the front of the vehicle. The DGPS receiver, along with the IMU, is placed inside the black box in the middle. It receives the signals from the satellites and measures the position of the vehicle, and according to the route given by the user the vehicle changes its position and navigates along the path. If an obstacle appears, the IR sensors detect it automatically, and the vehicle backs up for two seconds and then turns right. We have used IR sensors in three directions, so that the vehicle navigates along the obstacle-free route in those directions. If the vehicle stops suddenly because of an abnormal condition or an accident, a message is sent immediately to the concerned authority. The details of the authority must be provided beforehand, so that the longitude and latitude of the vehicle can be sent to that authority. The position results are accurate to within a few metres.

A. DGPS-Aided INS Results

This section examines the location of the vehicle using the INS/DGPS state estimate during the time when both the GPS values and the camera images are available. In the first phase we use only the pseudorange measurements rather than the entire set of outputs. The plots for the traffic light images are shown in Fig. 5. The first column shows the magnified plots in both rows and columns. Before the Kalman filter was applied, they did not have zero mean. Some drift errors occurred while the vehicle was stationary. The position of the vehicle and the standard deviation computed using the EKF are shown in Fig. 6, in the top three rows with blue plots. These plots show the small uncertainty that results when only DGPS-aided INS is used to calculate the position of the vehicle in lane-level navigation.
The error is nevertheless tolerable, at less than 1.2 m. On close inspection, the accuracy in the down component is lower than in the east and north components, which is quite normal for DGPS measurements. A further limitation of using only DGPS pseudorange measurements to correct the inertial navigation system is that yaw cannot be observed while the vehicle is stationary. This is shown in the fourth row of Fig. 6. The yaw angle of the DGPS-aided solution drifts, and when the vehicle halts suddenly at red lights the standard deviation rises for both pseudorange and carrier aiding.

Fig. 5: Results in the first column are obtained using only DGPS-aided INS. The next column shows the results using vision/DGPS-aided INS, that is, vision with pseudorange aiding.

Fig. 6: The first three rows of the first column show the positional errors with DGPS aiding and with vision/DGPS aiding, where CDGPS aiding is used as the reference path. The first three rows of the second column show the positional standard deviations. The last row shows the comparison of the yaw angle and its standard deviation.

VI. CONCLUSION

Real-time vehicle navigation requires the exact position of the vehicle, and the availability of the system should be high in most ITS applications, in particular in control, safety, and traffic management applications. Much research has been done on these types of applications using global navigation satellite systems to aid an INS. The results are good in rural areas, in the daytime, and in clear weather, but not in urban areas. Some applications are available for urban areas too, but for aircraft and spacecraft; they have not been implemented for road transportation. This paper addresses real-time surface vehicle navigation in urban areas. For this we have used tightly coupled DGPS-aided INS, which combines the DGPS measurements with the images of the traffic lights, so that the exact position can be computed in terms of longitude and latitude. Navigation can therefore be carried out with positional errors resolved and with high accuracy.