Improving Language Modeling using Densely Connected Recurrent Neural Networks

Poster presented at the Workshop on Representation Learning at ACL 2017

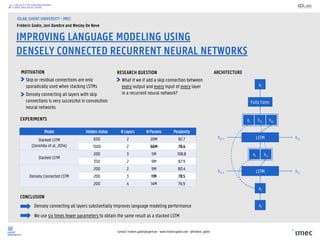

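IMPROVING LANGUAGE MODELING USING DENSELY CONNECTED RECURRENT NEURAL NETWORKS

Fréderic Godin, Joni Dambre and Wesley De Neve
IDLAB, GHENT UNIVERSITY - IMEC
Contact: Frederic.godin@ugent.be, www.fredericgodin.com, @frederic_godin

MOTIVATION

- Densely connecting all layers with skip connections is very successful in convolutional neural networks.
- Skip or residual connections are only sporadically used when stacking LSTMs.

RESEARCH QUESTION

What if we add a skip connection between every output and every input of every layer in a recurrent neural network?

ARCHITECTURE

[Figure: two-layer densely connected LSTM. The input x_t is embedded as e_t. The first LSTM receives e_t and its previous state h_{1,t-1} and produces h_{1,t}. The second LSTM receives [e_t; h_{1,t}] and its previous state h_{2,t-1} and produces h_{2,t}. The fully connected output layer receives [e_t; h_{1,t}; h_{2,t}] and produces y_t.]

EXPERIMENTS

| Model | Hidden states | # Layers | # Params | Perplexity |
|---|---|---|---|---|
| Stacked LSTM (Zaremba et al., 2014) | 650 | 2 | 20M | 82.7 |
| Stacked LSTM (Zaremba et al., 2014) | 1500 | 2 | 66M | 78.4 |
| Stacked LSTM | 200 | 3 | 5M | 108.8 |
| Stacked LSTM | 350 | 2 | 9M | 87.9 |
| Densely Connected LSTM | 200 | 2 | 9M | 80.4 |
| Densely Connected LSTM | 200 | 3 | 11M | 78.5 |
| Densely Connected LSTM | 200 | 4 | 14M | 76.9 |

CONCLUSION

- Densely connecting all layers substantially improves language modeling performance.
- We use six times fewer parameters to obtain the same result as a stacked LSTM (11M parameters at perplexity 78.5, versus 66M at 78.4).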
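To make the parameter counts in the experiments concrete, the following is a minimal sketch of how dense connectivity widens each layer's input and how that affects the total model size. Several things here are assumptions and not stated on the poster: a vocabulary of 10,000 words, an embedding size equal to the hidden size, one bias vector per LSTM gate, and an untied output layer with bias. The function names `lstm_params` and `count_params` are hypothetical. Under these assumptions, the counts round to the figures reported in the table.

```python
def lstm_params(input_size: int, hidden_size: int) -> int:
    """Parameters of one LSTM layer: 4 gates, each with input weights,
    recurrent weights and one bias vector (assumed convention)."""
    return 4 * hidden_size * (input_size + hidden_size + 1)


def count_params(hidden_size: int, num_layers: int, dense: bool,
                 vocab_size: int = 10_000, embed_size=None) -> int:
    """Rough parameter count for a stacked or densely connected LSTM LM.

    vocab_size and embed_size are assumptions, not values from the poster.
    """
    if embed_size is None:
        embed_size = hidden_size  # assumption: embedding size == hidden size
    total = vocab_size * embed_size  # embedding table
    features = embed_size            # width of the input to the next layer
    for _ in range(num_layers):
        total += lstm_params(features, hidden_size)
        if dense:
            # Dense: the next layer sees the embedding and all earlier outputs.
            features += hidden_size
        else:
            # Stacked: the next layer sees only the previous layer's output.
            features = hidden_size
    total += features * vocab_size + vocab_size  # output layer with bias
    return total


if __name__ == "__main__":
    configs = [("stacked", 200, 3, False), ("stacked", 350, 2, False),
               ("dense", 200, 2, True), ("dense", 200, 3, True),
               ("dense", 200, 4, True)]
    for name, hidden, layers, dense in configs:
        millions = count_params(hidden, layers, dense) / 1e6
        print(f"{name:8s} hidden={hidden} layers={layers}: {millions:.1f}M")
```

In the dense variant most of the extra parameters sit in the output layer, whose input grows by one hidden-state width per added LSTM layer.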