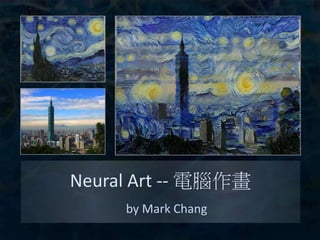

NeuralArt: Computer Painting

An introduction to "A Neural Algorithm of Artistic Style". Spoken walkthrough: https://www.youtube.com/watch?v=qzGuYuCpy1M

- 1. Neural Art: Computer Painting, by Mark Chang

- 2. A Neural Algorithm of Artistic Style • Authors: – Leon A. Gatys – Alexander S. Ecker – Matthias Bethge • Affiliations: – Werner Reichardt Centre for Integrative Neuroscience and Institute of Theoretical Physics, University of Tübingen, Germany – Bernstein Center for Computational Neuroscience, Tübingen, Germany

- 3. How is a painting made? (diagram labels: brain, painter, scene, painting style, artwork, computer, artificial neural network)

- 4. Outline • Human vision • Computer vision • Computer painting • Gallery of results

- 5. Human vision • Neurons • Visual pathway • Illusions

- 6. Neurons • Neuron (diagram labels: dendrite, cell body, axon) • Action potential (plot labels: time, voltage, threshold)

- 7. Visual pathway (diagram labels: retina, visual area V1, visual area V4, inferior temporal gyrus (IT))

- 8. Visual pathway (diagram labels: visual area V1, visual area V4, inferior temporal gyrus (IT), receptive fields)

- 9. Illusions

- 10. Computer vision • Neural Networks • Convolutional Neural Networks • VGG 19

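- 11. Neural Networks • Neuron $n$ with inputs $x_{1}$, $x_{2}$, bias input $b$, weights $w_{1}$, $w_{2}$, $w_{b}$, and output $y$ • $n_{in} = w_{1} x_{1} + w_{2} x_{2} + w_{b}$ • Sigmoid: $n_{out} = \frac{1}{1+e^{-n_{in}}}$ • Rectified linear: $n_{out} = \begin{cases} n_{in} & \text{if } n_{in} > 0 \\ 0 & \text{otherwise} \end{cases}$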
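The single-neuron formulas on this slide can be sketched in a few lines of Python. This is a minimal illustration only; the function names and the example input and weight values are assumptions, not taken from the slides.

```python
import math


def neuron_input(x1, x2, w1, w2, wb):
    """Weighted sum n_in = w1*x1 + w2*x2 + wb (the bias input is fixed at 1)."""
    return w1 * x1 + w2 * x2 + wb


def sigmoid(n_in):
    """Sigmoid activation: 1 / (1 + e^(-n_in))."""
    return 1.0 / (1.0 + math.exp(-n_in))


def rectified_linear(n_in):
    """Rectified linear activation: n_in if n_in > 0, otherwise 0."""
    return n_in if n_in > 0 else 0.0


# Hypothetical example values, chosen so the weighted sum is exactly zero.
n_in = neuron_input(x1=1.0, x2=2.0, w1=0.5, w2=-0.25, wb=0.0)
print(n_in, sigmoid(n_in), rectified_linear(n_in))
```

The two activations differ mainly for negative inputs: the sigmoid squashes them towards 0 smoothly, while the rectified linear unit clips them to exactly 0.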

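- 12. Neural Networks • Input layer: $x$, $y$, and bias $b$ • Hidden layer: $n_{11}$, $n_{12}$, with weights $W_{11,x}$, $W_{11,y}$, $W_{11,b}$, $W_{12,x}$, $W_{12,y}$, $W_{12,b}$ • Output layer: $n_{21}$, $n_{22}$ (outputs $z_{1}$, $z_{2}$), with weights $W_{21,11}$, $W_{21,12}$, $W_{21,b}$, $W_{22,11}$, $W_{22,12}$, $W_{22,b}$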
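A forward pass through this two-layer network might look as follows. The weight names mirror the labels on the slide. Using a sigmoid at every neuron and the all-zero example weights are assumptions made for illustration.

```python
import math


def sigmoid(v):
    return 1.0 / (1.0 + math.exp(-v))


def forward(x, y, W):
    """Compute outputs z1, z2 from inputs x, y; W maps weight labels to values."""
    # Hidden layer: each neuron sees both inputs plus a bias weight.
    n11 = sigmoid(W["11,x"] * x + W["11,y"] * y + W["11,b"])
    n12 = sigmoid(W["12,x"] * x + W["12,y"] * y + W["12,b"])
    # Output layer: each neuron sees both hidden activations plus a bias weight.
    z1 = sigmoid(W["21,11"] * n11 + W["21,12"] * n12 + W["21,b"])
    z2 = sigmoid(W["22,11"] * n11 + W["22,12"] * n12 + W["22,b"])
    return z1, z2


WEIGHT_NAMES = [
    "11,x", "11,y", "11,b", "12,x", "12,y", "12,b",
    "21,11", "21,12", "21,b", "22,11", "22,12", "22,b",
]
# Hypothetical weights: with all weights at zero every neuron outputs sigmoid(0).
W = {name: 0.0 for name in WEIGHT_NAMES}
z1, z2 = forward(1.0, -1.0, W)
print(z1, z2)
```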

- 13. Convolutional Neural Networks • Convolutional layer (diagram labels: width, height, depth, weights, shared weight)

- 14. Convolutional Neural Networks • Stride (examples: stride = 1, stride = 2) • Padding (examples: padding = 0, padding = 1)
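How stride and padding change the size of a convolutional layer's output can be sketched with the standard output-size formula, (input - filter + 2 * padding) / stride + 1. The slide does not state this formula or the input and filter sizes; the function name and the 5-wide input with a 3-wide filter are assumptions for illustration.

```python
def conv_output_size(input_size, filter_size, stride, padding):
    """Number of filter positions along one dimension of a convolutional layer."""
    span = input_size - filter_size + 2 * padding
    if span < 0 or span % stride != 0:
        raise ValueError("filter does not tile the padded input with this stride")
    return span // stride + 1


# Hypothetical sizes: a 5-wide input and a 3-wide filter.
for stride, padding in [(1, 0), (2, 0), (1, 1), (2, 1)]:
    size = conv_output_size(5, 3, stride, padding)
    print(f"stride={stride} padding={padding} -> output size {size}")
```

With a 3-wide filter, padding = 1 and stride = 1 keep the output the same width as the input, which is the configuration very deep networks typically rely on to avoid shrinking the image at every layer.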

- 15. Convolutional Neural Networks • Pooling layer: no overlap, no padding, no weights, depth = 1 • Input (4 × 4, read row by row): [1 3 2 4; 5 7 6 8; 0 0 4 4; 6 6 0 0] • Maximum pooling: [7 8; 6 4] • Average pooling: [4 5; 3 2]
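The pooling example on this slide can be reproduced with a short sketch. It assumes the sixteen input values form a 4 × 4 grid read row by row and that the pooling window is 2 × 2 with no overlap; the helper names are illustrative.

```python
def pool(grid, size, reduce_fn):
    """Apply reduce_fn to each non-overlapping size x size block of grid."""
    rows, cols = len(grid), len(grid[0])
    return [
        [
            reduce_fn([grid[r + dr][c + dc] for dr in range(size) for dc in range(size)])
            for c in range(0, cols, size)
        ]
        for r in range(0, rows, size)
    ]


def mean(values):
    return sum(values) / len(values)


grid = [
    [1, 3, 2, 4],
    [5, 7, 6, 8],
    [0, 0, 4, 4],
    [6, 6, 0, 0],
]
max_pooled = pool(grid, 2, max)
avg_pooled = pool(grid, 2, mean)
print(max_pooled)
print(avg_pooled)
```

Both results match the values shown on the slide, and the layer has nothing to learn: the reduction is fixed, which is what "no weights" refers to.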

- 16. Convolutional Neural Networks (diagram labels: input layer, convolutional layers, pooling layers, receptive fields)

- 17. Convolutional Neural Networks (diagram labels: input image, input layer, convolutional layer with receptive fields, max-pooling layer, width = 3, height = 3, filter responses)

- 18. VGG 19 • Karen Simonyan & Andrew Zisserman. Very Deep Convolutional Networks for Large-Scale Image Recognition. • ImageNet Challenge 2014 • 19 (+5) layers – 16 convolutional layers (width = 3, height = 3) – 5 max-pooling layers (width = 2, height = 2) – 3 fully-connected layers

- 19. VGG 19 • depth = 64: conv1_1, conv1_2, maxpool • depth = 128: conv2_1, conv2_2, maxpool • depth = 256: conv3_1, conv3_2, conv3_3, conv3_4, maxpool • depth = 512: conv4_1, conv4_2, conv4_3, conv4_4, maxpool • depth = 512: conv5_1, conv5_2, conv5_3, conv5_4, maxpool • size = 4096: FC1, FC2 • size = 1000: softmax
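The layer layout above can be written down as a small table and checked against the "19 (+5) layers" count. This is only a bookkeeping sketch: the constant and function names and the `maxpoolN` labels are assumptions, and no actual network is built.

```python
# (depth, number of convolutional layers) for each of the five blocks on the slide.
VGG19_BLOCKS = [(64, 2), (128, 2), (256, 4), (512, 4), (512, 4)]


def vgg19_layer_names():
    """List layer names in order: conv layers per block, then one max-pooling layer."""
    names = []
    for block, (_depth, n_convs) in enumerate(VGG19_BLOCKS, start=1):
        names += [f"conv{block}_{i}" for i in range(1, n_convs + 1)]
        names.append(f"maxpool{block}")  # hypothetical label; the slide says "maxpool"
    # Two 4096-wide fully-connected layers, then the 1000-wide softmax output layer.
    return names + ["FC1", "FC2", "softmax"]


names = vgg19_layer_names()
n_conv = sum(name.startswith("conv") for name in names)
n_pool = sum(name.startswith("maxpool") for name in names)
print(n_conv, n_pool, len(names))
```

The 16 convolutional layers plus the 3 fully-connected layers give the 19 weighted layers in the name, and the 5 max-pooling layers are the "+5".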

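- 20. Computer painting • Content generation • Style generation • Artwork generation

- 21. Content generation (diagram labels: painter, brain, scene, canvas, neural responses, compute the difference between the two, fill in lines and colors)

- 22. Content generation (diagram labels: photo, canvas, VGG19, filter responses of size Depth × Width*Height, compute the difference between the two, correct the difference, corrected canvas)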

- 23. Content generation • Input photo: $\textbf{p}$, with layer $l$'s filter responses $P^{l}$ • Input canvas: $\textbf{x}$, with layer $l$'s filter responses $X^{l}$ • Each response matrix is indexed by depth ($i$) and Width*Height ($j$) • $L_{content}(\textbf{p},\textbf{x},l) = \frac{1}{2} \sum_{i,j}(X^{l}_{i,j}-P^{l}_{i,j})^2$ • $\dfrac{\partial L_{content}(\textbf{p},\textbf{x},l)}{\partial X^{l}_{i,j}} = X^{l}_{i,j}-P^{l}_{i,j}$
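The content loss and its derivative with respect to the canvas responses can be sketched with NumPy. The 2 × 2 response matrices below are made-up values for illustration, not outputs of VGG19.

```python
import numpy as np


def content_loss(X, P):
    """L_content = 1/2 * sum over i, j of (X[i, j] - P[i, j])^2."""
    return 0.5 * np.sum((X - P) ** 2)


def content_loss_grad(X, P):
    """Derivative of L_content with respect to each X[i, j]."""
    return X - P


# Hypothetical filter responses (rows: depth i, columns: position j).
P = np.array([[1.0, 2.0], [3.0, 4.0]])  # photo
X = np.array([[1.5, 2.0], [2.0, 4.0]])  # canvas
loss = content_loss(X, P)
grad = content_loss_grad(X, P)
print(loss)
print(grad)
```

Entries where the canvas already matches the photo contribute zero gradient, so only the mismatched responses pull on the canvas.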

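- 24. Content generation • Backward propagation through VGG19, from layer $l$'s filter responses $X^{l}$ to the input canvas $\textbf{x}$: $\dfrac{\partial L_{content}}{\partial \textbf{x}} = \dfrac{\partial L_{content}}{\partial X^{l}} \dfrac{\partial X^{l}}{\partial \textbf{x}}$ • Update canvas with learning rate $\eta$: $\textbf{x} \leftarrow \textbf{x} - \eta \dfrac{\partial L_{content}}{\partial \textbf{x}}$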
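The chain rule and the canvas update can be illustrated with a toy stand-in for VGG19. Here the "network" is assumed to be a single fixed linear map, $X^{l} = W\textbf{x}$, so that $\partial X^{l} / \partial \textbf{x}$ is simply $W$. The matrix `W`, the vector sizes, the learning rate, and the step count are all hypothetical; a real implementation would backpropagate through the actual network.

```python
import numpy as np

rng = np.random.default_rng(0)
W = 0.5 * rng.standard_normal((4, 6))  # hypothetical stand-in for VGG19 up to layer l
photo = rng.standard_normal(6)
canvas = rng.standard_normal(6)
P = W @ photo  # the photo's "filter responses"


def content_loss(x):
    return 0.5 * np.sum((W @ x - P) ** 2)


eta = 0.05  # learning rate
losses = [content_loss(canvas)]
for _ in range(100):
    grad_X = W @ canvas - P      # dL/dX = X - P
    grad_x = W.T @ grad_X        # chain rule: dL/dx = (dX/dx)^T dL/dX
    canvas = canvas - eta * grad_x
    losses.append(content_loss(canvas))

print(losses[0], losses[-1])
```

Only the canvas is updated; the weights of the feature extractor stay fixed throughout, which is the key difference from ordinary network training.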

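- 25. Content generation

- 26. Content generation (VGG19 layers shown: conv1_2, conv2_2, conv3_4, conv4_4, conv5_2, conv5_1)

- 27. Style generation • "Style" is position-independent (diagram label: style extraction)

- 28. Style generation (diagram labels: artwork, VGG19, filter responses of size Depth × Width*Height (position-dependent), G, Gram matrix of size Depth × Depth (position-independent))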

- 29. Style generation • Layer $l$'s filter responses $F^{l}_{1}, F^{l}_{2}, F^{l}_{3}, F^{l}_{4}$ (Depth × Width*Height) are mapped by $G$ to the Gram matrix (Depth × Depth); diagram labels: $k_{1}$, $k_{2}$ • $G^{l}_{i,j} = F^{l}_{i} \cdot F^{l}_{j}$ • Example: $G^{l}_{4,1} = F^{l}_{4} \cdot F^{l}_{1} = 1 \times 1 + 0 \times 0.5 + 0 \times 0 + \dots = 1$
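The Gram matrix computation, and the sense in which it is position-independent, can be sketched as follows. The 4 × 3 response matrix is a made-up example (chosen so that $G_{4,1}$ comes out as 1, as in the slide's worked example), not the figure's actual values.

```python
import numpy as np


def gram_matrix(F):
    """G[i, j] = F[i] . F[j], for F of shape (depth, width*height)."""
    return F @ F.T


# Hypothetical filter responses: 4 filters (rows) over 3 positions (columns).
F = np.array([
    [1.0, 0.5, 0.0],
    [0.0, 1.0, 0.0],
    [0.5, 0.5, 1.0],
    [1.0, 0.0, 0.0],
])
G = gram_matrix(F)

# Shuffling the positions (columns) leaves every dot product unchanged.
G_shuffled = gram_matrix(F[:, ::-1])

print(G[3, 0])                      # G_{4,1} with 0-based indexing
print(np.allclose(G, G_shuffled))
```

Because each entry sums over all positions, the Gram matrix records which filters fire together but not where, which is why it serves as a description of style rather than content.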

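- 30. Style generation (diagram labels: style artwork, canvas, VGG19, filter responses, G, Gram matrix, compute the difference between the two, correct the difference, corrected canvas)

- 31. Style generation • Input artwork: $\textbf{a}$, with layer $l$'s Gram matrix $A^{l}_{i,j}$ • Input canvas: $\textbf{x}$, with layer $l$'s filter responses $F^{l}_{i,j}$ and Gram matrix $X^{l}_{i,j}$ • $L_{style}(\textbf{a},\textbf{x}, l) = \frac{1}{2} \sum_{i,j}(X^{l}_{i,j}-A^{l}_{i,j})^2$ • $\dfrac{\partial L_{style}(\textbf{a},\textbf{x},l)}{\partial F^{l}_{i,j}} = ((F^{l})^{T} (X^{l}-A^{l}))_{j,i}$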
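The style loss and its derivative can be checked numerically with a small sketch. The random 3 × 5 matrices are hypothetical stand-ins for real filter responses. One observation from this check, stated under the assumption that the loss is used exactly as written with the factor 1/2: the finite-difference derivative comes out as twice the slide's matrix expression, a constant factor that the learning rate can absorb.

```python
import numpy as np


def gram_matrix(F):
    return F @ F.T


def style_loss(F, A):
    """L_style = 1/2 * sum over i, j of (X[i, j] - A[i, j])^2, with X the Gram matrix of F."""
    X = gram_matrix(F)
    return 0.5 * np.sum((X - A) ** 2)


def slide_expression(F, A):
    """((F)^T (X - A))_{j,i}, arranged as a depth x positions matrix."""
    X = gram_matrix(F)
    return (F.T @ (X - A)).T


def numerical_grad(F, A, eps=1e-6):
    """Central-difference estimate of dL_style/dF, one entry at a time."""
    grad = np.zeros_like(F)
    for idx in np.ndindex(F.shape):
        step = np.zeros_like(F)
        step[idx] = eps
        grad[idx] = (style_loss(F + step, A) - style_loss(F - step, A)) / (2 * eps)
    return grad


rng = np.random.default_rng(0)
F = rng.standard_normal((3, 5))                # hypothetical canvas responses
A = gram_matrix(rng.standard_normal((3, 5)))   # hypothetical artwork Gram matrix

matches_twice_slide = np.allclose(
    numerical_grad(F, A), 2.0 * slide_expression(F, A), rtol=1e-4, atol=1e-5
)
print(matches_twice_slide)
```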

- 32. Style generation

- 33. Style generation • VGG19 layers used: – Conv1_1 – Conv1_1, Conv2_1 – Conv1_1, Conv2_1, Conv3_1 – Conv1_1, Conv2_1, Conv3_1, Conv4_1 – Conv1_1, Conv2_1, Conv3_1, Conv4_1, Conv5_1

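- 34. Artwork generation • The canvas $\textbf{x}$ passes through VGG19: its filter responses give $L_{content}(\textbf{p},\textbf{x})$ and its Gram matrices give $L_{style}(\textbf{a},\textbf{x})$ • $L_{total} = \alpha L_{content} + (1-\alpha) L_{style}$ • Update canvas: $\textbf{x} \leftarrow \textbf{x} - \eta \dfrac{\partial L_{total}}{\partial \textbf{x}}$

- 35. Artwork generation • $L_{content}(\textbf{p},\textbf{x})$: VGG19 layer Conv4_2 • $L_{style}(\textbf{a},\textbf{x})$: VGG19 layers Conv1_1, Conv2_1, Conv3_1, Conv4_1, Conv5_1 • $L_{total} = \alpha L_{content} + (1-\alpha) L_{style}$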
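Combining the two losses and descending on the canvas can be sketched end to end with a toy feature extractor. Several things here are assumptions rather than the talk's method: VGG19 is replaced by one fixed linear map `W`, a single layer serves both losses, the weighting follows the $\alpha$ and $(1-\alpha)$ form given in the editor's notes, and the sizes, learning rate, and step count are arbitrary. The style gradient is taken as $2(X-A)F$, the derivative of the loss as written.

```python
import numpy as np

rng = np.random.default_rng(0)
W = 0.5 * rng.standard_normal((3, 3))  # hypothetical stand-in for VGG19
photo, artwork, canvas = (rng.standard_normal((3, 4)) for _ in range(3))


def features(image):
    """Toy filter responses of shape (depth, positions)."""
    return W @ image


def gram(F):
    return F @ F.T


P = features(photo)          # content target
A = gram(features(artwork))  # style target


def total_loss_and_grad(x, alpha):
    F = features(x)
    X = gram(F)
    l_content = 0.5 * np.sum((F - P) ** 2)
    l_style = 0.5 * np.sum((X - A) ** 2)
    # Gradient with respect to the responses, then back to the canvas via W^T.
    grad_F = alpha * (F - P) + (1.0 - alpha) * 2.0 * (X - A) @ F
    return alpha * l_content + (1.0 - alpha) * l_style, W.T @ grad_F


alpha, eta = 0.15, 5e-4
initial_loss, _ = total_loss_and_grad(canvas, alpha)
for _ in range(200):
    _, grad = total_loss_and_grad(canvas, alpha)
    canvas = canvas - eta * grad  # x <- x - eta * dL_total/dx
final_loss, _ = total_loss_and_grad(canvas, alpha)
print(initial_loss, final_loss)
```

Raising `alpha` shifts the result towards the photo's content, lowering it towards the artwork's style.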

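- 36. Artwork generation

- 37. Gallery of results • Content vs. style • Different initial states • Different VGG layers • Sketch and watercolor • Painting in poetry, poetry in painting

- 38. Content vs. style • $\alpha$ = 0.15, 0.05, 0.02, 0.007

- 39. Different initial states • noise • 0.9 * noise + 0.1 * photo • photo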
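The three starting canvases on this slide are all blends of noise and the photo, which a single helper can express. The function name, the uniform noise distribution, and the stand-in photo are assumptions; the slide only gives the blend weights.

```python
import numpy as np


def initial_canvas(photo, noise_weight, rng):
    """Blend random noise with the photo: noise_weight * noise + (1 - noise_weight) * photo."""
    noise = rng.uniform(0.0, 1.0, size=photo.shape)  # assumed noise distribution
    return noise_weight * noise + (1.0 - noise_weight) * photo


rng = np.random.default_rng(0)
photo = rng.uniform(0.0, 1.0, size=(8, 8, 3))  # hypothetical stand-in for a real image

from_noise = initial_canvas(photo, 1.0, rng)   # "noise"
from_blend = initial_canvas(photo, 0.9, rng)   # "0.9 * noise + 0.1 * photo"
from_photo = initial_canvas(photo, 0.0, rng)   # "photo"
print(from_noise.shape, from_blend.shape, from_photo.shape)
```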

- 40. Different VGG layers ($\alpha$ = 0.002) • Conv1_1 • Conv1_1, Conv2_1 • Conv1_1, Conv2_1, Conv3_1 • Conv1_1, Conv2_1, Conv3_1, Conv4_1 • Conv1_1, Conv2_1, Conv3_1, Conv4_1, Conv5_1

- 41. Sketch and watercolor

- 42. Painting in poetry, poetry in painting

- 43. Further reading • A Neural Algorithm of Artistic Style – http://arxiv.org/abs/1508.06576 • Texture Synthesis Using Convolutional Neural Networks – http://arxiv.org/abs/1505.07376 • Convolutional Neural Network – http://cs231n.github.io/convolutional-networks/ • Neural Network Back Propagation – http://cpmarkchang.logdown.com/posts/277349-neural-network-backward-propagation • Computer-generated poetry – http://www.slideshare.net/ckmarkohchang/computational-poetry

- 44. Source code • Python Tensorflow – https://github.com/ckmarkoh/neuralart_tensorflow • Python Theano – https://github.com/woonketwong/artify • Python Theano (ipython notebook) – https://github.com/Lasagne/Recipes/blob/master/examples/styletransfer/Art%20Style%20Transfer.ipynb • Python deeppy – https://github.com/andersbll/neural_artistic_style

- 45. Image sources • http://www.taipei-101.com.tw/upload/news/201502/2015021711505431705145.JPG • https://github.com/andersbll/neural_artistic_style/blob/master/images/starry_night.jpg?raw=true

- 46. Special thanks • imlab, Department of Computer Science and Information Engineering, National Taiwan University

Editor's notes

- $n_{in} = w_{1} x_{1} + w_{2} x_{2} + w_{b}$; $n_{out} = \frac{1}{1+e^{-n_{in}}}$

- $L_{content}(\textbf{p},\textbf{x}, l) = \frac{1}{2} \sum_{i,j}(X^{l}_{i,j}-P^{l}_{i,j})^2$; $\dfrac{\partial L_{content}(\textbf{p},\textbf{x},l)}{\partial X^{l}_{i,j}} = X^{l}_{i,j}-P^{l}_{i,j}$; $X^{l}$

- $\textbf{x} \leftarrow \textbf{x} - \eta \dfrac{\partial L_{content}}{\partial \textbf{x}}$

- $G^{l}_{i,j} = F^{l}_{i} \cdot F^{l}_{j}$; $G^{l}_{4,1} = F^{l}_{4} \cdot F^{l}_{1} = 1 \times 1 + 0 \times 0.5 + 0 \times 0 + \dots = 1$

- $A^{l}_{i,j}$; $L_{style}(\textbf{a},\textbf{x}, l) = \frac{1}{2} \sum_{i,j}(X^{l}_{i,j}-A^{l}_{i,j})^2$; $\dfrac{\partial L_{style}(\textbf{a},\textbf{x},l)}{\partial F^{l}_{i,j}} = ((F^{l})^{T} (X^{l}-A^{l}))_{j,i}$

- $L_{style}$; $L_{total} = \alpha L_{content} + (1-\alpha) L_{style}$; $\dfrac{\partial L_{total}}{\partial \textbf{x}}$; $\textbf{x} \leftarrow \textbf{x} - \eta \dfrac{\partial L_{total}}{\partial \textbf{x}}$; $L_{style}(\textbf{a},\textbf{x})$; $L_{content}(\textbf{p},\textbf{x})$