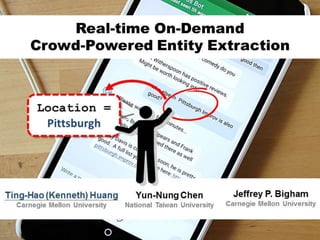

Real-time On-Demand Crowd-powered Entity Extraction

- 2. Chorus: A Crowd-powered Conversational Assistant. Ting-Hao K. Huang, Walter S. Lasecki, Amos Azaria, Jeffrey P. Bigham. "Is there anything else I can help you with?": Challenges in Deploying an On-Demand Crowd-Powered Conversational Agent. HCOMP’16.

- 3. Guardian: A Crowd-Powered Dialog System for Web APIs. Ting-Hao K. Huang, Walter S. Lasecki, Jeffrey P. Bigham. Guardian: A Crowd-Powered Spoken Dialog System for Web APIs. HCOMP’15.

- 5. Time-Limited Output-Agreement Mechanism. [Diagram: the query "Sunday flights from New York City to Las Vegas" goes to recruited players under a time constraint; the answer aggregate yields "Destination: Las Vegas".]

- 7. We Want to Know More! How fast? How many players? How good? Trade-offs?

- 8. 3 Variables: (1) Number of Players, (2) Time Constraint, (3) Answer Aggregate Method. [Diagram: the query "Sunday flights from New York City to Las Vegas" goes to recruited players under a time constraint; the answer aggregate yields "Destination: Las Vegas".]

- 9. Aggregate Method 1: ESP Only. [Timeline diagram with two cases: "ESP Answers do NOT Match" (result: "Empty Label") and "ESP Answer Matches"; horizontal axis: Time.]

- 10. Aggregate Method 2: 1st Only. [Timeline diagram with two cases: "ESP Answers do NOT Match" and "ESP Answer Matches"; horizontal axis: Time.]

- 11. Aggregate Method 3: ESP + 1st. [Timeline diagram with two cases: "ESP Answers do NOT Match" and "ESP Answer Matches"; horizontal axis: Time.]
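The three aggregate methods can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the authors' implementation: it assumes an "ESP match" means two different players submitted the same label within the time constraint, that "1st" means the earliest submitted answer, and that "ESP + 1st" falls back to the earliest answer when no match occurs. The `Answer` type and all function names are hypothetical.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Answer:
    player: str   # hypothetical player identifier
    label: str    # extracted entity, e.g. "Las Vegas"
    time: float   # seconds elapsed since the task started


def _in_time(answers: List[Answer], time_limit: float) -> List[Answer]:
    """Answers submitted within the time constraint, earliest first."""
    return sorted((a for a in answers if a.time <= time_limit),
                  key=lambda a: a.time)


def first_only(answers: List[Answer], time_limit: float) -> Optional[str]:
    """1st Only (assumed): return the earliest answer, if any."""
    valid = _in_time(answers, time_limit)
    return valid[0].label if valid else None


def esp_only(answers: List[Answer], time_limit: float) -> Optional[str]:
    """ESP Only (assumed): return the first label that two different
    players agree on; None stands for the empty label."""
    first_submitter = {}
    for a in _in_time(answers, time_limit):
        if a.label in first_submitter and first_submitter[a.label] != a.player:
            return a.label
        first_submitter.setdefault(a.label, a.player)
    return None


def esp_plus_first(answers: List[Answer], time_limit: float) -> Optional[str]:
    """ESP + 1st (assumed): prefer an ESP match, else the earliest answer."""
    matched = esp_only(answers, time_limit)
    return matched if matched is not None else first_only(answers, time_limit)
```

Under these assumptions, ESP Only trades coverage for agreement (it can return nothing), 1st Only always answers as soon as anyone responds, and ESP + 1st only returns nothing when no player answers in time.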

- 12. Experiment
      - Data
        - Airline Travel Information System (ATIS)
          - Class A: Context Independent (simple)
          - Class D: Context Dependent (complex)
          - Class X: Unevaluable (complex)
      - Settings
        - Focus on the toloc.city_name slot
        - Number of workers = 10
        - Time constraint = 15 and 20 seconds
        - 3 aggregate methods
        - Using Amazon Mechanical Turk

- 13. Simple Queries (Class A): ESP + 1st has the best quality; 1st Only has the best speed; a 20-second time constraint gives better quality than 15 seconds at similar speed.

- 14. Trade-Offs on Simple Queries (Class A). [Figure, three panels: (a) Avg. Response Time (sec) vs. # Players: "More Players, Faster"; (b) F1-score vs. # Players: "More Players, Better Result"; (c) F1-score vs. Avg. Response Time (sec) for 5 to 10 players, comparing ESP + 1st (20 sec) and 1st Only (20 sec): "Faster, Worse Result". Series in (a) and (b): ESP + 1st (20 sec), ESP + 1st (15 sec), 1st (20 sec), 1st (15 sec).]
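For reference, the F1-score on the quality axis is the harmonic mean of precision and recall. The sketch below shows one way to compute it for a single slot such as toloc.city_name. The scoring details are assumptions, not taken from the slides: labels are compared case-insensitively as whole strings, and `None` stands for an empty label. The function name `slot_f1` is hypothetical.

```python
from typing import List, Optional


def slot_f1(predicted: List[Optional[str]], gold: List[Optional[str]]) -> float:
    """F1 over one slot, with one prediction and one gold label per query."""
    if len(predicted) != len(gold):
        raise ValueError("predicted and gold must have the same length")

    # A prediction is correct when both labels exist and match (assumed rule).
    correct = sum(
        1 for p, g in zip(predicted, gold)
        if p is not None and g is not None
        and p.strip().lower() == g.strip().lower()
    )
    n_predicted = sum(1 for p in predicted if p is not None)
    n_gold = sum(1 for g in gold if g is not None)
    if correct == 0 or n_predicted == 0 or n_gold == 0:
        return 0.0

    precision = correct / n_predicted   # share of returned labels that are right
    recall = correct / n_gold           # share of gold labels that were found
    return 2 * precision * recall / (precision + recall)
```

With this definition, an empty label lowers recall but not precision, which is one reason an agreement-only method and a fallback method can score differently on the same answers.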

- 15. On Complex Queries (Class D & X): F1-score = 0.8 (Class D); 5 to 8 seconds (1st / ESP+1st). [Figure: two bar charts, F1-score and Response Time (sec); one label reads "Automatic".]

- 16. Now we know… How fast? 5 to 8 seconds. How many players? 10 players! (5 is also fine.) How good? F1 = 0.8 in Class D, F1 = 0.9 in Class A. Trade-offs? Yes.

- 17. Eatity System
      - Extracting food entities from user messages
      - Accuracy(Food) = 78.89%, Accuracy(Drink) = 83.33% (in-lab study, 150 messages)

- 18. When to Use it?
      - As a backup / support for automated annotators
        - One player can be an automated annotator
        - Low-confidence or failed cases / Validation
      - Crowd-powered Systems
        - Deployed Chorus: TalkingToTheCrowd.org
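The "backup for automated annotators" use case might look like the following sketch, which covers only the low-confidence or failed-case path. Everything here is hypothetical: the function names, the `(label, confidence)` return shape of the automated annotator, and the 0.5 threshold are assumptions made for illustration.

```python
from typing import Callable, Optional, Tuple

# Hypothetical interfaces: an automated annotator returns (label, confidence);
# the crowd extractor returns a label or None.
AutoAnnotator = Callable[[str], Tuple[Optional[str], float]]
CrowdExtractor = Callable[[str], Optional[str]]


def extract_with_crowd_backup(
    query: str,
    auto_annotator: AutoAnnotator,
    crowd_extractor: CrowdExtractor,
    min_confidence: float = 0.5,  # assumed threshold, tune per application
) -> Optional[str]:
    """Use the automated label when it is confident enough; otherwise
    (low confidence or no label at all) ask the crowd."""
    label, confidence = auto_annotator(query)
    if label is not None and confidence >= min_confidence:
        return label
    return crowd_extractor(query)
```

The other option on the slide, treating the automated annotator as one of the players, would instead feed its answer into the aggregation step alongside the human answers.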

- 19. How about having humans do it?

- 20. Thank you! @windx0303
      - Ting-Hao (Kenneth) Huang, Carnegie Mellon University, KennethHuang.cc
      - Jeffrey P. Bigham, Carnegie Mellon University, www.JeffreyBigham.com
      - Yun-Nung Chen, National Taiwan University, VivianChen.idv.tw

- 22. How about having humans do it? (Ling Tung University, 35th 2016 Young Designers Exhibition, Taiwan: https://www.facebook.com/nownews/videos/10153864340447663/)

- 23. Why always pick the 1st? Because they are better. [Figure: F1-score vs. Input Order (i), for 10 Players, 4 Players, and 2 Players.]