Kafka

Kafka Introduction with Kafka topics, Kafka API, Kafka MySQL

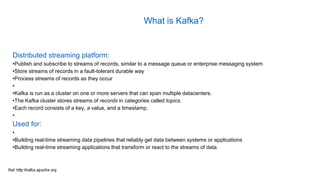

- 1. What is Kafka?

  Distributed streaming platform:
  - Publish and subscribe to streams of records, similar to a message queue or enterprise messaging system
  - Store streams of records in a fault-tolerant, durable way
  - Process streams of records as they occur

  Kafka is run as a cluster on one or more servers that can span multiple datacenters. The Kafka cluster stores streams of records in categories called topics. Each record consists of a key, a value, and a timestamp.

  Used for:
  - Building real-time streaming data pipelines that reliably get data between systems or applications
  - Building real-time streaming applications that transform or react to the streams of data

- 2. Kafka API

- 3. Kafka API
  - The Producer API: Allows an application to publish a stream of records to one or more Kafka topics.
  - The Consumer API: Allows an application to subscribe to one or more topics and process the stream of records produced to them.
  - The Streams API: Allows an application to act as a stream processor, consuming an input stream from one or more topics and producing an output stream to one or more output topics, effectively transforming the input streams to output streams.
  - The Connector API: Allows building and running reusable producers or consumers that connect Kafka topics to existing applications or data systems. For example, a connector to a relational database might capture every change to a table.
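The roles of the Producer, Consumer, and Streams APIs can be sketched with a toy in-memory stand-in. This is not the Kafka client API: `topics`, `produce`, `consume`, and `uppercase_stream` are hypothetical names used only for illustration, and a real application would use a Kafka client library against a running cluster.

```python
from collections import defaultdict

# Hypothetical in-memory stand-in for a cluster: topic name -> list of (key, value).
topics = defaultdict(list)

def produce(topic, key, value):
    """Producer role: publish one record to a topic."""
    topics[topic].append((key, value))

def consume(topic, start=0):
    """Consumer role: read a topic's records from a given position."""
    return topics[topic][start:]

def uppercase_stream(input_topic, output_topic):
    """Stream-processor role: read an input topic, transform each record,
    and write the result to an output topic."""
    for key, value in consume(input_topic):
        produce(output_topic, key, value.upper())

produce("greetings", "k1", "hello")
produce("greetings", "k2", "world")
uppercase_stream("greetings", "greetings-upper")
print(consume("greetings-upper"))
```

The input topic is left unchanged by the transformation; the stream processor only adds records to the output topic.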

- 4. Kafka Communication Protocol: TCP

  Communication between clients and servers uses a TCP protocol that is:
  - Simple
  - High-performance
  - Language agnostic

  A Java client is provided with Kafka. Clients are also available for: C/C++, Python, Go (AKA golang), Erlang, .NET, Clojure, Ruby, Node.js, Proxy (HTTP REST, etc.), Perl, stdin/stdout, PHP, Rust, Alternative Java, Storm, Scala DSL, Swift.

- 5. Kafka Topics
  - A topic is a category or feed name to which records are published.
  - Topics in Kafka are always multi-subscriber.
  - A topic can have zero, one, or many consumers that subscribe to the data written to it.
  - For each topic, the Kafka cluster maintains a partitioned log.
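A minimal sketch of the multi-subscriber property, assuming a single-partition topic held in memory. `Topic` and `Subscriber` are hypothetical classes, not Kafka types; the point is that each subscriber keeps its own read position, so one subscriber's reads never remove data for another.

```python
class Topic:
    """Hypothetical single-partition topic: an append-only list of records."""

    def __init__(self):
        self.log = []

    def publish(self, record):
        self.log.append(record)


class Subscriber:
    """Tracks its own position in the topic's log."""

    def __init__(self, topic):
        self.topic = topic
        self.position = 0

    def poll(self):
        """Return every record not yet seen by this subscriber."""
        records = self.topic.log[self.position:]
        self.position = len(self.topic.log)
        return records


topic = Topic()
topic.publish("a")            # publishing works with zero subscribers
billing = Subscriber(topic)
audit = Subscriber(topic)
topic.publish("b")

billing_first = billing.poll()
topic.publish("c")
audit_all = audit.poll()
billing_second = billing.poll()
print(billing_first, audit_all, billing_second)
```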

- 6. Kafka Partitions
  - Each partition: An ordered, immutable sequence of records that is continually appended to, i.e. a structured commit log.
  - Offset: Each record in a partition is assigned a sequential id number called the offset, which uniquely identifies the record within that partition.
  - Retention: Records are kept for a configurable retention period. If the retention policy is set to two days, a record is available for consumption for the two days after it is published, after which it is discarded to free up space.
  - Performance: Effectively constant with respect to data size, so storing data for a long time is not a problem.
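The offset and retention behavior described above can be sketched as an in-memory append-only log. `Record`, `Partition`, `append`, `expire`, and `read` are hypothetical names, and timestamps are plain seconds passed in by the caller; this illustrates the idea and is not how Kafka stores data on disk.

```python
from dataclasses import dataclass, field


@dataclass
class Record:
    offset: int
    timestamp: float
    key: str
    value: str


@dataclass
class Partition:
    retention_seconds: float
    records: list = field(default_factory=list)
    next_offset: int = 0

    def append(self, key, value, now):
        """Append a record at the end and return its offset."""
        record = Record(self.next_offset, now, key, value)
        self.records.append(record)
        self.next_offset += 1
        return record.offset

    def expire(self, now):
        """Discard records older than the retention period.
        Offsets of the surviving records do not change."""
        self.records = [
            r for r in self.records
            if now - r.timestamp <= self.retention_seconds
        ]

    def read(self, from_offset):
        """Return records whose offset is at least from_offset."""
        return [r for r in self.records if r.offset >= from_offset]


TWO_DAYS = 2 * 24 * 60 * 60  # 172800 seconds

p = Partition(retention_seconds=TWO_DAYS)
p.append("user-1", "login", now=0)
p.append("user-2", "login", now=100_000)
p.expire(now=180_000)          # the first record is now older than two days
p.append("user-1", "logout", now=180_000)

remaining = [r.offset for r in p.read(0)]
print(remaining)
```

Offsets keep increasing after old records are discarded, so a consumer that remembers an offset can resume from it as long as that record is still within the retention period.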

- 7. Kafka Consumer Group
  - Each consumer in the group is assigned a set of partitions to consume from, so each consumer receives messages from a different subset of the partitions in the topic. Kafka guarantees that a message is read by only a single consumer in the group.
  - The number of partitions limits the maximum parallelism of consumers: a group cannot have more active consumers than partitions, and any extra consumers sit idle.
  - Kafka only provides a total order over records within a partition, not between different partitions in a topic. Per-partition ordering combined with the ability to partition data by key is sufficient for most applications. However, if you require a total order over records, this can be achieved with a topic that has only one partition, though this means only one consumer process per consumer group.
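A minimal sketch of the two ideas on this slide: partitioning records by key, and assigning partitions to the consumers of one group. Both functions are hypothetical. `partition_for` uses CRC32 as a stand-in hash (Kafka's default partitioner uses a different hash function), and `assign` is a simple round-robin, which is only one possible assignment strategy.

```python
import zlib


def partition_for(key, num_partitions):
    """Map a key to a partition. Records with the same key always land in
    the same partition, which preserves their relative order."""
    return zlib.crc32(key.encode("utf-8")) % num_partitions


def assign(partitions, consumers):
    """Round-robin assignment: each partition goes to exactly one consumer
    in the group."""
    assignment = {c: [] for c in consumers}
    for i, p in enumerate(partitions):
        assignment[consumers[i % len(consumers)]].append(p)
    return assignment


partitions = [0, 1, 2]
two_consumers = assign(partitions, ["c1", "c2"])
four_consumers = assign(partitions, ["c1", "c2", "c3", "c4"])
print(two_consumers)
print(four_consumers)
```

With four consumers and three partitions, `c4` receives an empty assignment, which is the idle-consumer case: adding consumers beyond the partition count does not increase parallelism.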

- 8. Kafka At Yelp with MySQL

- 9. MySQL Kafka and Flat File