PPT OpenMRS 1

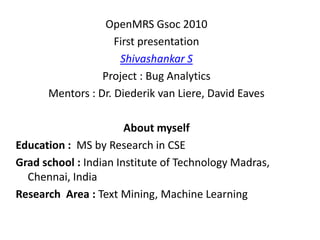

- 1. OpenMRS GSoC 2010: First presentation • Presenter: Shivashankar S • Project: Bug Analytics • Mentors: Dr. Diederik van Liere, David Eaves • About myself: Education: MS by Research in CSE; Graduate school: Indian Institute of Technology Madras, Chennai, India; Research area: Text Mining, Machine Learning

- 2. Roadmap • Overview • Deliverables • Duplicate report identification • Expert identification • Current progress & Challenges • Results

- 3. Project Overview • Fixing bugs quickly is crucial to keeping any open source project active and alive. • High-level flow: when a new report arrives, it is assigned to an expert, who then resolves the report by fixing the bug. • Issue 1: Organizations rely on a triage team to assign reports to experts manually. • Issue 2: If a report is eventually resolved as a duplicate, the time the expert spent on it is wasted. • The aim of the “Bug Analytics” project is to address both issues using the text of the reports. • The bug tracking tool of choice is JIRA.

- 4. Deliverables • A JIRA plug-in that can do the following: – Identify duplicate tickets – Automatically assign reports to experts – Classify a report as a bug or not – Estimate the likelihood of a bug report being fixed. Note: Since these tasks are treated individually in the literature, they will be tackled in the order listed [the last two depend on available time].

- 5. Duplicate report identification

  | | Semi-automated approach | Automated approach |
  |---|---|---|
  | Method | Predict the top “K” similar reports for each report and leave it to the administrator to decide whether it is a duplicate | Fix a similarity threshold and flag a report as a duplicate if its similarity with any report in the DB exceeds the threshold |
  | Pros | No false alarms; the similar reports returned can also help improve the report description, analyze similar bugs, come up with a fix, etc. | Less human intervention |
  | Cons | More human intervention than the automated approach | False alarms |
  | Reference | Hiew, L. and Murphy, G. C. Assisted Detection of Duplicate Bug Reports. Submitted to FSE 2006. | Runeson, P., Alexandersson, M., and Nyholm, O. 2007. Detection of Duplicate Defect Reports Using Natural Language Processing. In Proc. 29th International Conference on Software Engineering, pp. 499-510, May 20-26, 2007. |

  We should decide on the final approach based on the experimental results.

- 6. Expert identification

  | | Semi-automated approach | Automated approach |
  |---|---|---|
  | Method | Predict the top “K” experts for each report and leave it to the administrator, or to the top “K” experts themselves, to assign the report to one of them | Assign the report to exactly one expert |
  | Pros | Even if one expert is busy, others can take it up | If the prediction is good, no manual effort is needed |
  | Cons | Some protocol or mechanism must be put in place to pick one of the “K” experts | If the prediction is incorrect or the assigned person is overloaded, manual triaging is required |

  References: [1] Anvik, J., Hiew, L., and Murphy, G. C. 2006. Who should fix this bug? In Proc. ICSE. [2] Anvik, J. and Murphy, G. C. 2007. Determining Implementation Expertise from Bug Reports. In Proc. Fourth International Workshop on Mining Software Repositories.

  We should decide on the final approach based on the experimental results.

- 7. Training set • Duplicate report prediction: This task needs a validation set, both to fix the threshold in the automated approach and to evaluate the automated and semi-automated approaches. We built it using the resolution field (resolution = “Duplicate”). • Expert prediction: Here, creating the training set is not straightforward, since the “assigned-to” field is not an exact indicator of the expert for a report. – So a set of heuristics is employed, as was done for other projects in http://www.cs.ubc.ca/labs/spl/projects/bugTriage/assignment/heuristics.html. They are given on the following slide.
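A minimal sketch of building the duplicate validation set from the resolution field. It assumes each report is a plain dict with a `key` and a `resolution`, plus a hypothetical `duplicate_of` field naming the report it duplicates (in JIRA this relation would have to be taken from the issue's links). `build_duplicate_validation_set` is a hypothetical helper, not part of any JIRA API.

```python
def build_duplicate_validation_set(reports):
    """Return (duplicate_key, original_key) pairs for reports resolved as "Duplicate".

    reports: list of dicts with 'key', 'resolution' and, for duplicates,
    a hypothetical 'duplicate_of' key pointing at the original report.
    Pairs whose original is not in the data set are skipped.
    """
    known_keys = {r["key"] for r in reports}
    pairs = []
    for r in reports:
        if r.get("resolution") == "Duplicate" and r.get("duplicate_of") in known_keys:
            pairs.append((r["key"], r["duplicate_of"]))
    return pairs
```

Dropping pairs whose original is missing keeps every validation example answerable: a hit is only possible if the original report is in the collection being searched.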

- 8. Flowchart of the labeling heuristics for the expert training set • Decision points: report Status? (Open; Resolved, Closed, Reopened), Resolution? (Fixed; Duplicate; Won’t fix, Incomplete, Cannot reproduce), and Patch submission OR activity as comments? (Yes/No) • Duplicate: use the labels of the duplicated report • Yes: add the person who has submitted the most patches (else, the person who has commented the most times) as primary expert, and add the other patch submitters, commenters, and the owner as additional labels • Other actions in the chart: add the resolver as primary expert; if the report is assigned, label it with the owner, else discard the report
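A minimal sketch of the two most legible branches of the flowchart, under stated assumptions: "Yes" is read as "pick the top patch submitter as primary expert, falling back to the top commenter only when there are no patches", and the other participants plus the owner become additional labels. The function names and argument shapes are hypothetical; the remaining branches (resolver, owner, discard) are not modeled.

```python
from collections import Counter


def label_active_report(patch_submitters, commenters, owner):
    """'Patch submission OR activity as comments = Yes' branch (assumed reading).

    patch_submitters / commenters: one user name per patch / comment.
    Returns (primary_expert, additional_labels); (None, set()) if there is no activity.
    """
    if patch_submitters:
        primary = Counter(patch_submitters).most_common(1)[0][0]
    elif commenters:
        primary = Counter(commenters).most_common(1)[0][0]
    else:
        return None, set()
    # Everyone else who took part, plus the owner, becomes an additional label.
    additional = set(patch_submitters) | set(commenters)
    if owner:
        additional.add(owner)
    additional.discard(primary)
    return primary, additional


def label_duplicate_report(duplicated_key, labels_by_key):
    """'Duplicate' branch: reuse the labels of the duplicated report."""
    return labels_by_key.get(duplicated_key, set())
```

Counting patches first and comments only as a fallback mirrors the "(ELSE)" in the chart: a patch is treated as stronger evidence of expertise than a comment.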

- 9. Current Progress • Working code that performs duplicate report identification and expert identification, with results comparable to state-of-the-art approaches. • The next step is to improve the results through closer analysis and to work on the JIRA plug-in. Challenges • Noisy text: short forms, spelling mistakes • Making proper use of stack traces and log information • Synonymous terms: not everyone uses the same word for the same concept.

- 10. Duplicate identification • TF and TF-IDF vectors are constructed from the Summary, Description and Comments text; the variants with and without comments are referred to as SDC and SD, respectively. • In the semi-automated approach, the top “K” similar reports are returned for each report. – A hit is counted when the actual duplicate report is present in the top “K”. – The results are plotted as “K” vs. hit ratio. – TF-IDF on SD gives the best results.
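A minimal sketch of the semi-automated evaluation, assuming lower-cased whitespace tokenization, TF-IDF weights of raw count × log(N / df), and cosine similarity; the slides do not specify the weighting variant or the similarity measure, so these are assumptions. All function names and the report dict layout (`summary`, `description`, `comments`) are hypothetical.

```python
import math
from collections import Counter


def report_tokens(report, with_comments):
    """Tokens of Summary + Description (SD), plus Comments when with_comments (SDC)."""
    fields = [report["summary"], report["description"]]
    if with_comments:
        fields += report.get("comments", [])
    return " ".join(fields).lower().split()


def tfidf_vectors(docs):
    """Sparse TF-IDF vectors (term -> count * log(N / df)) for a list of token lists."""
    n = len(docs)
    df = Counter(term for doc in docs for term in set(doc))
    return [{t: tf * math.log(n / df[t]) for t, tf in Counter(doc).items()} for doc in docs]


def cosine(u, v):
    """Cosine similarity of two sparse vectors stored as dicts."""
    dot = sum(w * v.get(t, 0.0) for t, w in u.items())
    nu = math.sqrt(sum(w * w for w in u.values()))
    nv = math.sqrt(sum(w * w for w in v.values()))
    return dot / (nu * nv) if nu and nv else 0.0


def top_k_similar(i, vectors, k):
    """Indices of the k reports most similar to report i, excluding i itself."""
    scored = sorted(((cosine(vectors[i], v), j) for j, v in enumerate(vectors) if j != i),
                    reverse=True)
    return [j for _, j in scored[:k]]


def hit_ratio_at_k(vectors, duplicate_pairs, k):
    """Fraction of (duplicate, original) index pairs whose original is in the duplicate's top k."""
    hits = sum(1 for dup, orig in duplicate_pairs if orig in top_k_similar(dup, vectors, k))
    return hits / len(duplicate_pairs)
```

Plain TF vectors can be compared the same way by using `dict(Counter(doc))` instead of `tfidf_vectors`; evaluating `hit_ratio_at_k` for K = 1, 2, ... gives the “K” vs. hit ratio curve.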

- 12. Automated approach • A report whose similarity with any other report in the DB exceeds the threshold is flagged as a duplicate. • Otherwise, it is labeled unique. • For reports flagged as duplicates, the top “K” similar reports above the threshold are examined. – If the actual duplicated report is among them, it is a hit for the duplicate case. • Conversely, if a report is correctly classified as unique, it is a hit for the unique case. • Plots of threshold vs. hit ratio are drawn for both the duplicate and unique cases.
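A minimal sketch of the threshold evaluation described above, assuming a precomputed n × n similarity matrix and, for each report, either the index of the report it duplicates or `None` if it is unique. It also assumes that a true duplicate which is not flagged counts as a miss, and that every report is compared with all others (a deployed system would compare only with reports already in the DB). `threshold_hit_ratios` is a hypothetical name.

```python
def threshold_hit_ratios(sim, truth, threshold, k):
    """Return (duplicate_hit_ratio, unique_hit_ratio) for one threshold.

    sim: n x n similarity matrix (list of lists).
    truth[i]: index of the report that i duplicates, or None if i is unique.
    """
    dup_hits = dup_total = uniq_hits = uniq_total = 0
    for i, row in enumerate(sim):
        # Other reports whose similarity exceeds the threshold, most similar first.
        above = sorted(((s, j) for j, s in enumerate(row) if j != i and s > threshold),
                       reverse=True)
        flagged = bool(above)
        if truth[i] is None:
            uniq_total += 1
            uniq_hits += int(not flagged)  # correctly left unflagged
        else:
            dup_total += 1
            top_k = [j for _, j in above[:k]]
            dup_hits += int(truth[i] in top_k)  # actual original among the top K
    dup_ratio = dup_hits / dup_total if dup_total else 0.0
    uniq_ratio = uniq_hits / uniq_total if uniq_total else 0.0
    return dup_ratio, uniq_ratio
```

Sweeping the threshold, e.g. `[threshold_hit_ratios(sim, truth, t / 10, k=5) for t in range(11)]`, yields the two “threshold vs. hit ratio” curves; raising the threshold trades duplicate hits for unique hits.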

- 13. Automated approach – SDC

- 14. Automated approach – SD

- 15. Expert classification • The following methods are used: – Maximum likelihood (ML) prediction using BRKNN (binary relevance KNN) – Maximum a posteriori (MAP) prediction using BRKNN – Component-wise ML prediction using BRKNN – Component-wise MAP prediction using BRKNN (best results) • With a smaller “K” and fewer experts returned, precision is high. • Conversely, with a larger “K” and more experts returned, recall is high.
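A minimal sketch of binary relevance KNN expert ranking, assuming cosine similarity between sparse report vectors and scoring each expert by the fraction of the K nearest neighbours labeled with that expert (an ML-style vote). A MAP variant, as in ML-kNN, would instead combine these neighbour counts with label priors and count likelihoods estimated from the training set; that is not shown. "Component-wise" is assumed here to mean that only reports from the same JIRA component are used as neighbours. `rank_experts` and the `(vector, experts, component)` layout of `train` are hypothetical.

```python
import math
from collections import Counter


def cosine(u, v):
    """Cosine similarity of two sparse vectors stored as dicts."""
    dot = sum(w * v.get(t, 0.0) for t, w in u.items())
    nu = math.sqrt(sum(w * w for w in u.values()))
    nv = math.sqrt(sum(w * w for w in v.values()))
    return dot / (nu * nv) if nu and nv else 0.0


def rank_experts(query, train, k, n_experts, component=None):
    """Rank experts for one report with a binary-relevance KNN vote.

    query: sparse vector (dict) of the new report.
    train: list of (vector, set_of_experts, component) for labeled reports.
    component: if given, only training reports from that component are neighbours.
    Returns up to n_experts (expert, score) pairs, where score is the
    fraction of the k nearest neighbours labeled with that expert.
    """
    pool = [r for r in train if component is None or r[2] == component]
    neighbours = sorted(pool, key=lambda r: cosine(query, r[0]), reverse=True)[:k]
    if not neighbours:
        return []
    # Binary relevance: each expert label is voted on independently.
    votes = Counter(e for _, experts, _ in neighbours for e in experts)
    scores = {e: c / len(neighbours) for e, c in votes.items()}
    ranked = sorted(scores.items(), key=lambda item: (-item[1], item[0]))
    return ranked[:n_experts]
```

Small `k` and small `n_experts` return only experts with strong neighbour support (higher precision); increasing either returns more candidates (higher recall), matching the trade-off stated on the slide.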

- 16. Precision value for returning 1 expert

- 17. Recall value for returning 1 expert

- 18. Precision value for returning 2 experts

- 19. Recall value for returning 2 experts

- 20. Precision value for returning 3 experts

- 21. Recall value for returning 3 experts
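The precision and recall values plotted in slides 16-21 can be computed as sketched below, assuming per-report precision = |returned ∩ actual| / |returned| and recall = |returned ∩ actual| / |actual|, macro-averaged over reports; the slides do not state the averaging scheme, so that part is an assumption. `precision_recall_at_n` is a hypothetical name.

```python
def precision_recall_at_n(predictions, truths):
    """Macro-averaged precision and recall when returning N experts per report.

    predictions: list of lists, the N experts returned for each report.
    truths: list of sets, the actual experts for each report.
    """
    p_sum = r_sum = 0.0
    for predicted, actual in zip(predictions, truths):
        hits = len(set(predicted) & actual)
        p_sum += hits / len(predicted) if predicted else 0.0
        r_sum += hits / len(actual) if actual else 0.0
    n = len(truths)
    return p_sum / n, r_sum / n
```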