The myth of the scientific method

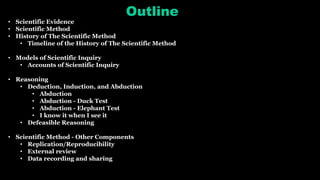

- 1. Outline • Scientific Evidence • Scientific Method • History of The Scientific Method • Timeline of the History of The Scientific Method • Models of Scientific Inquiry • Accounts of Scientific Inquiry • Reasoning • Deduction, Induction, and Abduction • Abduction • Abduction - Duck Test • Abduction - Elephant Test • I know it when I see it • Defeasible Reasoning • Scientific Method - Other Components • Replication/Reproducibility • External review • Data recording and sharing

- 2. Outline • Ad hoc hypothesis • Inflation (cosmology) • Ad hoc hypothesis current examples • Theory-ladenness • Duhem–Quine thesis • Confirmation Holism • Bundle up your Hypotheses • Bundle up your Hypotheses - example • Auxiliary Hypotheses • Auxiliary Hypotheses – example • Observational Equivalence • Observational Equivalence - Black Holes example • Underdetermination • Empirically Equivalent Theories • Beauty and Empirically Equivalent Theories

- 3. Outline • Commensurability • Commensurability (philosophy of science) • Meta-incommensurability • The Free Parameters of the Standard Model • Renormalization • Criticism • Regularization (physics) • Criticism

- 4. Outline • Bias • Confirmation Bias in Science • Confirmation Bias and General Relativity • Experimenter Bias • 6 Types of Experimenter Bias • Experimenter Bias - Examples • Four Basic Problems Cause All The Cognitive Biases • The Shadow of Bias • Bigotry, Prejudice, and Cultural Bias • Scientific Racism • Justification of Science - Dunning–Kruger Effect • The Anatomy of a Good Apology, According to Science

- 5. Outline • Observer Effect • Observer Effect (physics) • Look-Elsewhere Effect • Pessimistic Induction • But What If We're Wrong?: Thinking About the Present As If It Were the Past • Scientometrics - Half-life of Knowledge • Reproducibility Crisis • Scientific Misconduct • Criticism of Science • Discoveries in Science

- 6. Scientific Evidence • Philosophic versus scientific views of scientific evidence • Concept of "scientific proof" • While the phrase "scientific proof" is often used in the popular media, many scientists have argued that there is really no such thing. For example, Karl Popper once wrote that "In the empirical sciences, which alone can furnish us with information about the world we live in, proofs do not occur, if we mean by 'proof' an argument which establishes once and for ever the truth of a theory."

- 7. Scientific Method • Some philosophers and scientists, such as Lee Smolin and Paul Feyerabend (in his Against Method), have argued that there is no scientific method. • Nola and Sankey remark that "For some, the whole idea of a theory of scientific method is yester-year's debate".

- 8. History of The Scientific Method • An Egyptian medical textbook, the Edwin Smith papyrus (c. 1600 BCE), applies the following components to the treatment of disease: examination, diagnosis, treatment and prognosis. These display strong parallels to the basic empirical method of science and, according to G. E. R. Lloyd, played a significant role in the development of this methodology. • Ibn al-Haytham (Alhazen), 965–1039, Iraq: a polymath, considered by some to be the father of modern scientific methodology due to his emphasis on experimental data and the reproducibility of its results.

- 9. Timeline of the History of The Scientific Method
  - c. 1600 BC: The Edwin Smith Papyrus, an Egyptian surgical textbook, applies examination, diagnosis, treatment and prognosis to injuries, paralleling rudimentary empirical methodology.
  - c. 240 BC: Eratosthenes, best known as the first person to calculate the circumference of the Earth, does so using a measuring system based on stadia, a standard unit of measure at the time. His calculation was remarkably accurate.
  - c. 150 BC: The Book of Daniel describes a clinical trial proposed by Daniel, in which he and his three companions eat only vegetables and drink water for 10 days rather than the royal food and wine.
  - 1021: Alhazen introduces the experimental method and combines observations, experiments and rational arguments in his Book of Optics.
  - c. 1025: Abū Rayhān al-Bīrūnī develops experimental methods for mineralogy and mechanics, and conducts elaborate experiments related to astronomical phenomena.
  - 1027: In The Book of Healing, Avicenna criticizes the Aristotelian method of induction, arguing that "it does not lead to the absolute, universal, and certain premises that it purports to provide", and in its place develops examination and experimentation as a means for scientific inquiry.

- 10. Models of Scientific Inquiry In the philosophy of science, models of scientific inquiry have two functions: first, to provide a descriptive account of how scientific inquiry is carried out in practice, and second, to provide an explanatory account of why scientific inquiry succeeds as well as it appears to do in arriving at genuine knowledge. The search for scientific knowledge extends far back into antiquity. At some point in the past, at least by the time of Aristotle, philosophers recognized that a fundamental distinction should be drawn between two kinds of scientific knowledge: roughly, knowledge that and knowledge why. It is one thing to know that each planet periodically reverses the direction of its motion with respect to the background of fixed stars; it is quite a different matter to know why. Knowledge of the former type is descriptive; knowledge of the latter type is explanatory. It is explanatory knowledge that provides scientific understanding of the world. (Salmon, 1990) "Scientific inquiry refers to the diverse ways in which scientists study the natural world and propose explanations based on the evidence derived from their work."

- 11. Accounts of Scientific Inquiry 1. Classical model 2. Pragmatic model 3. Logical empiricism Classical model The classical model of scientific inquiry derives from Aristotle, who distinguished the forms of approximate and exact reasoning, set out the threefold scheme of abductive, deductive, and inductive inference, and also treated the compound forms such as reasoning by analogy.

- 12. Deduction, Induction, and Abduction • Deductive reasoning (deduction): Deductive reasoning allows deriving b from a only where b is a formal logical consequence of a. In other words, deduction derives the consequences of what is assumed. • Inductive reasoning (induction): Inductive reasoning allows inferring b from a, where b does not follow necessarily from a. a might give us very good reason to accept b, but it does not ensure b. For example, if all swans that we have observed so far are white, we may reasonably infer that all swans are white. We have good reason to believe the conclusion from the premise, but the truth of the conclusion is not guaranteed. (Indeed, it turns out that some swans are black.)

- 13. Abduction Abductive reasoning allows inferring a as an explanation of b. As a result of this inference, abduction allows the precondition a to be abduced from the consequence b. Abductive reasoning (also called abduction, abductive inference or retroduction) is a form of logical inference which goes from an observation to a theory which accounts for the observation, ideally seeking to find the simplest and most likely explanation. In abductive reasoning, unlike in deductive reasoning, the premises do not guarantee the conclusion. One can understand abductive reasoning as "inference to the best explanation". In the 1990s, as computing power grew, the fields of law, computer science, and artificial intelligence research spurred renewed interest in the subject of abduction. Diagnostic expert systems frequently employ abduction.
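
  The idea of inferring "the simplest and most likely explanation" can be sketched in a few lines of Python. This is only an illustrative toy, not how any particular expert system works: the hypothesis table, the `prior` plausibility scores, and the `assumptions` counts are all invented for the example.

  ```python
  def best_explanation(observations, hypotheses):
      """Return the name of the preferred hypothesis that accounts for every
      observation, or None if no hypothesis covers them all."""
      # Keep only hypotheses whose predicted effects include all observations.
      candidates = [
          (name, h) for name, h in hypotheses.items()
          if observations <= h["explains"]
      ]
      if not candidates:
          return None
      # Prefer the most plausible candidate; break ties by fewer assumptions.
      name, _ = max(
          candidates,
          key=lambda item: (item[1]["prior"], -item[1]["assumptions"]),
      )
      return name


  # Hypothetical knowledge base (all numbers are made up for illustration).
  hypotheses = {
      "sprinkler ran": {"explains": {"wet grass"}, "prior": 0.5, "assumptions": 1},
      "it rained": {"explains": {"wet grass", "wet street"}, "prior": 0.3, "assumptions": 1},
      "sprinkler ran and a pipe burst": {
          "explains": {"wet grass", "wet street"}, "prior": 0.01, "assumptions": 2,
      },
  }

  print(best_explanation({"wet grass"}, hypotheses))
  print(best_explanation({"wet grass", "wet street"}, hypotheses))
  ```

  Adding the second observation changes the preferred explanation, which illustrates why the premises of an abductive inference do not guarantee its conclusion.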

- 14. Abduction - Duck Test The Duck test is a humorous term for a form of abductive reasoning. This is its usual expression: If it looks like a duck, swims like a duck, and quacks like a duck, then it probably is a duck. The term was later popularized in the United States by Richard Cunningham Patterson Jr., United States ambassador to Guatemala during the Cold War in 1950, who used the phrase when he accused the Jacobo Arbenz Guzmán government of being Communist. Patterson explained his reasoning as follows: Suppose you see a bird walking around in a farm yard. This bird has no label that says 'duck'. But the bird certainly looks like a duck. Also, he goes to the pond and you notice that he swims like a duck. Then he opens his beak and quacks like a duck. Well, by this time you have probably reached the conclusion that the bird is a duck, whether he's wearing a label or not. Russian Minister of Foreign Affairs Sergey Lavrov used a version of the Duck Test in 2015 in response to allegations that Russian airstrikes in Syria were not targeting terrorist groups, primarily ISIS, but rather West-supported groups such as the Free Syrian Army. When asked to elaborate his definition of 'terrorist groups', he replied: If it looks like a terrorist, if it acts like a terrorist, if it walks like a terrorist, if it fights like a terrorist, it's a terrorist, right?

- 15. Abduction - Elephant Test Similarly, the term elephant test refers to situations in which an idea or thing, "is hard to describe, but instantly recognizable when spotted". The term is often used in legal cases when there is an issue which may be open to interpretation, such as in the case of Cadogan Estates Ltd v Morris, when Lord Justice Stuart-Smith referred to "the well known elephant test. It is difficult to describe, but you know it when you see it". A similar incantation (used however as a rule of exclusion) was invoked by the concurring opinion of Justice Potter Stewart in Jacobellis v. Ohio, 378 U.S. 184 (1964), an obscenity case. He stated that the Constitution protected all obscenity except "hard-core pornography." Stewart opined, "I shall not today attempt further to define the kinds of material I understand to be embraced within that shorthand description; and perhaps I could never succeed in intelligibly doing so. But I know it when I see it, and the motion picture involved in this case is not that."

- 16. I know it when I see it The phrase "I know it when I see it" is a colloquial expression by which a speaker attempts to categorize an observable fact or event, although the category is subjective or lacks clearly defined parameters. The phrase was famously used in 1964 by United States Supreme Court Justice Potter Stewart to describe his threshold test for obscenity in Jacobellis v. Ohio. In explaining why the material at issue in the case was not obscene under the Roth test, and therefore was protected speech that could not be censored, Stewart wrote: I shall not today attempt further to define the kinds of material I understand to be embraced within that shorthand description ["hard-core pornography"], and perhaps I could never succeed in intelligibly doing so. But I know it when I see it, and the motion picture involved in this case is not that. The expression became one of the most famous phrases in the entire history of the Supreme Court. Though "I know it when I see it" is widely cited as Stewart's test for "obscenity", he never used the word "obscenity" himself in his short concurrence. He only stated that he knows what fits the "shorthand description" of "hard-core pornography" when he sees it. Stewart's "I know it when I see it" standard was praised as "realistic and gallant" and an example of candor.

- 17. Defeasible Reasoning In logic, defeasible reasoning is a kind of reasoning that is rationally compelling though not deductively valid. The distinction between defeasibility and indefeasibility may be seen in the context of this joke: During a train trip through the countryside, an engineer, a physicist, and a mathematician observe a flock of sheep. The engineer remarks, "I see that the sheep in this region are white." The physicist offers a correction, "Some sheep in this region are white." And the mathematician responds, "In this region there exist sheep that are white on at least one side." The engineer in this story has reasoned defeasibly; since engineering is a highly practical discipline, it is receptive to generalizations. In particular, engineers cannot and need not defer decisions until they have acquired perfect and complete knowledge. But mathematical reasoning, having different goals, inclines one to account for even the rare and special cases, and thus typically leads to a stance that is indefeasible. Defeasible reasoning is a particular kind of non-demonstrative reasoning, where the reasoning does not produce a full, complete, or final demonstration of a claim. Other kinds of non-demonstrative reasoning are probabilistic reasoning, inductive reasoning, statistical reasoning, abductive reasoning, and paraconsistent reasoning. Defeasible reasoning is also a kind of ampliative reasoning because its conclusions reach beyond the pure meanings of the premises.

- 18. Reasoning The differences between these kinds of reasoning correspond to differences about the conditional that each kind of reasoning uses, and on what premise (or on what authority) the conditional is adopted:
  - Deductive (from meaning postulate, axiom, or contingent assertion): if p then q (i.e., q or not-p)
  - Defeasible (from authority): if p then (defeasibly) q
  - Probabilistic (from combinatorics and indifference): if p then (probably) q
  - Statistical (from data and presumption): the frequency of qs among ps is high (or inference from a model fit to data); hence, (in the right context) if p then (probably) q
  - Inductive (theory formation; from data, coherence, simplicity, and confirmation): (inducibly) "if p then q"; hence, if p then (deducibly-but-revisably) q
  - Abductive (from data and theory): p and q are correlated, and q is sufficient for p; hence, if p then (abducibly) q as cause

  Defeasible reasoning finds its fullest expression in jurisprudence, ethics and moral philosophy, epistemology, pragmatics and conversational conventions in linguistics, constructivist decision theories, and in knowledge representation and planning in artificial intelligence. It is also closely identified with prima facie (presumptive) reasoning (i.e., reasoning on the "face" of evidence), and ceteris paribus (default) reasoning (i.e., reasoning, all things "being equal"). • Commonsense reasoning • Case-based reasoning • Reasoning by default (Default Logic)

- 19. Scientific Method - Other Components • Replication • External review • Data recording and sharing

- 20. Ad hoc hypothesis • In science and philosophy, an ad hoc hypothesis is a hypothesis added to a theory in order to save it from being falsified. • Ad hoc hypothesizing is compensating for anomalies not anticipated by the theory in its unmodified form. Scientists are often skeptical of theories that rely on frequent, unsupported adjustments to sustain them. This is because, if a theorist so chooses, there is no limit to the number of ad hoc hypotheses that they could add. Thus the theory becomes more and more complex, but is never falsified. This is often at a cost to the theory's predictive power, however. Ad hoc hypotheses are often characteristic of pseudoscientific subjects. An ad hoc hypothesis is not necessarily incorrect; in some cases, a minor change to a theory was all that was necessary. For example, Albert Einstein's addition of the cosmological constant to general relativity in order to allow a static universe was ad hoc. Although he later referred to it as his "greatest blunder", it may correspond to theories of dark energy. Another example is the use of inflation in physical cosmology.

- 21. Ad hoc hypothesis Inflation (cosmology) • In physical cosmology, cosmic inflation, cosmological inflation, or just inflation is a theory of exponential expansion of space in the early universe. • The inflationary epoch lasted from 10^-36 seconds after the conjectured Big Bang singularity to sometime between 10^-33 and 10^-32 seconds after the singularity. • Following the inflationary period, the Universe continues to expand, but at a less rapid rate. • Inflation resolves several problems in Big Bang cosmology that were discovered in the 1970s. It explains: 1. why the Universe appears to be the same in all directions (isotropic) and statistically homogeneous (Horizon problem); 2. why the cosmic microwave background radiation is distributed evenly; 3. why the Universe is flat; 4. why no magnetic monopoles have been observed.

- 22. Ad hoc hypothesis Inflation (cosmology) • Since its introduction by Alan Guth in 1980, the inflationary paradigm has become widely accepted. • Nevertheless, many physicists, mathematicians, and philosophers of science have voiced criticisms, claiming untestable predictions and a lack of serious empirical support. • In 1999, John Earman and Jesús Mosterín published a thorough critical review of inflationary cosmology, concluding, "we do not think that there are, as yet, good grounds for admitting any of the models of inflation into the standard core of cosmology." • In order to work, and as pointed out by Roger Penrose from 1986 on, inflation requires extremely specific initial conditions of its own, so that the problem (or pseudo-problem) of initial conditions is not solved: ... • At a conference in 2015, Penrose said that "inflation isn't falsifiable, it's falsified. […] BICEP did a wonderful service by bringing all the Inflation-ists out of their shell, and giving them a black eye." • "Penrose's shocking conclusion, though, was that obtaining a flat universe without inflation is much more likely than with inflation – by a factor of 10 to the googol (10 to the 100) power!" • A recurrent criticism of inflation is that the invoked inflaton field does not correspond to any known physical field, and that its potential energy curve seems to be an ad hoc contrivance to accommodate almost any data obtainable. • Paul Steinhardt, one of the founding fathers of inflationary cosmology, has recently become one of its sharpest critics. • Counter-arguments were presented by Alan Guth, David Kaiser, and Yasunori Nomura and by Andrei Linde, saying that "cosmic inflation is on a stronger footing than ever before".

- 23. Ad hoc hypothesis Giant ring of galaxies scattered during Andromeda flyby challenges Einstein's theory of gravity https://www.yahoo.com/news/giant-ring-galaxies-scattered-during-104035369.html Gravitational Waves May Defeat New Theories of Gravity https://arstechnica.com/science/2017/03/gravitational-waves-may-defeat-new-theories-of-gravity/ New Star System Challenges Einstein's Theory of Relativity http://www.ibtimes.co.uk/new-star-system-challenges-einsteins-theory-relativity-1431233

- 24. Theory-ladenness In the philosophy of science, observations are said to be "theory-laden" when they are affected by the theoretical presuppositions held by the investigator. The thesis of theory-ladenness is most strongly associated with the late 1950s and early 1960s work of Norwood Russell Hanson, Thomas Kuhn, and Paul Feyerabend, and was probably first put forth (at least implicitly) by Pierre Duhem about 50 years earlier. Forms: Two forms of theory-ladenness should be kept separate: 1. The semantic form: the meaning of observational terms is partially determined by theoretical presuppositions; 2. The perceptual form: the theories held by the investigator, at a very basic cognitive level, impinge on the perceptions of the investigator. The former may be referred to as semantic and the latter as perceptual theory-ladenness. In a book showing the theory-ladenness of psychiatric evidence, Massimiliano Aragona (Il mito dei fatti, 2009) distinguished three forms of theory-ladenness. 1. The "weak form" was already affirmed by Popper (it is weak because he maintains the idea of a theoretical progress directed to the truth of scientific theories). 2. The "strong" form was sustained by Kuhn and Feyerabend, with their notion of incommensurability. 3. Feyerabend completely reversed the relationship between observations and theories, introducing an "extra-strong" form of theory-ladenness in which "anything goes".

- 25. Duhem–Quine Thesis • The Duhem–Quine thesis, also called the Duhem–Quine problem, after Pierre Duhem and Willard Van Orman Quine, is that it is impossible to test a scientific hypothesis in isolation, because an empirical test of the hypothesis requires one or more background assumptions (also called auxiliary assumptions or auxiliary hypotheses). • In recent decades the set of associated assumptions supporting a thesis has sometimes been called a bundle of hypotheses. • The Duhem–Quine thesis argues that no scientific hypothesis is by itself capable of making predictions. Instead, deriving predictions from the hypothesis typically requires background assumptions that several other hypotheses are correct. • Although a bundle of hypotheses (i.e. a hypothesis and its background assumptions) as a whole can be tested against the empirical world and be falsified if it fails the test, the Duhem–Quine thesis says it is impossible to isolate a single hypothesis in the bundle. • In "Two Dogmas of Empiricism", Quine presents a much stronger version of underdetermination in science. His theoretical group embraces all of human knowledge, including mathematics and logic. He contemplated the entirety of human knowledge as being one unit of empirical significance. Hence all our knowledge, for Quine, would be epistemologically no different from ancient Greek gods, which were posited in order to account for experience. • Quine even believed that logic and mathematics can also be revised in light of experience, and presented quantum logic as evidence for this. Years later he retracted this position; in his book Philosophy of Logic, he said that to revise logic would be essentially "changing the subject".

- 26. Confirmation Holism In the epistemology of science, confirmation holism, also called epistemological holism, is the view that no individual statement can be confirmed or disconfirmed by an empirical test, but only a set of statements (a whole theory). Duhem's idea was, roughly, that no theory of any type can be tested in isolation but only when embedded in a background of other hypotheses, e.g. hypotheses about initial conditions. Willard Van Orman Quine motivated his holism by extending Pierre Duhem's problem of underdetermination in physical theory to all knowledge claims. Quine thought that this background involved not only such hypotheses but also our whole web-of-belief, which, among other things, includes our mathematical and logical theories and our scientific theories. This last claim is known as the Duhem–Quine thesis. A related claim made by Quine, though contested by some, is that one can always protect one's theory against refutation by attributing failure to some other part of our web-of-belief. In his own words, "Any statement can be held true come what may, if we make drastic enough adjustments elsewhere in the system." (W. V. O. Quine, "Two Dogmas of Empiricism", The Philosophical Review, 60 (1951), pp. 20–43.)

- 27. Confirmation Holism • Total holism vs Partial holism • Semantic holism and confirmational holism

- 28. Bundle up your Hypotheses • As a scientific ideal, we imagine scientists designing studies to test single hypotheses. • After all, this is the whole point of controlling variables in an experiment — to test one possible explanation at a time. • However, in reality, hypotheses are tested in bundles; there is simply no way to isolate a single idea for testing.

- 29. Bundle up your Hypotheses • Imagine using radiometric dating (based on the decay of uranium-238 into lead-206) to estimate the age of a rock. • The test suggests and supports the hypothesis that the rock is 3.8 billion years old. • This might seem to be cut-and-dried, but in fact, there are many other hypotheses hidden within this test, including that: • The half-life of uranium-238 is 4.5 billion years. • This half-life has been constant through time. • The sample rock has not been contaminated by external uranium or lead since the rock originally cooled from magma. • Neither uranium nor lead has leached out of the rock since it formed. • The equipment that we have accurately detects the amounts of uranium and lead in the rock. • If any one of those hypotheses turns out to be inaccurate, then we have a problem. • We could have gotten our test result for a reason other than that the rock is actually 3.8 billion years old. • Our test results depend on all of these hypotheses, not just on the actual age of the rock.
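
  To make the dependence on the bundled hypotheses concrete, here is a minimal Python sketch. It assumes the standard closed-system decay relation t = (t_half / ln 2) · ln(1 + D/P), where D/P is the measured ratio of lead-206 to uranium-238 atoms and the rock is assumed to have contained no lead when it formed. The function names, the simulated measurement, and the two "violations" are illustrative assumptions, not part of the original slides.

  ```python
  import math

  U238_HALF_LIFE_YEARS = 4.5e9  # auxiliary hypothesis taken from the slide


  def age_from_ratio(pb_per_u, half_life_years=U238_HALF_LIFE_YEARS):
      """Age in years from a measured Pb-206/U-238 atom ratio.

      Assumes a closed system, no initial lead, and a constant half-life.
      """
      decay_constant = math.log(2) / half_life_years
      return math.log(1.0 + pb_per_u) / decay_constant


  def ratio_for_age(age_years, half_life_years=U238_HALF_LIFE_YEARS):
      """Pb-206/U-238 ratio expected for a rock of the given age."""
      decay_constant = math.log(2) / half_life_years
      return math.exp(decay_constant * age_years) - 1.0


  # Hypothetical measurement, constructed to match a 3.8-billion-year-old rock.
  measured_ratio = ratio_for_age(3.8e9)

  # All auxiliary hypotheses hold.
  age_ok = age_from_ratio(measured_ratio)

  # Hypothetical violation 1: 10% of the lead leached out of the sample.
  age_leached = age_from_ratio(measured_ratio * 0.9)

  # Hypothetical violation 2: the half-life is really 4.0 billion years.
  age_other_half_life = age_from_ratio(measured_ratio, half_life_years=4.0e9)

  for label, age in [
      ("all hypotheses hold", age_ok),
      ("lead leached out", age_leached),
      ("different half-life", age_other_half_life),
  ]:
      print(f"{label}: {age / 1e9:.2f} billion years")
  ```

  The same measured ratio yields a different age as soon as one auxiliary hypothesis is changed, which is the sense in which the test result depends on the whole bundle.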

- 30. Bundle up your hypotheses • Such auxiliary hypotheses are sometimes called assumptions. • The assumptions of a particular test are all the hypotheses that are assumed to be accurate in order for the test to work as planned. • However, it's important to recognize that these assumptions are really hypotheses in disguise: ideas which themselves could turn out to be accurate or inaccurate. • All tests involve auxiliary hypotheses. • "Now, wait a minute," you might wonder, "If we can't ever isolate a single idea for testing, if all tests have auxiliary hypotheses, then how can the process of science ever give us much confidence in any hypothesis?" • This is not the problem that it might at first seem. • Our auxiliary hypotheses can be checked by independent testing. • Many other tests support the ideas that the half-life of uranium-238 is 4.5 billion years, that this decay rate is constant, etc. • Because those auxiliary hypotheses seem to be pretty accurate based on our other tests, we can have confidence that, in this test, our results really do support the idea that the rock is 3.8 billion years old. • Through continued testing of different groupings of hypotheses, the process of science can home in on the accuracy of individual hypotheses. Nevertheless, when examining the results of a particular test, it is important to recognize all of the auxiliary hypotheses that a test depends upon. • The history of heliocentrism (the idea of a sun-centered solar system) illustrates how incorrect auxiliary hypotheses have led to incorrect conclusions.

- 31. Auxiliary Hypotheses In 1543, Nicolaus Copernicus proposed the then revolutionary idea that the Earth orbits the sun instead of the other way around. With modern observational equipment, this seems patently obvious, but in the 16th century it was not. One way to test the idea was to search for parallax: the apparent shift in the relative position of objects based on the position of the observer. • Demonstrating parallax is easy: hold a pencil vertically at arm's length. • Cover one eye to look at the pencil with just your right eye and then just your left eye. • As you do this, the pencil will seem to jump back and forth relative to the background.

- 32. Auxiliary Hypotheses • Copernicus' contemporaries reasoned that if the Earth were really moving around the sun, it would also be moving with respect to the stars — and so we should observe a stellar parallax. • In other words, the view of the stars from one extreme of Earth's orbit (e.g., the view during the summer solstice) should be a bit like looking at the pencil with your right eye, and the other extreme (e.g., the winter solstice) should be like the view with your left eye: just as the apparent positioning of the pencil and the background objects shift relative to one another, so too should the apparent positioning of near and far stars. • If the Earth were really orbiting the sun, the constellations should look different in the summer (one extreme of the Earth's orbit) than they do in the winter (the other extreme).

- 33. Auxiliary Hypotheses Copernicus' peers and astronomers who came after him looked for this parallax, but found none. The positions of the stars relative to one another seemed to be the same, no matter the time of year. Copernicus' idea was rejected by astronomers of the day for this and many other reasons. So, what went wrong? The astronomers had performed the right test (looking for parallax), but little did they know that two of their auxiliary hypotheses were inaccurate. The astronomers had assumed that: • The stars are not that far away • Their observational tools were sensitive enough to detect parallax

- 34. Auxiliary Hypotheses: The stars are not that far away • When the observer is relatively close to the observed objects, the observed parallax is large and obvious. • But when the observed objects are relatively far away, the parallax is smaller. • You can demonstrate this for yourself. • Go outside and look for two far-off objects, perhaps two distant trees. • Cover your right eye and then your left, as you did for the pencil. • You won't notice much change in the appearance of the trees, though you noticed a big change in the appearance of the pencil and background objects. • The astronomers had assumed that the stars are relatively close to the Earth and hence, that the parallax would be obvious. • But in fact, the stars are quite distant: after our own sun, the nearest star is more than 24 trillion miles away! • The stars actually do exhibit parallax, but they are so far away that it is tiny.
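
  How tiny? A rough Python sketch, assuming the simple geometric relation angle = arctan(baseline / distance). The eye separation (6.5 cm) and arm's length (60 cm) are assumed round numbers for the pencil demonstration; the stellar distance is the 24 trillion miles quoted above, and the baseline is the Earth-sun distance.

  ```python
  import math

  MILES_TO_KM = 1.609344
  AU_KM = 1.495978707e8  # Earth-sun distance, the baseline for stellar parallax
  ARCSEC_PER_RADIAN = math.degrees(1.0) * 3600.0


  def parallax_angle_rad(baseline, distance):
      """Angle subtended by the baseline at the observed object.

      Both arguments must be in the same units.
      """
      return math.atan2(baseline, distance)


  # Pencil at arm's length (assumed: eyes 6.5 cm apart, pencil 60 cm away).
  pencil_deg = math.degrees(parallax_angle_rad(6.5, 60.0))

  # Nearest star after the sun, using the figure of 24 trillion miles.
  star_distance_km = 24e12 * MILES_TO_KM
  star_arcsec = parallax_angle_rad(AU_KM, star_distance_km) * ARCSEC_PER_RADIAN

  print(f"pencil: about {pencil_deg:.1f} degrees")
  print(f"nearest star: about {star_arcsec:.2f} arcseconds")
  print(f"ratio: about {pencil_deg * 3600.0 / star_arcsec:,.0f} to 1")
  ```

  Under these assumptions the pencil shifts by several degrees while the nearest star shifts by less than one arcsecond (1/3600 of a degree), so the parallax was real but far below what 16th century instruments could resolve.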

- 35. Auxiliary Hypotheses: Their observational tools were sensitive enough to detect parallax • The astronomers had assumed that if the parallax was there, they'd be able to observe it. • But the stellar parallax from Earth is so small that it was first measured only in the 19th century (by Friedrich Bessel in 1838), with instruments far more sensitive than those available in Copernicus' day. • Because these two hypotheses turned out to be false, 16th century astronomers came to the wrong conclusion about the parallax test. • They saw their test results as strong evidence that Earth does not orbit the sun, but in fact, with the right equipment, the parallax test (along with many others) suggests that Copernicus was right: the Earth orbits the sun!

- 36. Observational Equivalence Observational equivalence is the property of two or more underlying entities being indistinguishable on the basis of their observable implications. Thus, for example, two scientific theories are observationally equivalent if all of their empirically testable predictions are identical, in which case empirical evidence cannot be used to distinguish which is closer to being correct; indeed, it may be that they are actually two different perspectives on one underlying theory.

- 37. Black Holes
  - Black hole: https://en.wikipedia.org/wiki/Black_hole
  - Black star (semiclassical gravity): https://en.wikipedia.org/wiki/Black_star_(semiclassical_gravity)
  - Dark-energy star: https://en.wikipedia.org/wiki/Dark-energy_star
  - Gravastar: https://en.wikipedia.org/wiki/Gravastar
  - Gravitational Condensate Stars: An Alternative to Black Holes: https://arxiv.org/abs/gr-qc/0109035
  - Fuzzball (string theory): https://en.wikipedia.org/wiki/Fuzzball_(string_theory)
  - Kugelblitz (astrophysics): https://en.wikipedia.org/wiki/Kugelblitz_(astrophysics)
  - Black hole electron: https://en.wikipedia.org/wiki/Black_hole_electron

- 38. Black Holes
  - Objects compared: Black hole; Black star; Dark-energy star; Gravastar (gravitational vacuum star); Gravitational Condensate Stars; Fuzzball (string theory); Kugelblitz; Black hole electron
  - Light: Cannot escape (all eight objects)
  - Singularity: Yes; No; No; No; No; No, replaced with ball of strings; Yes; Yes
  - Event Horizon: Yes; No; Yes; Yes, but not well defined; No; Yes, but on the order of a few Planck lengths, very much like a mist: fuzzy, hence the name "fuzzball"; Yes; No
  - Hawking Radiation: Yes; Yes; Possible; Yes; Yes
  - Information Paradox: Yes; No; No; No; No; No
  - Kugelblitz: In simpler terms, a kugelblitz is a black hole formed from radiation as opposed to matter. According to Einstein's general theory of relativity, once an event horizon has formed, the type of energy that created it no longer matters. A kugelblitz is so hot it surpasses the Planck temperature, the temperature of the universe 5.4×10^-44 seconds after the Big Bang.
  - Black hole electron: In physics, there is a speculative notion that if there were a black hole with the same mass, charge and angular momentum as an electron, it would share some of the properties of the electron.

- 39. Black Holes Physicist Claims to Have Proven Mathematically That Black Holes Do Not Exist https://arxiv.org/abs/1409.1837 • The University of North Carolina’s Laura Mersini-Houghton believes that the reason there is so much uncertainty is because black holes don’t exist. • Her paper has been submitted to ArXiv, but has not been subjected to peer review. • Earlier this year, she published a paper with approximate solutions in the journal Physics Letters B. However, not everyone is on board with Mersini-Houghton’s conclusions. William Unruh, a theoretical physicist from the University of British Columbia, pointed out some fatal flaws in the paper's argument. • “The [paper] is nonsense,” Unruh said in an email to IFLS. “Attempts like this to show that black holes never form have a very long history, and this is only the latest. They all misunderstand Hawking radiation, and assume that matter behaves in ways that are completely implausible.” • According to Unruh, black holes don’t emit enough Hawking radiation to shrink the mass of the black hole down to where Mersini-Houghton claims in a timely manner. Instead, “it would take 10^53 (1 followed by 53 zeros) times the age of the universe to evaporate,” he explains.

- 40. Black Holes What Hawking meant when he said ‘there are no black holes’ https://arxiv.org/pdf/1401.5761v1.pdf Those words come directly from Hawking’s latest paper, but they are contained within a larger point involving the mechanics of a black hole and its famous “event horizon.” (That’s the area thought to exist around a black hole from which nothing, not even light, can escape.) To be clear, Hawking was not claiming that black holes don’t exist. What Hawking did was propose an explanation to one of the most puzzling problems in theoretical physics: how can black holes exist when they seem to break two fundamental laws of physics, Einstein’s laws of relativity and quantum mechanics? • This question of what ultimately happens to all the stuff drawn into the black hole has become known as “the information paradox.” • Then there’s the complicated “firewall paradox.” • Over the past 40 years, physicists have proposed multiple solutions, forcing the field to rethink black-hole behavior. • In 1992, for example, Leonard Susskind, Larus Thorlacius and John Uglum proposed an idea known as “complementarity.” If you’re confused, you’re not alone, said Matt Strassler, blogger and visiting theoretical physicist at Harvard University. Notably, Hawking’s work has not yet been peer-reviewed, and it contains no equations, so there’s no way to test his new ideas, Polchinski said. Because of that, he added, his statement about black holes can’t yet be considered a breakthrough in science. The problems have everyone in the field confused, Polchinski said, but that confusion is thrilling for physicists. Solving a paradox is the way the field advances, he said. “It’s not so much that there’s a mistake, but somehow, some assumption that we believe about quantum mechanics and gravity is wrong, and we’re trying to figure out what it is,” Polchinski said. 
“It’s confusion, but it’s confusion that we hope makes us ripe for advance.” http://www.pbs.org/newshour/updates/hawking-meant-black-holes/

- 41. Underdetermination In the philosophy of science, underdetermination refers to situations where the evidence available is insufficient to identify which belief one should hold about that evidence. For example, if all that was known was that exactly $10 was spent on apples and oranges, and that apples cost $1 and oranges $2, then one would know enough to eliminate some possibilities (e.g., 6 oranges could not have been purchased), but one would not have enough evidence to know which specific combination of apples and oranges was purchased. In this example, one would say that belief in what combination was purchased is underdetermined by the available evidence.
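
The apples-and-oranges example can be checked by brute force. The following is a minimal sketch in Python; the function name `purchase_combinations` and its parameters are illustrative, and it assumes that buying zero of one fruit is allowed.

```python
def purchase_combinations(total=10, apple_price=1, orange_price=2):
    """Return every (apples, oranges) pair whose cost is exactly `total` dollars."""
    combos = []
    # Try every orange count that does not exceed the budget on its own.
    for oranges in range(total // orange_price + 1):
        remaining = total - oranges * orange_price
        # The rest of the money must be spendable on whole apples.
        if remaining % apple_price == 0:
            combos.append((remaining // apple_price, oranges))
    return combos


combos = purchase_combinations()
print(combos)
# The evidence rules some purchases out (no combination contains 6 oranges) ...
print(any(oranges == 6 for _, oranges in combos))
# ... but several combinations remain compatible with it.
print(len(combos))
```

More than one combination survives, which is the sense in which the evidence underdetermines the belief about what was bought.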

- 42. Underdetermination Quine, one of the most prominent proponents of scientific underdetermination, wrote a famous book that extends it to linguistics, Word and Object. His terms of choice are "inscrutability of reference", which means that parts of a sentence can change what they reference in such a way that the meaning of the sentence as a whole remains the same, and "indeterminacy of translation", which means that there exists no fact of the matter as to whether "radical" (from scratch) translation from one language into another (never before encountered) is correct. Indeed, Quine's ideas are the source of Feyerabend's and Kuhn's "incommensurability" of paradigms. Together with the similarly minded Derrida's "deconstruction" on the continental side, the idea was picked up and extended to cultures by post-modernists at large. If you are looking for such an expansive view, Rorty's Philosophy and the Mirror of Nature should do nicely: "...thinking of the entire culture, from physics to poetry, as a single, continuous, seamless activity in which the divisions are merely institutional and pedagogical... we shall say that all inquiry is interpretation, that all thought is recontextualization... To say that something is better 'understood' in one vocabulary than another is always an ellipsis for the claim that a description in the preferred vocabulary is more useful for a certain purpose." A word of caution. The founding fathers of underdetermination, Quine and Kuhn, walked back their original polemically radical claims. And while social and cultural biases are there, the exuberant post-modernist quest to find them everywhere ended up producing far too much inane nonsense, partly exposed by the Sokal hoax. 
Check out Zammito's A Nice Derangement of Epistemes, which gives a philosophical history of post-modernism from underdetermination and incommensurability to "sociology of scientific knowledge" and ultra-feminism, and to its discrediting in the 1990s. Here is his take on Rorty in particular: "Setting out from Quine, Kuhn, and Davidson, Rorty has executed several elegant turns through Gadamer and Heidegger to come more and more to partner with Derrida... What is left is language and the arbitrary "poetics" of conversation. Rorty dissolves too many distinctions; his new "pragmatism" entails a cavalier disdain for rational adjudication of dispute. There has been a derangement of epistemes. Philosophy of science pursued "semantic ascent" into a philosophy of language so "holistic" as to deny determinate purchase on the world of which we speak. History and sociology of science has become so "reflexive" that it has plunged "all the way down" into the abime of an almost absolute skepticism".

- 43. Empirically Equivalent Theories In The Scientific Image (1980), van Fraassen uses a now-classic example to illustrate the possibility that even our best scientific theories might have empirical equivalents: that is, alternative theories making the very same empirical predictions, and which therefore cannot be better or worse supported by any possible body of evidence. Consider Newton's cosmology, with its laws of motion and gravitational attraction. As Newton himself realized, van Fraassen points out, exactly the same predictions are made by the theory whether we assume that the entire universe is at rest or assume instead that it is moving with some constant velocity in any given direction: from our position within it, we have no way to detect constant, absolute motion by the universe as a whole. Thus, van Fraassen argues, we are here faced with empirically equivalent scientific theories: Newtonian mechanics and gravitation conjoined either with the fundamental assumption that the universe is at absolute rest (as Newton himself believed), or with any one of an infinite variety of alternative assumptions about the constant velocity with which the universe is moving in some particular direction. All of these theories make all and only the same empirical predictions, so no evidence will ever permit us to decide between them on empirical grounds. Van Fraassen's constructive empiricism holds that the aim of science is not to find true theories, but only theories that are empirically adequate: that is, theories whose claims about observable phenomena are all true. Since the empirical adequacy of a theory is not threatened by the existence of another that is empirically equivalent to it, fulfilling this aim has nothing to fear from the possibility of such empirical equivalents.
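
Van Fraassen's Newtonian example can be illustrated numerically. The sketch below is a toy, not anything from the slides: it assumes two point masses on a line, units in which G and both masses equal 1, and arbitrary starting values. It integrates Newtonian gravity once with the system "at rest" and once with a constant velocity added to everything, then compares the one quantity an observer inside the system can measure, the separation between the bodies.

```python
def separations(boost, steps=200, dt=0.001):
    """Separation of two gravitating bodies over time.

    `boost` is a constant velocity added to both bodies, standing in for
    uniform motion of the whole system. Units with G = m1 = m2 = 1 and the
    starting values are assumptions chosen only for illustration.
    """
    x1, x2 = 0.0, 1.0
    v1, v2 = boost, 0.5 + boost
    history = []
    for _ in range(steps):
        r = x2 - x1                # stays positive over this short run
        a1 = 1.0 / (r * r)         # body 1 is pulled toward body 2 (+x)
        a2 = -1.0 / (r * r)        # body 2 is pulled toward body 1 (-x)
        v1 += a1 * dt              # semi-implicit Euler step
        v2 += a2 * dt
        x1 += v1 * dt
        x2 += v2 * dt
        history.append(x2 - x1)
    return history


at_rest = separations(boost=0.0)
moving = separations(boost=1000.0)
max_difference = max(abs(a - b) for a, b in zip(at_rest, moving))
print(max_difference)  # differs only by floating-point rounding
```

Within rounding error the two runs agree on every separation, so no measurement made inside the system distinguishes the "at rest" assumption from the "moving" one.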

- 44. Beauty and Empirically Equivalent Theories Beauty and Revolution in Science By James W. McAllister

- 45. Commensurability Commensurability commonly refers to Commensurability (mathematics). It may also refer to: Commensurability (astronomy) Commensurability (economics), whether economic value can always be measured by money Commensurability (ethics), the commensurability of values in ethics Commensurability (law) Commensurability (philosophy of science) Unit commensurability, a concept in dimensional analysis that concerns conversion of units of measurement

- 46. Commensurability (philosophy of science) The metaphorical application of this mathematical notion specifically to the relation between successive scientific theories became controversial in 1962 after it was popularised by two influential philosophers of science: Thomas Kuhn and Paul Feyerabend. They appeared to be challenging the rationality of natural science and were called, in Nature, “the worst enemies of science”. Kuhn claimed that the Newtonian paradigm is incommensurable with its Cartesian and Aristotelian predecessors in the history of physics, just as Lavoisier's paradigm is incommensurable with Priestley's in chemistry. Kuhn made the dramatic claim that the history of science reveals proponents of competing paradigms failing to make complete contact with each other's views, so that they are always talking at least slightly at cross-purposes. He used incommensurability to attack the idea, prominent among logical positivists and logical empiricists, that comparing theories requires translating their consequences into a neutral observation language. He repeatedly emphasized that incommensurability neither means nor implies incomparability, nor does it make science irrational. • Taxonomic incommensurability • Methodological incommensurability

- 47. Commensurability (philosophy of science) Paul Feyerabend first used the term incommensurable in 1962 in “Explanation, Reduction and Empiricism” to describe the lack of logical relations between the concepts of fundamental theories in his critique of logical empiricists' models of explanation and reduction. By calling two fundamental theories incommensurable, Feyerabend meant that they were conceptually incompatible: the main concepts of one could neither be defined on the basis of the primitive descriptive terms of the other, nor related to them via a correct empirical statement. For example, Feyerabend claimed that the concepts of temperature and entropy in kinetic theory are incommensurable with those of phenomenological thermodynamics (1962, 78), whereas the Newtonian concepts of mass, length and time are incommensurable with those of relativistic mechanics (1962, 80). Both Kuhn and Feyerabend have often been misread as advancing the view that incommensurability implies incomparability. In response to this misreading, Kuhn repeatedly emphasized that incommensurability does not imply incomparability. Finally, there is one central, substantive point of agreement between Kuhn and Feyerabend. Both see incommensurability as precluding the possibility of interpreting scientific development as an approximation to truth (or as an “increase of verisimilitude”). Consequently, neither Kuhn nor Feyerabend can correctly be characterized as scientific realists who believe that science makes progress toward the truth.

- 48. Meta-incommensurability A more general notion of incommensurability has been applied to the sciences at the meta-level in two significant ways. 1. Eric Oberheim and Paul Hoyningen-Huene argue that realist and anti-realist philosophies of science are also incommensurable, thus scientific theories themselves may be meta-incommensurable. 2. Similarly, Nicholas Best describes a different type of incommensurability between philosophical theories of meaning. He argues that if the meaning of a first-order scientific theory depends on its second-order theory of meaning, then two first order theories will be meta-incommensurable if they depend on substantially different theories of meaning. Whereas Kuhn and Feyerabend’s concepts of incommensurability do not imply complete incomparability of scientific concepts, this incommensurability of meaning does.

- 49. The Parameters of the Standard Model The Standard Model has about 18 or 19 free parameters that are not predicted by the theory: 1. The so-called fine structure constant, $\alpha$, which (depending on your point of view) defines either the coupling strength of electromagnetism or the magnitude of the electron charge; 2. The Weinberg angle or weak mixing angle $\theta_W$ that determines the relationship between the coupling constant of electromagnetism and that of the weak interaction; 3. The coupling constant $g_3$ of the strong interaction; 4. The electroweak symmetry breaking energy scale (or the Higgs potential vacuum expectation value, v.e.v.) $v$; 5. The Higgs potential coupling constant $\lambda$ or alternatively, the Higgs mass $m_H$; 6. The three mixing angles $\theta_{12}$, $\theta_{23}$ and $\theta_{13}$ and the CP-violating phase $\delta_{13}$ of the Cabibbo-Kobayashi-Maskawa (CKM) matrix, which determines how quarks of various flavor can mix when they interact; 7. Nine Yukawa coupling constants that determine the masses of the nine charged fermions (six quarks, three charged leptons).

- 50. The Parameters of the Standard Model Beyond the 18 parameters, however, there are a few more. First, $\Theta_3$, which would characterize the CP symmetry violation of the strong interaction. Experimentally, $\Theta_3$ is determined to be very small, its value consistent with zero. But why is $\Theta_3$ so small? One possible explanation involves a new hypothetical particle, the axion, which in turn would introduce a new parameter, the mass scale $f_a$, into the theory. Finally, the canonical form of the Standard Model includes massless neutrinos. We know that neutrinos must have mass, and also that they oscillate (turn into one another), which means that their mass eigenstates do not coincide with their eigenstates with respect to the weak interaction. Thus, another mixing matrix must be involved, which is called the Pontecorvo-Maki-Nakagawa-Sakata (PMNS) matrix. So we end up with three neutrino masses $m_1$, $m_2$ and $m_3$, and the three angles $\theta_{12}$, $\theta_{23}$ and $\theta_{13}$ (not to be confused with the CKM angles above) plus the CP-violating phase $\delta_{CP}$ of the PMNS matrix. So this is potentially as many as 26 or 29 parameters in the Standard Model that need to be determined by experiment. This is quite a long way away from the “holy grail” of theoretical physics, a theory that combines all four interactions, all the particle content, and which preferably has no free parameters whatsoever. Nonetheless the theory, and the level of our understanding of Nature’s fundamental building blocks that it represents, is a remarkable intellectual achievement of our era.

- 51. The Parameters of the Standard Model There are 29 total parameters (or fundamental constants) in nature that we've discovered • 3 gauge couplings • 2 Higgs parameters: the Higgs mass and Higgs vacuum expectation value • 6 quark masses • 3 quark mixing angles • 1 imaginary quark phase • 3 lepton masses • 3 neutrino masses • 3 neutrino mixing angles • 3 imaginary neutrino phases • 1 strong CP parameter • 1 cosmological constant
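
The totals quoted on the three parameter slides (18, 26 and 29) can be reproduced with a simple tally. This is only a bookkeeping sketch in Python: the counts are copied from the slides, and the dictionary keys are shorthand labels invented here.

```python
# Slide 49: parameters of the Standard Model with massless neutrinos.
core = {
    "fine structure constant": 1,
    "weak mixing angle": 1,
    "strong coupling": 1,
    "Higgs v.e.v.": 1,
    "Higgs coupling (or Higgs mass)": 1,
    "CKM mixing angles and phase": 4,
    "Yukawa couplings": 9,
}

# Slide 50: additions for strong CP violation and massive neutrinos.
extensions = {
    "strong CP parameter": 1,
    "neutrino masses": 3,
    "PMNS mixing angles": 3,
    "PMNS CP-violating phase": 1,
}

# Slide 51: the alternative bookkeeping that arrives at 29.
slide_51 = {
    "gauge couplings": 3,
    "Higgs parameters": 2,
    "quark masses": 6,
    "quark mixing angles": 3,
    "quark phase": 1,
    "lepton masses": 3,
    "neutrino masses": 3,
    "neutrino mixing angles": 3,
    "neutrino phases": 3,
    "strong CP parameter": 1,
    "cosmological constant": 1,
}

core_total = sum(core.values())
extended_total = core_total + sum(extensions.values())
slide_51_total = sum(slide_51.values())
print(core_total, extended_total, slide_51_total)
```

On this bookkeeping, the gap between 26 and 29 comes from slide 51 counting three neutrino phases rather than one and adding the cosmological constant.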

- 52. Renormalization Renormalization is a collection of techniques in quantum field theory, the statistical mechanics of fields, and the theory of self-similar geometric structures, that are used to treat infinities arising in calculated quantities by altering values of quantities to compensate for effects of their self-interactions. • When describing space and time as a continuum, certain statistical and quantum mechanical constructions are ill-defined. • Renormalization procedures are based on the requirement that certain physical quantities are equal to the observed values. • Initially viewed as a suspect provisional procedure even by some of its originators, renormalization eventually was embraced as an important and self-consistent actual mechanism of scale physics in several fields of physics and mathematics. Many great physicists criticized renormalization as an incoherent method of neglecting infinities in an arbitrary way. Dirac said in 1975: “I must say that I am very dissatisfied with the situation, because this so-called 'good theory' does involve neglecting infinities which appear in its equations, neglecting them in an arbitrary way. This is just not sensible mathematics.” However, the agreement of the calculations with the observations is the best proof that the methods are correct and essential for all of quantum field theory. People's hostility against renormalization was tamed - or should have been tamed - by the discovery of the Renormalization Group.
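
The requirement that "certain physical quantities are equal to the observed values" can be made concrete with a toy model. The sketch below is not quantum electrodynamics: it assumes a made-up prediction of the form `bare + A * ln(cutoff / energy)`, which blows up as the cutoff is removed, and all names and numbers (`A`, `E_REF`, `OBSERVED_AT_REF`) are hypothetical. Fixing the bare parameter so that the prediction matches one observed value makes every other prediction independent of the cutoff.

```python
import math

A = 0.1                  # strength of the toy logarithmic divergence (assumed)
E_REF = 1.0              # energy at which the quantity has been "measured" (assumed)
OBSERVED_AT_REF = 0.5    # the "measured" value at E_REF (assumed)


def bare_parameter(cutoff):
    """Choose the unobservable bare parameter so the prediction at E_REF
    equals the observed value. It grows without bound as the cutoff grows."""
    return OBSERVED_AT_REF - A * math.log(cutoff / E_REF)


def prediction(energy, cutoff):
    """Toy prediction: bare parameter plus a cutoff-dependent correction."""
    return bare_parameter(cutoff) + A * math.log(cutoff / energy)


cutoffs = [1e3, 1e6, 1e12]
bare_values = [bare_parameter(c) for c in cutoffs]
predictions = [prediction(10.0, c) for c in cutoffs]
print(bare_values)   # depends strongly on the cutoff
print(predictions)   # the same for every cutoff, up to rounding
```

In this toy the cutoff dependence cancels exactly, leaving `OBSERVED_AT_REF + A * ln(E_REF / energy)`; in a real field theory the cancellation has to be established order by order.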

- 53. Renormalization Criticism Many great physicists criticized renormalization as an incoherent method of neglecting infinities in an arbitrary way. Dirac said in 1975: “I must say that I am very dissatisfied with the situation, because this so-called 'good theory' does involve neglecting infinities which appear in its equations, neglecting them in an arbitrary way. This is just not sensible mathematics. Sensible mathematics involves neglecting a quantity when it is small - not neglecting it just because it is infinitely great and you do not want it!” Another important critic was Feynman. Despite his crucial role in the development of quantum electrodynamics, he wrote the following in 1985: “The shell game that we play ... is technically called 'renormalization'. But no matter how clever the word, it is still what I would call a dippy process! Having to resort to such hocus-pocus has prevented us from proving that the theory of quantum electrodynamics is mathematically self-consistent. It's surprising that the theory still hasn't been proved self-consistent one way or the other by now; I suspect that renormalization is not mathematically legitimate.”

- 54. Renormalization Criticism The general unease with renormalization was almost universal in texts up to the 1970s and 1980s. Beginning in the 1970s, however, things started to change. 1. The Renormalization Group was discovered in the 1970s and effective field theory was formulated. 2. Attitudes began to change, especially among younger theorists, despite the fact that Dirac and various others, all of whom belonged to the older generation, never withdrew their criticisms. 3. The agreement of the calculations with the observations is the best proof that the methods are correct and essential for all of quantum field theory. If a theory featuring renormalization (e.g. QED) can only be sensibly interpreted as an effective field theory, i.e. as an approximation reflecting human ignorance about the workings of nature, then the problem remains of discovering a more accurate theory that does not have these renormalization problems. As Lewis Ryder has put it, "In the Quantum Theory, these [classical] divergences do not disappear; on the contrary, they appear to get worse. And despite the comparative success of renormalization theory the feeling remains that there ought to be a more satisfactory way of doing things."

- 55. Regularization (physics) • In physics, especially quantum field theory, regularization is a method of modifying observables which have singularities in order to make them finite by the introduction of a suitable parameter called the regulator. • The regulator, also known as a "cutoff", models our lack of knowledge about physics at unobserved scales (e.g. scales of small size or large energy levels). • It compensates for (and requires) the possibility that "new physics" may be discovered at those scales which the present theory is unable to model, while enabling the current theory to give accurate predictions as an "effective theory" within its intended scale of use. • It is distinct from renormalization, another technique to control infinities without assuming new physics, by adjusting for self-interaction feedback. • Regularization was for many decades controversial even amongst its inventors, as it combines physical and epistemological claims into the same equations. • However, it is now well understood and has proven to yield useful, accurate predictions.

- 56. Regularization (physics) Overview • Regularization procedures deal with infinite, divergent, and nonsensical expressions by introducing an auxiliary concept of a regulator. • Regularization is the first step towards obtaining a completely finite and meaningful result; in quantum field theory it must usually be followed by a related, but independent technique called renormalization. • The existence of a limit as ε goes to zero and the independence of the final result from the regulator are nontrivial facts. • Sometimes, taking the limit as ε goes to zero is not possible. • This is the case when we have a Landau pole and for nonrenormalizable couplings like the Fermi interaction. • However, even for these two examples, we still get pretty accurate approximations within certain scales. • The physical reason why we can't take the limit of ε going to zero is the existence of new physics below Λ. • It is not always possible to define a regularization such that the limit of ε going to zero is independent of the regularization. • In this case, one says that the theory contains an anomaly. Anomalous theories have been studied in great detail and are often founded on the celebrated Atiyah–Singer index theorem or variations thereof (see, for example, the chiral anomaly).
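
The role of the regulator ε can be seen in a standard toy example from mathematics rather than in a field-theory calculation. The sketch below is an illustration chosen here, not taken from the slides: the divergent sum 1 + 2 + 3 + ... is regulated by damping each term with exp(−εn). The regulated sum has the closed form 1 / (4 sinh²(ε/2)), which behaves like 1/ε² − 1/12 for small ε, so a divergent piece can be isolated and a finite remainder survives the limit ε → 0.

```python
import math


def regulated_sum(eps):
    """Closed form of sum_{n>=1} n * exp(-eps * n) for eps > 0."""
    return 1.0 / (4.0 * math.sinh(eps / 2.0) ** 2)


def brute_force_sum(eps, terms=5000):
    """Direct partial sum, used only to sanity-check the closed form."""
    return sum(n * math.exp(-eps * n) for n in range(1, terms + 1))


def finite_part(eps):
    """What is left after removing the piece that diverges as eps -> 0."""
    return regulated_sum(eps) - 1.0 / eps**2


print(abs(regulated_sum(0.1) - brute_force_sum(0.1)))  # closed form matches the sum
for eps in (0.1, 0.03, 0.01):
    print(eps, finite_part(eps))  # approaches -1/12 as eps shrinks
```

The regulated sum itself grows without bound as ε shrinks; only after the 1/ε² piece is separated out does a regulator-independent number remain, which mirrors the two-step "regularize, then renormalize" pattern the slide describes.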

- 57. Regularization (physics) Criticism Dirac was persistently and extremely critical of renormalization procedures. In 1963 he wrote: “… in the renormalization theory we have a theory that has defied all the attempts of the mathematician to make it sound. I am inclined to suspect that the renormalization theory is something that will not survive in the future,…” P.A.M. Dirac. "The Evolution of the Physicist's Picture of Nature". Scientific American, May 1963: 45–53. “One can distinguish between two main procedures for a theoretical physicist. One of them is to work from the experimental basis . . . The other procedure is to work from the mathematical basis. One examines and criticizes the existing theory. One tries to pin-point the faults in it and then tries to remove them. The difficulty here is to remove the faults without destroying the very great successes of the existing theory.” P.A.M. Dirac (1968). "Methods in theoretical physics". Unification of Fundamental Forces by A. Salam (Cambridge University Press, Cambridge 1990): 125–143. The difficulty with a realistic regularization is that so far there is none, although nothing could be destroyed by its bottom-up approach; and there is no experimental basis for it.

- 58. Regularization (physics) Criticism Salam remarked in 1972: "Field-theoretic infinities first encountered in Lorentz's computation of electron have persisted in classical electrodynamics for seventy and in quantum electrodynamics for some thirty-five years. These long years of frustration have left in the subject a curious affection for the infinities and a passionate belief that they are an inevitable part of nature; so much so that even the suggestion of a hope that they may after all be circumvented - and finite values for the renormalization constants computed - is considered irrational." C.J. Isham, A. Salam and J. Strathdee, "Infinity Suppression Gravity Modified Quantum Electrodynamics", Phys. Rev. D5, 2548 (1972). In ’t Hooft’s opinion: “History tells us that if we hit upon some obstacle, even if it looks like a pure formality or just a technical complication, it should be carefully scrutinized. Nature might be telling us something, and we should find out what it is.” G. ’t Hooft, In Search of the Ultimate Building Blocks (Cambridge University Press, Cambridge 1997). • The need for regularization terms in any quantum field theory of quantum gravity is a major motivation for physics beyond the Standard Model. • A. Zee (Quantum Field Theory in a Nutshell, 2003) considers this to be a benefit of the regularization framework: theories can work well in their intended domains but also contain information about their own limitations and point clearly to where new physics is needed.

- 59. Bias Bias is an inclination towards something, or a predisposition, partiality, prejudice, preference, or predilection. Social Sciences • Confirmation bias, the tendency of people to favor information that confirms their beliefs or hypotheses • Cognitive bias, any of a wide range of observer effects identified in cognitive science • See List of cognitive biases for a comprehensive list • Cultural bias, interpreting and judging phenomena in terms particular to one's own culture • Funding bias, bias relative to the commercial interests of a study's financial sponsor • Infrastructure bias, the influence of existing social or scientific infrastructure on scientific observations • Media bias, the influence journalists and news producers have in selecting stories to report and how they are covered • Publication bias, bias towards publication of certain experimental results Mathematics and engineering • Bias (statistics), the systematic distortion of a statistic • Biased sample, a sample falsely taken to be typical of a population • Estimator bias, a bias from an estimator whose expectation differs from the true value of the parameter • Exponent bias, the constant offset of an exponent's value

- 60. Bias • Bias is an inclination towards something, or a predisposition, partiality, prejudice, preference, or predilection. • Biases can be learned implicitly within cultural contexts. • People may develop biases toward or against an individual, an ethnic group, a nation, a religion, a social class, a political party, theoretical paradigms and ideologies within academic domains, or a species. • Bias can come in many forms and is related to prejudice and intuition.

- 61. Bias 1 Cognitive biases 1.1 Anchoring 1.2 Apophenia 1.3 Attribution bias 1.4 Confirmation bias 1.5 Framing 1.6 Halo effect 1.7 Self-serving bias 2 Conflicts of interest 2.1 Bribery 2.2 Favoritism 2.3 Funding bias 2.4 Insider trading 2.5 Lobbying 2.6 Match fixing 2.7 Regulatory issues 2.8 Shilling 3 Statistical biases 4 Contextual biases 4.1 Academic bias 4.2 Educational bias 4.3 Experimenter bias 4.4 Full text on net bias 4.5 Inductive bias 4.6 Media bias 4.7 Publication bias 4.8 Reporting bias & social desirability bias 5 Prejudices 5.1 Classism 5.2 Lookism 5.3 Racism 5.4 Sexism

- 62. Bias - Confirmation Bias • Confirmation bias, also called confirmatory bias or myside bias, is the tendency to search for, interpret, favor, and recall information in a way that confirms one's preexisting beliefs or hypotheses, while giving disproportionately less consideration to alternative possibilities. • It is a type of cognitive bias and a systematic error of inductive reasoning. People display this bias when they gather or remember information selectively, or when they interpret it in a biased way. Types 1. Biased search for information 2. Biased interpretation 3. Biased memory Related effects • Polarization of opinion • Persistence of discredited beliefs • Preference for early information • Illusory association between events • List of biases in judgment and decision making • List of memory biases • List of cognitive bias • Woozle effect • Cherry picking • Selective perception • Observer-expectancy effect

- 63. Bias - Confirmation Bias Affects 1. In finance 2. In physical and mental health 3. In politics and law 4. In the paranormal 5. In science 6. In self-image

- 64. Confirmation Bias and General Relativity • In November of 1919, at the age of 40, Albert Einstein became an overnight celebrity, thanks to a solar eclipse. • An experiment had confirmed that light rays from distant stars were deflected by the gravity of the sun by just the amount he had predicted in his theory of gravity, general relativity. • After Sir Arthur Eddington reported, following expeditions to Sobral and Principe, that starlight had been deflected by the Sun’s gravity by the amount predicted by Albert Einstein’s general relativity, major international newspapers and scientific societies (The New York Times and the Royal Society, among others) congratulated Einstein. • General relativity was the first major new theory of gravity since Isaac Newton's more than 200 years earlier.

- 65. Confirmation Bias and General Relativity Eddington’s methods were anything but a cause for celebration, since he let his personal and political bias influence his work and took advantage of his experiment’s interpretability and his status in the scientific community. Eddington’s motivations to undertake the experiment were not hidden; he was already a supporter of Einstein’s general theory of relativity. Eddington, a British astrophysicist and pacifist, performed this experiment wanting a victory for Einstein, a German physicist and pacifist, because he believed it would signify post-World War I political reconciliation between the UK and Germany. • The rift between Allied and German scholars began when well-known German scientists like Max Planck signed the Manifesto of German Professors and Men of Science, “defending German war policy and action.” • Allied scientists retaliated by condemning and severing their ties with German science. • In response, Eddington wrote that German scientists were simply supporting their country as the Allied ones did theirs, and thus he wrote to end British self-righteousness. In 1919, he came to believe that the eclipse experiments would jump-start reconciliation between the two groups, saying, “It is the best possible thing that could have happened between England and Germany.” He hoped that it would cause his colleagues to be more receptive to German ideas. His perspective is akin to Martin Luther King’s; in essence, his controversial message was, “I have a dream that one day English and German scientists will be able to sit down together at a scholarly meeting without nationalist prejudice.” Eddington maintained that his experiment could show either Newton’s half deflection or Einstein’s full deflection. If the outcome was indeed half deflection, then Eddington would have a twofold problem. 
Newton was English, and his theory had been published more than two hundred years earlier: Eddington and his Royal Astronomical Society hoped that “national prejudice did not prevent [them] from doing anything that [they] could to forward the progress of science” (maintaining the status quo, a two-hundred-year-old English theory, is presumably neither scientific progress nor the kind of seminal work for which the Royal Society presented him a Fellowship). Hence, he was motivated by an ideological desire to end the boycott on German science (and even went as far as to ask for a Gold Medal of the Royal Astronomical Society for Einstein) and “was committed to the theory before the expeditions were proposed.” • Both Newton’s and Einstein’s theories predicted that light from the distant stars would be deflected by the Sun’s gravitational field, but Einstein’s theory predicted a gravitational displacement (1.7″, i.e. 1.7 seconds of arc) twice that predicted by Newton’s theory of gravitation (0.8″). • However, in his calculations, Einstein introduced metrics that “caused confusion among those less adept than he at getting the right answer.” • Eddington fared no better in his text The Mathematical Theory of Relativity, making arguments and assumptions that were accepted only because of his 1919 experiment.
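
The factor of two between the two predictions can be reproduced from the textbook formulas for a light ray grazing the Sun's limb. The formulas and constants below are standard values supplied here as assumptions, not taken from the slides: general relativity gives 4GM/(c²R), and the Newtonian corpuscular calculation gives half of that.

```python
import math

# Rounded physical constants in SI units (assumed standard values).
G = 6.674e-11        # gravitational constant
M_SUN = 1.989e30     # solar mass, kg
R_SUN = 6.957e8      # solar radius, m
C = 2.998e8          # speed of light, m/s

RAD_TO_ARCSEC = math.degrees(1.0) * 3600.0


def deflection_arcsec(factor):
    """Deflection of a ray grazing the Sun, in arcseconds.

    `factor` is 4 for general relativity and 2 for the Newtonian estimate.
    """
    return factor * G * M_SUN / (C**2 * R_SUN) * RAD_TO_ARCSEC


einstein = deflection_arcsec(4)
newton = deflection_arcsec(2)
print(round(einstein, 2), round(newton, 2))
```

The results, roughly 1.75″ and 0.87″, are in line with the rounded 1.7″ and 0.8″ quoted on the slide; the expeditions had to resolve a difference of under one arcsecond.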

- 66. Confirmation Bias and General Relativity In a way, then, Eddington’s measurements did not independently verify Einstein’s predictions. Thus his experiment could only ever prove the predicted value, not the theory, because the derivation from theory to prediction was itself problematic. Yet Eddington never mentioned this; he continually presented a trichotomy of possible results to his expeditions: no deflection, Newton’s half deflection, and Einstein’s full deflection (confirming Einstein’s theory). This did not include other outcomes like too much deflection, strengthening the evidence for any one of his possibilities. Unfortunately, the Sobral and Principe expeditions’ measurements were “not sufficiently accurate” to distinguish between the possibilities: there were too many chances for human error and the instrumentation was not advanced enough to definitively support a prediction. The data could have been interpreted in very different ways; for example, for the Sobral astrographic plates “if it is assumed that the scale has changed, then the […] deflection from the series of plates is 0.90″ [consistent with Newtonian theory]; if it is assumed that no real change of focus occurred, […] the result is 1.56″ [consistent with Einsteinian theory].” Out of the three sets of plates, Eddington discarded the one which yielded a measurement that would have confirmed Newton’s prediction due to “systematic error.” However, he neglected to apply this reasoning to the other two sets and gave no evidence as to why; an American contemporary fittingly wrote that “the logic of the situation does not seem entirely clear.” Nevertheless, he published his study, writing that it leaves “little doubt” that the deflection confirms Einstein’s general theory of relativity. Though Eddington’s study was not truly a conclusive one, why did contemporary scientists not raise their concerns in forums such as the Royal Society? 
They did, but Eddington savvily leveraged his reputation as Fellow of the Royal Society and the positions of his coauthors – for instance, the first author of the 1919 paper and Astronomer Royal, Sir Frank Dyson. According to historian John Waller, few disputed Dyson’s specialized background, which included astrometry and even eclipse expeditions, and thus the majority was not inclined to argue with his interpretation of the data (the majority could not hold their own eclipse expedition anyway).

- 67. Experimenter Bias The observer-expectancy effect (also called the experimenter-expectancy effect, expectancy bias, observer effect, or experimenter effect) is a form of reactivity in which a researcher's cognitive bias causes them to subconsciously influence the participants of an experiment. • The classic example of experimenter bias is that of "Clever Hans" (in German, der Kluge Hans), an Orlov Trotter horse claimed by his owner von Osten to be able to do arithmetic and other tasks. • As a result of the large public interest in Clever Hans, philosopher and psychologist Carl Stumpf, along with his assistant Oskar Pfungst, investigated these claims. • Ruling out simple fraud, Pfungst determined that the horse could answer correctly even when von Osten did not ask the questions. • However, the horse was unable to answer correctly when it could not see the questioner or when the questioner was unaware of the correct answer. • When von Osten knew the answers to the questions, Hans answered correctly 89 percent of the time. However, when von Osten did not know the answers, Hans answered only six percent of questions correctly.

- 68. Experimenter Bias - Clever Hans

- 69. Experimenter Bias - Clever Hans • Clever Hans was an Orlov Trotter horse that was claimed to have been able to perform arithmetic and other intellectual tasks. • Hans was owned by Wilhelm von Osten, who was a gymnasium mathematics teacher, an amateur horse trainer, a phrenologist, and something of a mystic. • Hans was said to have been taught to add, subtract, multiply, divide, work with fractions, tell time, keep track of the calendar, differentiate musical tones, and read, spell, and understand German. • Von Osten would ask Hans, "If the eighth day of the month comes on a Tuesday, what is the date of the following Friday?" Hans would answer by tapping his hoof. • Questions could be asked both orally and in written form. • Von Osten exhibited Hans throughout Germany, and never charged admission. • Hans's abilities were reported in The New York Times in 1904. • After von Osten died in 1909, Hans was acquired by several owners. After 1916, there is no record of him and his fate remains unknown. • After a formal investigation in 1907, psychologist Oskar Pfungst demonstrated that the horse was not actually performing these mental tasks, but was watching the reactions of his human observers. • The horse was responding directly to involuntary cues in the body language of the human trainer, who had the faculties to solve each problem. • The trainer was entirely unaware that he was providing such cues. • The anomalous artifact has since been referred to as the Clever Hans effect and has remained important to the study of the observer-expectancy effect and to later studies in animal cognition.

- 70. Clever Hans Effect Investigation • As a result of the large amount of public interest in Clever Hans, the German board of education appointed a commission to investigate von Osten's scientific claims. • Philosopher and psychologist Carl Stumpf formed a panel of 13 people, known as the Hans Commission. • This commission consisted of a veterinarian, a circus manager, a cavalry officer, a number of school teachers, and the director of the Berlin zoological gardens. This commission concluded in September 1904 that no tricks were involved in Hans’s performance. The commission passed off the evaluation to Pfungst, who tested the basis for these claimed abilities by: 1. Isolating horse and questioner from spectators, so no cues could come from them 2. Using questioners other than the horse's master 3. Using blinders to vary whether the horse could see the questioner 4. Varying whether the questioner knew the answer to the question in advance. The social communication systems of horses may depend on the detection of small postural changes, and this would explain why Hans so easily picked up on the cues given by von Osten, even if these cues were unconscious. Even after this official debunking, von Osten, who was never persuaded by Pfungst's findings, continued to show Hans around Germany, attracting large and enthusiastic crowds. After Pfungst had become adept at giving Hans performances himself, and was fully aware of the subtle cues which made them possible, he discovered that he would produce these cues involuntarily regardless of whether he wished to exhibit or suppress them. Recognition of this phenomenon has had a large effect on experimental design and methodology for all experiments involving sentient subjects, including humans.
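
Pfungst's last two manipulations (whether the horse can see the questioner, and whether the questioner knows the answer) amount to a two-by-two blinded design. The toy simulation below is only an illustrative sketch of that logic, not a model of the historical data: it assumes a hypothetical "horse" that stops tapping whenever it sees the questioner's involuntary cue, and a questioner who gives that cue at the tap count they expect. All names and parameters are invented for illustration.

```python
import random


def run_trial(rng, questioner_knows, horse_sees, max_taps=20):
    """Simulate one question; return True if the horse taps the right number."""
    answer = rng.randint(1, 10)
    # Assumption: the questioner cues involuntarily at the count they expect.
    # If they do not know the answer, that expectation is just a guess.
    expected = answer if questioner_knows else rng.randint(1, 10)
    if horse_sees:
        taps = expected                    # horse stops when it sees the cue
    else:
        taps = rng.randint(1, max_taps)    # no visible cue: arbitrary stop
    return taps == answer


def accuracy(questioner_knows, horse_sees, n=2000, seed=0):
    """Fraction of correct answers over n simulated questions."""
    rng = random.Random(seed)
    hits = sum(run_trial(rng, questioner_knows, horse_sees) for _ in range(n))
    return hits / n


if __name__ == "__main__":
    for knows in (True, False):
        for sees in (True, False):
            rate = accuracy(knows, sees)
            print(f"questioner_knows={knows!s:5} horse_sees={sees!s:5} "
                  f"accuracy={rate:.2f}")
```

In this sketch the horse scores well only in the one cell where the questioner knows the answer and is visible. That is the qualitative pattern Pfungst reported, and it is why blinding both the subject and the experimenter is the standard remedy.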

- 71. Clever Hans Effect The risk of Clever Hans effects is one reason why comparative psychologists normally test animals in isolated apparatus, without interaction with them. However, this creates problems of its own, because many of the most interesting phenomena in animal cognition are only likely to be demonstrated in a social context, and in order to train and demonstrate them, it is necessary to build up a social relationship between trainer and animal. This point of view has been strongly argued by Irene Pepperberg in relation to her studies of parrots (Alex), and by Allen and Beatrix Gardner in their study of the chimpanzee Washoe. If the results of such studies are to gain universal acceptance, it is necessary to find some way of testing the animals' achievements which eliminates the risk of Clever Hans effects. The Clever Hans effect has also been observed in drug-sniffing dogs. A study at the University of California, Davis revealed that cues can be telegraphed by the handler to the dogs, resulting in false positives. Related • Cholla the painting horse • Harass II, a dog used in criminal investigations • Ideomotor effect • Lady Wonder, a horse with purported telepathic abilities • Beautiful Jim Key • Pygmalion effect • Nazi talking dogs • Rom Houben • Jim the Wonder Dog • Facilitated communication

- 72. Ideomotor Phenomenon The ideomotor phenomenon is a psychological phenomenon wherein a subject makes motions unconsciously. Michael Faraday, Manchester surgeon James Braid, the French chemist Michel Eugène Chevreul, and the American psychologists William James and Ray Hyman have demonstrated that many phenomena attributed to spiritual or paranormal forces, or to mysterious "energies," are actually due to ideomotor action. Furthermore, these tests demonstrate that "honest, intelligent people can unconsciously engage in muscular activity that is consistent with their expectations". They also show that suggestions that can guide behavior can be given by subtle clues. Some operators use ideomotor responses to communicate with a subject's "unconscious mind" using a system of physical signals (such as finger movements) for the unconscious mind to indicate "yes", "no", "I don't know", or "I'm not ready to know that consciously". A simple experiment to demonstrate the ideomotor effect is to allow a hand-held pendulum to hover over a sheet of paper. The paper has keywords such as YES, NO and MAYBE printed on it. Small movements in the hand, in response to questions, can cause the pendulum to move towards key words on the paper. This technique has been used for experiments in extrasensory perception, lie detection, and ouija boards. This type of experiment was used by Kreskin and has also been used by illusionists such as Derren Brown. Related • Unconscious mind • Adaptive unconscious • Illusions of self-motion • Proprioception • Subconscious • Unconscious communication • Body language

- 73. Experimenter Bias Where bias can emerge A review of bias in clinical studies concluded that bias can occur at any or all of the seven stages of research. These include: 1. Selective background reading 2. Specifying and selecting the study sample 3. Executing the experimental manoeuvre (or exposure) 4. Measuring exposures and outcomes 5. Data analysis 6. Interpretation and discussion of results 7. Publishing the results (or not) (Publication bias - not to be confused with Reporting Bias or Media Bias) Reporting bias • In epidemiology, reporting bias is defined as "selective revealing or suppression of information" by subjects (for example about past medical history, smoking, sexual experiences). • In artificial intelligence research, the term reporting bias is used to refer to people's tendency to under-report all the information available. • In empirical research, the term may be used to refer to authors under-reporting unexpected or undesirable experimental results, attributing the results to sampling or measurement error, while being more trusting of expected or desirable results, though these may be subject to the same sources of error. Media bias • Media bias is the bias or perceived bias of journalists and news producers within the mass media in the selection of events and stories that are reported and how they are covered. • The term "media bias" implies a pervasive or widespread bias contravening the standards of journalism, rather than the perspective of an individual journalist or article.

- 74. Experimenter Bias - Classification Experimenter's bias was not well recognized until the 1950s and 1960s, and then it was primarily in medical experiments and studies. Sackett (1979) catalogued 56 biases that can arise in sampling and measurement in clinical research, grouped under the first six stages of research listed above. 1. In reading-up on the field 1. the biases of rhetoric 2. the "all's well" literature bias 3. one-sided reference bias 4. positive results bias 5. hot stuff bias

- 75. Experimenter Bias - Classification 2. In specifying and selecting the study sample 1. popularity bias 2. centripetal bias 3. referral filter bias 4. diagnostic access bias 5. diagnostic suspicion bias 6. unmasking (detection signal) bias 7. mimicry bias 8. previous opinion bias 9. wrong sample size bias 10. admission rate (Berkson) bias 11. prevalence-incidence (Neyman) bias 12. diagnostic vogue bias 13. diagnostic purity bias 14. procedure selection bias 15. missing clinical data bias 16. non-contemporaneous control bias 17. starting time bias 18. unacceptable disease bias 19. migrator bias 20. membership bias 21. non-respondent bias 22. volunteer bias

- 76. Experimenter Bias - Classification 3. In executing the experimental manoeuvre (or exposure) 1. contamination bias 2. withdrawal bias 3. compliance bias 4. therapeutic personality bias 5. bogus control bias

- 77. Experimenter Bias - Classification 4. In measuring exposures and outcomes 1. insensitive measure bias 2. underlying cause bias (rumination bias) 3. end-digit preference bias 4. apprehension bias 5. unacceptability bias 6. obsequiousness bias 7. expectation bias 8. substitution game 9. family information bias 10. exposure suspicion bias 11. recall bias 12. attention bias 13. instrument bias

- 78. Experimenter Bias - Classification 5. In analyzing the data 1. post-hoc significance bias 2. data dredging bias (looking for the pony) 3. scale degradation bias 4. tidying-up bias 5. repeated peeks bias 6. In interpreting the analysis 1. mistaken identity bias 2. cognitive dissonance bias 3. magnitude bias 4. significance bias 5. correlation bias 6. under-exhaustion bias