Adversarial Training Basics

- 4. Generative Adversarial Networks (GAN): Basic Architecture

- 6. Why Generative Models are Important

- 8. Yes.. This is possible

- 9. CycleGAN - Real Time

- 10. Running Human

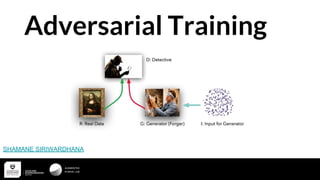

- 12. Min-Max Game ● The generator tries to fool the discriminator ● The discriminator needs to tell fake samples from real ones reliably ● Because the two are adversaries, this resembles a min-max game in game theory
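
The min-max game on this slide is usually written as the value function from the original GAN paper (listed under Resources). Here $D(x)$ is the discriminator's estimated probability that $x$ is real, $G(z)$ is a sample generated from noise $z$, and $p_{\text{real}}$ and $p_z$ are the data and noise distributions. The symbol names are a notational choice and do not come from the slides.

$$
\min_G \max_D V(D, G) = \mathbb{E}_{x \sim p_{\text{real}}}\big[\log D(x)\big] + \mathbb{E}_{z \sim p_z}\big[\log\big(1 - D(G(z))\big)\big]
$$

The discriminator pushes $V$ up by scoring real samples near 1 and generated samples near 0; the generator pushes $V$ down by making $D(G(z))$ large.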

- 13. Somehow the generator needs to fool the discriminator

- 15. Discriminator

- 16. Generator

- 17. After the Two-Way Optimization ... (figure labels: Start, End)

- 19. Discriminator & Generator Yunjey Choi

- 20. Training the Discriminator Yunjey Choi

- 21. Training the Discriminator Yunjey Choi

- 22. Training the Generator (D is fixed!) Yunjey Choi
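
The two training steps on these slides can be sketched as two loss functions. This is a minimal sketch in plain Python, assuming the discriminator outputs a probability in (0, 1) for each sample; the function names `discriminator_loss` and `generator_loss` are illustrative and are not taken from the slides or from Yunjey Choi's code.

```python
import math

def discriminator_loss(d_real, d_fake):
    """Binary cross-entropy for D: real samples labelled 1, fake samples labelled 0.

    d_real: D's outputs (probabilities in (0, 1)) on real samples.
    d_fake: D's outputs on generated samples G(z).
    """
    real_term = -sum(math.log(p) for p in d_real) / len(d_real)
    fake_term = -sum(math.log(1.0 - p) for p in d_fake) / len(d_fake)
    return real_term + fake_term

def generator_loss(d_fake):
    """Minimax generator loss with D held fixed: mean of log(1 - D(G(z)))."""
    return sum(math.log(1.0 - p) for p in d_fake) / len(d_fake)

# An undecided discriminator outputs 0.5 everywhere: loss is 2 * log(2).
undecided = discriminator_loss([0.5, 0.5], [0.5, 0.5])
# A confident, correct discriminator has a much lower loss.
confident = discriminator_loss([0.99, 0.98], [0.01, 0.02])
```

In a real training loop, the discriminator step would update only D's parameters using the first loss, and the generator step would update only G's parameters using the second loss while D stays fixed.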

- 25. Visualization

- 26. Main Problem: Discriminator Saturation ● The discriminator is too good :( ● The generator gets no chance to learn anything. Yunjey Choi
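
A small numeric sketch can show why a too-good discriminator starves the generator. It assumes D ends in a sigmoid over a logit `a`, so the gradient of the minimax generator loss `log(1 - sigmoid(a))` with respect to `a` is `-sigmoid(a)`. The second function, the "non-saturating" loss `-log(sigmoid(a))`, is the alternative suggested in the original GAN paper and is shown only for comparison; it is not on the slides.

```python
import math

def sigmoid(a):
    return 1.0 / (1.0 + math.exp(-a))

def grad_saturating(a):
    """d/da of log(1 - sigmoid(a)), the minimax generator loss at logit a."""
    return -sigmoid(a)

def grad_non_saturating(a):
    """d/da of -log(sigmoid(a)), the alternative generator loss."""
    return -(1.0 - sigmoid(a))

# a is D's logit on a generated sample; very negative means "confidently fake".
for a in (0.0, -5.0, -10.0):
    print(a, grad_saturating(a), grad_non_saturating(a))
```

As the logit becomes more negative, the first gradient shrinks toward zero while the second stays close to -1, which is the saturation effect this slide describes.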

- 27. Summary of the training process

- 28. Why Do GANs Work? No Magic

- 30. ● A GAN's task is to make the generated distribution (P_model) match the real data distribution (P_real)

- 31. ● There are ways to measure the similarity of two distributions, e.g.: ○ KL divergence ○ Jensen–Shannon divergence ● It can be shown that optimizing the GAN loss function is similar to reducing the Jensen–Shannon divergence between the two distributions
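
The two divergences named on this slide can be computed directly for small discrete distributions. The sketch below is plain Python with illustrative names (`kl_divergence`, `js_divergence`, and the example distributions are assumptions, not from the slides).

```python
import math

def kl_divergence(p, q):
    """KL(p || q) for discrete distributions given as equal-length lists.

    Assumes q[i] > 0 wherever p[i] > 0; terms with p[i] == 0 contribute 0.
    """
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)

def js_divergence(p, q):
    """Jensen-Shannon divergence: average KL of p and q to their mixture m."""
    m = [(pi + qi) / 2 for pi, qi in zip(p, q)]
    return 0.5 * kl_divergence(p, m) + 0.5 * kl_divergence(q, m)

p_real = [0.5, 0.5, 0.0]
p_model = [0.0, 0.5, 0.5]
print(js_divergence(p_real, p_model))  # positive: the distributions differ
print(js_divergence(p_real, p_real))   # 0.0: identical distributions
```

Unlike KL, the Jensen–Shannon divergence is symmetric and stays finite even when the two distributions do not overlap, where it reaches its maximum of log 2.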

- 33. When we have an optimal discriminator, optimizing the loss = minimizing the Jensen–Shannon divergence
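
The claim on this slide can be sketched in two steps, following the argument in the original GAN paper. The notation is an assumption chosen to match the slides: $p_{\text{real}}$ and $p_{\text{model}}$ are the real and generated distributions, and the GAN objective is $V(D, G) = \mathbb{E}_{x \sim p_{\text{real}}}[\log D(x)] + \mathbb{E}_{x \sim p_{\text{model}}}[\log(1 - D(x))]$.

$$
D^*(x) = \frac{p_{\text{real}}(x)}{p_{\text{real}}(x) + p_{\text{model}}(x)}
$$

$$
V(D^*, G) = -\log 4 + 2 \, \mathrm{JSD}\big(p_{\text{real}} \,\|\, p_{\text{model}}\big)
$$

The first line is the discriminator that maximizes $V$ for a fixed generator. Substituting it back gives the second line, so minimizing over the generator minimizes the Jensen–Shannon divergence, which is zero exactly when $p_{\text{model}} = p_{\text{real}}$.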

- 34. Yeah !

- 35. GANs are not easy to train! ● Non-convergence: the parameters oscillate, destabilize, and may never converge (issues with reaching a Nash equilibrium). ● Mode collapse: the generator collapses and produces only a limited variety of samples.
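
Non-convergence can be illustrated with a toy two-player game that is not on the slides: one player minimizes `f(x, y) = x * y` over `x` while the other maximizes it over `y`. The equilibrium is at `(0, 0)`, yet simultaneous gradient steps spiral away from it. The function name and step size below are illustrative assumptions.

```python
import math

def simultaneous_gradient_steps(x, y, lr=0.1, steps=100):
    """Simultaneous gradient descent on x and ascent on y for f(x, y) = x * y.

    Returns the distance from the equilibrium (0, 0) after each step.
    """
    radii = []
    for _ in range(steps):
        grad_x, grad_y = y, x  # df/dx = y, df/dy = x
        x, y = x - lr * grad_x, y + lr * grad_y
        radii.append(math.hypot(x, y))
    return radii

radii = simultaneous_gradient_steps(1.0, 1.0)
print(radii[0], radii[-1])  # the distance from (0, 0) keeps growing
```

Each step multiplies the squared distance from the equilibrium by `1 + lr**2`, so the iterates circle the solution and drift outward instead of settling on it.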

- 37. Yes! There are more stable methods now! ❖ Wasserstein GAN ❖ WGAN vs GAN: similar in form and function; the main change is the loss function!

- 38. Now the discriminator is more of a critic! ❖ Previously the discriminator and the generator worked against each other ❖ Now the discriminator tries to tell the generator how far its generated data deviates from the actual data distribution ❖ No log probabilities, so no vanishing gradients ❖ Uses the Earth Mover's (EM) distance to model the loss function!
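
The change in loss can be sketched as follows, assuming the critic outputs an unbounded real-valued score per sample (no sigmoid and no logarithm). The function names are illustrative, and the sign convention (losses to be minimized) is one common choice rather than something stated on the slides.

```python
def wgan_critic_loss(f_real, f_fake):
    """Critic loss: minimizing it widens the score gap between real and fake."""
    return sum(f_fake) / len(f_fake) - sum(f_real) / len(f_real)

def wgan_generator_loss(f_fake):
    """Generator loss: minimizing it raises the critic's score on fake samples."""
    return -sum(f_fake) / len(f_fake)

real_scores = [2.0, 4.0]  # critic scores on real samples
fake_scores = [0.0, 1.0]  # critic scores on generated samples
print(wgan_critic_loss(real_scores, fake_scores))  # -2.5
print(wgan_generator_loss(fake_scores))            # -0.5
```

Because the scores enter the loss linearly, the gradient with respect to each score is constant, which is why this loss does not saturate the way the log-based loss does.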

- 39. Wasserstein Distance or EM Distance ● This measures how much work the generator has to do to match the distribution of the real images ● This is why we call it a critic!
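
For intuition, the EM distance has a simple closed form in one dimension. The sketch below assumes two samples of equal size with uniform weights, in which case the cheapest way to move one sample onto the other pairs the sorted points in order. The function name is illustrative; a WGAN does not compute the distance this way, it approximates it through the critic.

```python
def em_distance_1d(xs, ys):
    """Earth Mover's distance between two equal-size 1-D samples.

    With equal sizes and uniform weights, the optimal transport plan
    pairs the sorted points in order.
    """
    if len(xs) != len(ys):
        raise ValueError("this sketch assumes equal sample sizes")
    return sum(abs(a - b) for a, b in zip(sorted(xs), sorted(ys))) / len(xs)

real = [0.0, 1.0, 2.0]
generated = [5.0, 6.0, 7.0]
print(em_distance_1d(real, generated))  # 5.0
```

The result reads as "each unit of generated mass has to travel 5.0 on average", which is the amount of work this slide refers to.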

- 40. Reducing the distance between generated samples and real samples (figure labels: generator distribution, real distribution, critic)

- 42. WGAN vs GAN

- 44. We need to clip the weights in the discriminator ● f has to be a 1-Lipschitz function ● To enforce the constraint, WGAN applies a very simple clipping that restricts the maximum weight value in f ● The weights of the discriminator must stay within a range controlled by a hyperparameter ● After every update we need to clip the weights of the discriminator
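
The clipping step described on this slide can be sketched in a few lines. The weights are held in a flat Python list for simplicity, and the function name `clip_weights` and the threshold `c=0.01` are illustrative assumptions; a real implementation would clip each parameter tensor of the critic in place.

```python
def clip_weights(weights, c=0.01):
    """Clamp every weight into [-c, c], as done after each critic update."""
    return [max(-c, min(c, w)) for w in weights]

weights = [0.5, -0.003, -0.2, 0.01]
print(clip_weights(weights))  # [0.01, -0.003, -0.01, 0.01]
```

Weights already inside the range are left unchanged; only those outside it are pulled back to the boundary.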

- 46. Solving the vanishing gradient issue ... More stable training ...

- 49. Resources ● GAN: https://arxiv.org/abs/1406.2661 ● WGAN: https://arxiv.org/abs/1701.07875 ● Improved WGAN: https://arxiv.org/abs/1704.00028 ● Towards Principled Methods for Training Generative Adversarial Networks: https://openreview.net/pdf?id=Hk4_qw5xe ● Article series by Jonathan Hui: https://medium.com/@jonathan_hui/gan-whats-generative-adversarial-networks-and-its-application-f39ed278ef09

- 50. What we are interested in ... ❖ GANs have an amazing ability to enrich reinforcement learning, in areas such as: 1. Planning 2. Inverse reinforcement learning

- 51. Imitation Learning ● Learning from an expert's demonstrations ● Something in between supervised learning and deep reinforcement learning ● There is a clear connection between GANs and imitation learning

- 52. Generative Adversarial Imitation Learning

- 53. What is the difference? 1. Instead of just images, we have the expert's trajectories, i.e. state-action pairs 2. The generator is now an AI agent
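
The two differences on this slide can be sketched as data and a reward function. Trajectories are lists of (state, action) pairs, and the agent is rewarded when the discriminator believes its pair came from the expert. Everything named here (`surrogate_reward`, `toy_discriminator`, the example trajectories) is hypothetical and for illustration only; sign and labelling conventions also differ between implementations.

```python
import math

def surrogate_reward(discriminator, state, action, eps=1e-8):
    """Reward for the agent: high when D believes the pair is expert data."""
    d = discriminator(state, action)  # probability the pair is expert data
    return -math.log(1.0 - d + eps)

# Hypothetical stand-in discriminator, only for illustration.
def toy_discriminator(state, action):
    return 0.9 if action == "forward" else 0.1

expert_trajectory = [((0, 0), "forward"), ((0, 1), "forward")]
agent_trajectory = [((0, 0), "left"), ((1, 0), "forward")]

agent_rewards = [
    surrogate_reward(toy_discriminator, s, a) for s, a in agent_trajectory
]
print(agent_rewards)  # the expert-like "forward" step earns the larger reward
```

In this setup the agent plays the generator's role: instead of backpropagating through the discriminator, it is updated with a reinforcement learning algorithm using these rewards.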

- 54. Generative Adversarial Imitation Learning

- 55. Extra: Target-Driven Visual Navigation

- 56. Target-Driven Visual Navigation