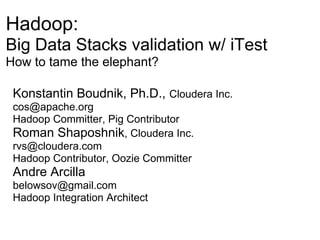

Hadoop: Big Data Stacks validation w/ iTest How to tame the elephant?

- 1. Hadoop: Big Data Stacks validation w/ iTest. How to tame the elephant? ● Konstantin Boudnik, Ph.D., Cloudera Inc., cos@apache.org (Hadoop Committer, Pig Contributor) ● Roman Shaposhnik, Cloudera Inc., rvs@cloudera.com (Hadoop Contributor, Oozie Committer) ● Andre Arcilla, belowsov@gmail.com (Hadoop Integration Architect)

- 2. This content is licensed under Creative Commons BY-NC-SA 3.0

- 3. Agenda ● Problem we are facing ○ Big Data Stacks ○ Why validation ● What is "Success" and the effort to achieve it ● Solutions ○ Ops testing ○ Platform certification ○ Application testing ● Stack on stack ○ Test artifacts are First Class Citizen ○ Assembling validation stack (vstack) ○ Tailoring vstack for target clusters ○ D3: Deployment/Dependencies/Determinism

- 4. Not on Agenda ● Development cycles ○ Features, fixes, commits, patch testing ● Release engineering ○ Release process ○ Package preparations ○ Release notes ○ RE deployments ○ Artifact management ○ Branching strategies ● Application deployment ○ Cluster update strategies (rolling vs. offline) ○ Cluster upgrades ○ Monitoring ○ Statistics collection ● We aren't done yet, but...

- 5. What's a Big Data Stack anyway? Just a base layer!

- 6. What is Big Data Stack? Guess again...

- 7. What is Big Data Stack? A bit more...

- 8. What is Big Data Stack? A bit or two more...

- 9. What is Big Data Stack? A bit or two more + a bit = HIVE

- 10. What is Big Data Stack?

- 11. What is Big Data Stack? And a Sqoop of flavor...

- 12. What is Big Data Stack? A Babylon tower?

- 13. What is the glue that holds the bricks? ● Packaging ○ RPM, DEB, YINST, EINST? ○ Not the best fit for Java ● Maven ○ Part of the Java ecosystem ○ Not the best tool for non-Java artifacts ● Common APIs we will assume ○ Versioned artifacts ○ Dependencies ○ BOMs

- 14. How can we possibly guarantee that everything is right?

- 15. Development & Deployment Discipline !

- 16. Is it enough really?

- 17. Of course not...

- 18. Components: ● I want Pig 0.7 ● You need Pig 0.8

- 19. Components: ● I want Pig 0.7 ● You need Pig 0.8 Configurations: ● Have I put the 5th data volume into the DN's conf on my 6TB nodes? ● Have I _not_ copied it accidentally to my 4TB nodes?

- 20. Components: ● I want Pig 0.7 ● You need Pig 0.8 Configurations: ● Have I put the 5th data volume into the DN's conf on my 6TB nodes? ● Have I _not_ copied it accidentally to my 4TB nodes? Auxiliary services: ● Does my Sqoop have the Oracle connector?
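
The configuration question above can be turned into an automated check. The following is a minimal sketch, not part of iTest: it assumes the DataNode volumes are listed as a comma-separated value (as in Hadoop 0.20's `dfs.data.dir`), and the class name, paths, and the count of four volumes for 4TB nodes are hypothetical.

```java
import java.util.Arrays;
import java.util.List;
import java.util.stream.Collectors;

/** Hypothetical helper (not an iTest class): counts the data volumes a DataNode config lists. */
final class DataVolumeCheck {
    private DataVolumeCheck() {}

    /** Splits a comma-separated dfs.data.dir value into trimmed, non-empty paths. */
    static List<String> parseDataDirs(String dfsDataDir) {
        return Arrays.stream(dfsDataDir.split(","))
                .map(String::trim)
                .filter(s -> !s.isEmpty())
                .collect(Collectors.toList());
    }

    /** True when the config lists exactly the number of volumes expected for this node class. */
    static boolean hasExpectedVolumes(String dfsDataDir, int expectedVolumes) {
        return parseDataDirs(dfsDataDir).size() == expectedVolumes;
    }

    public static void main(String[] args) {
        // Example values only; real paths and volume counts depend on the cluster.
        String sixTbNode = "/data/1/dfs,/data/2/dfs,/data/3/dfs,/data/4/dfs,/data/5/dfs";
        String fourTbNode = "/data/1/dfs,/data/2/dfs,/data/3/dfs,/data/4/dfs";
        System.out.println("6TB node has 5 volumes: " + hasExpectedVolumes(sixTbNode, 5));
        System.out.println("4TB node has 4 volumes: " + hasExpectedVolumes(fourTbNode, 4));
        // The accident from the slide: the 6TB config copied onto a 4TB node.
        System.out.println("6TB conf valid on 4TB node: " + hasExpectedVolumes(sixTbNode, 4));
    }
}
```

A check like this would be run per node class, so a config copied to the wrong hardware fails fast instead of surfacing later as a DataNode error.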

- 21. Honestly: ● Can anyone remember all these details?

- 22. What if you've missed some?

- 23. How would you like your 10th re-spin of a release? Make it bloody this time, please...

- 24. Redeployments...

- 26. LOST REVENUE ;(

- 27. And don't you have anything better to do with that life of yours?

- 28. Who Needs A Stack Anyway? ● Developers ○ reference platform which is complete and works ○ less handholding for downstream groups ● QE ○ definitive testable artifact, something to explore and certify ○ free QE to do QE ● Operations ○ deploy a "Hadoop", not a "Hadoop erector set" ○ confidence in dev/QE effort ● Eliminate cross-team effort "bleed" ● Align teams on a common "axis"

- 29. Successful Stack Deployment System "Customers Are Happy!" ● Deploy stack configuration X to a cluster Y, for any X & Y that: ○ satisfy devs, QE and Ops ○ are variable enough to absorb all the crazy use cases

- 30. Who Needs Stack Testing Anyway? ● All stack customers ○ Yes, QE for sure (integration, system, CI levels...), but also ○ Devs: did my latest patch break security auth or slow HDFS to a crawl? ○ Ops: you ask for "striping". Did YOU test striping? Can WE test it as well? ○ Customers: did you test MY stack?

- 31. Successful Integration Testing System "Customers Are Happy!" 1. QE: easy to deploy, configure, run, add tests 2. Stakeholders: provides relevant info about stack quality ○ requires plenty of relevant tests and datapoints ○ see 1)

- 32. Effort Required To Deploy/Test Stacks

- 33. The Parable of Garage ● Lots of stuff coexisting together ○ fancy, polished machines (mountain bike) ○ utility tools (hedge trimmer, shop vacuum) ○ ugly misfits (old paint pan, smelly dry mushrooms) ● Cannot be "simplified" due to complexity and evolving nature ● Need to manage complexity: use, change, check ● Keep objects inviolate: cannot expect a bucket to mount directly on a wall ● Reliance on external services: studs, drywall, garage door

- 34. The Complexity Management Solution ● Provide the Framework and the means for objects to plug into this framework ● Framework ○ holds everything together, enables management ○ sophisticated design, well engineered, sturdy ● "Glue"/"Bubblegum logic" ○ binds components to the framework via minimally-invasive API ○ easy to design, engineering complexity is lower ○ follows well-documented process ● Components ○ actual participants of the garage ○ require no modifications

- 35. Engineering Effort Matrix

  | | Development Effort | Level of Complexity | External Dependencies | Investment | Develop in Parallel |
  |---|---|---|---|---|---|
  | Framework | Medium | High | Many | One time | No |
  | Component Glue | Low-Medium, by example | Low-Medium | Few or none, provided by framework | Incremental, per component | Yes |

  ● Framework - high complexity, one-time effort ● Components - per-component incremental effort, average complexity, code-by-example

- 36. Validation Stack for Big Data A Babylon tower vs Tower of Hanoi

- 37. Validation Stack (tailored) Or something like this...

- 38. Use accepted platform, tools, practices ● JVM is simply The Best ○ Disclaimer: not to start a religious war ● Yet, Java isn't dynamic enough (as in JDK6) ○ But we don't care what your implementation language is ○ Groovy, Scala, Clojure, JPython (?) ● Everyone knows JUnit/TestNG ○ alas, not everyone can use it effectively ● Dependency tracking and packaging ○ Maven ● Information radiators facilitate data comprehension and sharing ○ TeamCity ○ Jenkins

- 39. Few more pieces ● Tests/workloads have to be artifact'ed ○ It's not good to go fishing for test classes ● Artifacts have to be self-contained ○ Reading 20 pages to find a URL to copy a file from? "Forget about it" (C) ● Standard execution interface ○ JUnit's Runner is as good as any custom one ● A recognizable reporting format ○ XML sucks, but at least it has a structure

- 40. A test artifact (PigSmoke 0.9-SNAPSHOT)

      <project>
        <groupId>org.apache.pig</groupId>
        <artifactId>pigsmoke</artifactId>
        <packaging>jar</packaging>
        <version>0.9.0-SNAPSHOT</version>
        <dependencies>
          <dependency>
            <groupId>org.apache.pig</groupId>
            <artifactId>pigunit</artifactId>
            <version>0.9.0-SNAPSHOT</version>
          </dependency>
          <dependency>
            <groupId>org.apache.pig</groupId>
            <artifactId>pig</artifactId>
            <version>0.9.0-SNAPSHOT</version>
          </dependency>
        </dependencies>
      </project>

- 41. How do we write iTest artifacts

      $ cat TestHadoopTinySmoke.groovy
      class TestHadoopTinySmoke {
        ....
        @BeforeClass
        static void setUp() throws IOException {
          String pattern = null; //Let's unpack everything
          JarContent.unpackJarContainer(TestHadoopSmoke.class, '.' , pattern);
          .....
        }

        @Test
        void testCacheArchive() {
          def conf = (new Configuration()).get("fs.default.name");
          ....
          sh.exec("hadoop fs -rmr ${testDir}/cachefile/out",
                  "hadoop ....

- 42. Add suitable dependencies (if desired)

      <project>
        ...
        <dependency>
          <groupId>org.apache.pig</groupId>
          <artifactId>pigsmoke</artifactId>
          <version>0.9-SNAPSHOT</version>
          <scope>test</scope>
        </dependency>
        <!-- OMG: Hadoop dependency _WAS_ missed -->
        <dependency>
          <groupId>org.apache.hadoop</groupId>
          <artifactId>hadoop-core</artifactId>
          <version>0.20.2-CDH3B4-SNAPSHOT</version>
        </dependency>
        ...

- 43. Unpack data (if needed)

      ...
      <execution>
        <id>unpack-testartifact-jar</id>
        <phase>generate-test-resources</phase>
        <goals>
          <goal>unpack</goal>
        </goals>
        <configuration>
          <artifactItems>
            <artifactItem>
              <groupId>org.apache.pig</groupId>
              <artifactId>pigsmoke</artifactId>
              <version>0.9-SNAPSHOT</version>
              <type>jar</type>
              <outputDirectory>${project.build.directory}</outputDirectory>
              <includes>test/data/**/*</includes>
            </artifactItem>
          </artifactItems>
        </configuration>
      </execution>
      ...

- 44. Find runtime libraries (if required)

      ...
      <execution>
        <id>find-lzo-jar</id>
        <phase>pre-integration-test</phase>
        <goals>
          <goal>execute</goal>
        </goals>
        <configuration>
          <source>
            try {
              project.properties['lzo.jar'] = new File("${HADOOP_HOME}/lib").list(
                [accept:{d, f-> f ==~ /hadoop.*lzo.*.jar/ }] as FilenameFilter
              ).toList().get(0);
            } catch (java.lang.IndexOutOfBoundsException ioob) {
              log.error "No lzo.jar has been found under ${HADOOP_HOME}/lib. Check your installation.";
              throw ioob;
            }
          </source>
        </configuration>
      </execution>
      </project>
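
The lookup on slide 44 can also be expressed outside the POM. This is a rough Java sketch of the same idea, not the authors' code: the class and method names are hypothetical, the dot before `jar` is escaped (the slide's pattern leaves it unescaped), and candidates are sorted so the pick does not depend on directory listing order.

```java
import java.io.File;
import java.util.Arrays;
import java.util.Collection;
import java.util.Optional;
import java.util.regex.Pattern;

/** Hypothetical helper: locates a Hadoop LZO jar among the files of a lib directory. */
final class LzoJarFinder {
    // Same shape as the slide's Groovy pattern, with the dot before "jar" escaped.
    private static final Pattern LZO_JAR = Pattern.compile("hadoop.*lzo.*\\.jar");

    private LzoJarFinder() {}

    /** Picks the alphabetically first file name that fully matches the LZO jar pattern. */
    static Optional<String> pickLzoJar(Collection<String> fileNames) {
        return fileNames.stream()
                .filter(name -> LZO_JAR.matcher(name).matches())
                .sorted()
                .findFirst();
    }

    /** Lists libDir and delegates to pickLzoJar; empty if libDir is not a readable directory. */
    static Optional<String> findLzoJar(File libDir) {
        String[] names = libDir.list();
        if (names == null) {
            return Optional.empty();
        }
        return pickLzoJar(Arrays.asList(names));
    }

    public static void main(String[] args) {
        String hadoopHome = System.getenv("HADOOP_HOME");
        if (hadoopHome == null) {
            System.err.println("HADOOP_HOME is not set");
            return;
        }
        Optional<String> jar = findLzoJar(new File(hadoopHome, "lib"));
        System.out.println(jar.isPresent()
                ? "Found " + jar.get()
                : "No lzo.jar has been found under " + hadoopHome + "/lib. Check your installation.");
    }
}
```

Returning an `Optional` instead of throwing leaves the decision to fail the build with the caller; the slide's version fails immediately, which is the stricter choice for a pre-integration-test phase.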

- 45. Take it easy: iTest will do the rest

      ...
      <execution>
        <id>check-testslist</id>
        <phase>pre-integration-test</phase>
        <goals>
          <goal>execute</goal>
        </goals>
        <configuration>
          <source><![CDATA[
            import org.apache.itest.*
            if (project.properties['org.codehaus.groovy.maven.destination'] &&
                project.properties['org.codehaus.groovy.maven.jar']) {
              def prefix = project.properties['org.codehaus.groovy.maven.destination'];
              JarContent.listContent(project.properties['org.codehaus.groovy.maven.jar']).each {
                TestListUtils.touchTestFiles(prefix, it);
              };
            }]]>
          </source>
        </configuration>
      </execution>
      ...

- 46. Tailored validation stack

      <project>
        <groupId>com.cloudera.itest</groupId>
        <artifactId>smoke-tests</artifactId>
        <packaging>pom</packaging>
        <version>1.0-SNAPSHOT</version>
        <name>hadoop-stack-validation</name>
        ...
        <!-- List of modules which should be executed as a part of stack testing run -->
        <modules>
          <module>pig</module>
          <module>hive</module>
          <module>hadoop</module>
          <module>hbase</module>
          <module>sqoop</module>
        </modules>
        ...
      </project>

- 47. Just let Jenkins do its job (results)

- 48. Just let Jenkins do its job (trending)

- 49. What else needs to be taken care of? ● Packaged deployment ○ packaged artifact verification ○ stack validation Little Puppet on top of JVM:

      static PackageManager pm;

      @BeforeClass
      static void setUp() {
        ....
        pm = PackageManager.getPackageManager()
        pm.addBinRepo("default", "http://archive.canonical.com/", key)
        pm.refresh()
        pm.install("hadoop-0.20")

- 50. The coolest thing about single platform:

      void commonPackageTest(String[] pkgs, Closure smoke, ...) {
        pkgs.each { pm.install(it) }
        pkgs.each { assertTrue("package ${it.name} is not installed", pm.isInstalled(it)) }
        pkgs.each { pm.svc_do(it, "start") }
        smoke.call(args)
      }

      @Test
      void testHadoop() {
        commonPackageTest(["hadoop-0.20", ...],
                          this.&commonSmokeTestsuiteRun, TestHadoopSmoke.class)
        commonPackageTest(["hadoop-0.20", ...],
                          { sh.exec("hadoop fs -ls /") })
      }
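
The flow on slide 50 (install, verify, start services, then run a smoke check) can be sketched in plain Java. Everything here is an assumption for illustration: `PackageManagerSketch` is a hypothetical stand-in and not iTest's actual `PackageManager` API, and the in-memory fake only exists so the sequence can run without touching a real package system.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.HashSet;
import java.util.List;
import java.util.Set;

/** Hypothetical stand-in for a package manager; the real iTest API may differ. */
interface PackageManagerSketch {
    void install(String pkg);
    boolean isInstalled(String pkg);
    void startService(String pkg);
}

/** In-memory fake that records what was installed and started. */
final class FakePackageManager implements PackageManagerSketch {
    final Set<String> installed = new HashSet<>();
    final List<String> started = new ArrayList<>();

    @Override public void install(String pkg) { installed.add(pkg); }
    @Override public boolean isInstalled(String pkg) { return installed.contains(pkg); }
    @Override public void startService(String pkg) { started.add(pkg); }
}

final class PackageSmokeFlow {
    private PackageSmokeFlow() {}

    /** Installs all packages, verifies each one, starts their services, then runs the smoke check. */
    static void commonPackageTest(PackageManagerSketch pm, List<String> pkgs, Runnable smoke) {
        pkgs.forEach(pm::install);
        for (String pkg : pkgs) {
            if (!pm.isInstalled(pkg)) {
                throw new AssertionError("package " + pkg + " is not installed");
            }
        }
        pkgs.forEach(pm::startService);
        smoke.run();
    }

    public static void main(String[] args) {
        FakePackageManager pm = new FakePackageManager();
        // The smoke step is a placeholder; a real run would execute something like `hadoop fs -ls /`.
        commonPackageTest(pm, Arrays.asList("hadoop-0.20"),
                () -> System.out.println("smoke check would run here"));
        System.out.println("started services: " + pm.started);
    }
}
```

Passing the smoke check as a `Runnable` mirrors the Groovy `Closure` parameter: the same install-and-verify scaffolding is reused while the per-component check varies.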

- 51. Fully automated unified reporting

- 52. iTest: current status ● Version 0.1 available at http://github.com/cloudera/iTest ● Apache 2.0 licensed ● Contributors are welcome (free cookies to first 25)

- 53. Putting all technologies together: ● Puppet, iTest, Whirr: 1. Change hits an SCM repo 2. Hudson build produces Maven + Packaged artifacts 3. Automatic deployment of modified stacks 4. Automatic validation using corresponding stack of integration tests 5. Rinse and repeat ● Challenges: ○ Maven versions vs. packaged versions vs. source ○ Strict, draconian discipline in test creation ○ Battling combinatorial explosion of stacks ○ Size of the cluster (pseudo-distributed <-> 500 nodes) ○ Self-contained dependencies (JDK to the rescue!) ○ Sure, but does it brew espresso?
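
To make the "explosion of stacks" challenge concrete, here is a small sketch that enumerates every stack obtainable from a set of per-component version choices. The class name is hypothetical, and apart from Pig 0.7/0.8 (mentioned earlier in the deck) the component versions in `main` are placeholders.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

/** Hypothetical helper: enumerates every stack that a set of component version choices allows. */
final class StackCombinations {
    private StackCombinations() {}

    /** Cartesian product of version choices; each result maps component name to one version. */
    static List<Map<String, String>> enumerate(Map<String, List<String>> versionsByComponent) {
        List<Map<String, String>> stacks = new ArrayList<>();
        stacks.add(new LinkedHashMap<>()); // start from a single empty stack
        for (Map.Entry<String, List<String>> entry : versionsByComponent.entrySet()) {
            List<Map<String, String>> next = new ArrayList<>();
            for (Map<String, String> partial : stacks) {
                for (String version : entry.getValue()) {
                    Map<String, String> extended = new LinkedHashMap<>(partial);
                    extended.put(entry.getKey(), version);
                    next.add(extended);
                }
            }
            stacks = next;
        }
        return stacks;
    }

    public static void main(String[] args) {
        Map<String, List<String>> choices = new LinkedHashMap<>();
        choices.put("pig", Arrays.asList("0.7", "0.8"));
        // Placeholder version labels, for illustration only.
        choices.put("hadoop", Arrays.asList("version-A", "version-B"));
        choices.put("hive", Arrays.asList("version-A", "version-B", "version-C"));
        List<Map<String, String>> stacks = enumerate(choices);
        System.out.println(stacks.size() + " candidate stacks");
        stacks.forEach(System.out::println);
    }
}
```

The count is the product of the per-component choices, so each added component or supported version multiplies the number of stacks to validate; in practice only a curated subset would be deployed and tested.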

- 54. Definition of Success ● Build a powerful platform that sustains a high rate of product innovation without accumulating technical debt ● Tighten the organizational feedback loop to accelerate product evolution ● Improve culture and processes to achieve Agility with Stability as the organization builds new technologies