Handout web technology.doc1

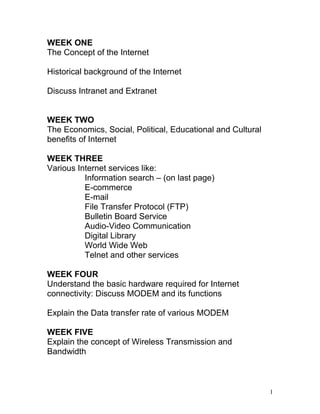

- 1. WEEK ONE: The Concept of the Internet; Historical background of the Internet; Discuss Intranet and Extranet

  WEEK TWO: The Economic, Social, Political, Educational and Cultural benefits of the Internet

  WEEK THREE: Various Internet services like: Information search – (on last page); E-commerce; E-mail; File Transfer Protocol (FTP); Bulletin Board Service; Audio-Video Communication; Digital Library; World Wide Web; Telnet and other services

  WEEK FOUR: Understand the basic hardware required for Internet connectivity: Discuss the MODEM and its functions; Explain the data transfer rate of various MODEMs

  WEEK FIVE: Explain the concept of Wireless Transmission and Bandwidth

- 2. Discuss various wireless transmission media: VSAT, Radio, etc.

  WEEK SIX: Discuss obstacles to effective transmission; Discuss the steps required to connect a PC to the Internet

  WEEK SEVEN: Discuss problems of telecommunication infrastructure in Nigeria: Technical know-how

  WEEK EIGHT: Economic factors in Nigeria – poverty level of the people; level of awareness

  WEEK NINE: The government policies on Internet access; Explain the concept of ISP and the need for it

  WEEK TEN: Explain the economic effect of using a local or foreign ISP; Describe the Domain Name System (DNS) and its space

  WEEK ELEVEN: Explain how to name servers in the DNS; Revision

  WEEK TWELVE: EXAMINATION

- 3. LECTURER: MR. M.A. ADEWUSI

  THE CONCEPT OF THE INTERNET

  The Internet can be described as the interconnection of a variety of networks and computers. It makes use of the Internet Protocol (IP) and the Transmission Control Protocol (TCP). The Internet opened the doors of communication between stations, and it facilitates the storage and transmission of large volumes of data. It is one of the most powerful communication tools available today.

  In the 1990s the Internet gained popularity among the general public, and people became aware of its uses. It helped people organize their information and files systematically, and a great deal of research was conducted on it. Gopher was one of the first widely used interfaces for browsing Internet documents. In 1991, a network-based implementation of hypertext was made available. The technology was inspired by the work of many people. With the advent of the World Wide Web and its search engines, the popularity of the Internet grew enormously. Today the Internet is used in science, commerce and nearly every other field.

  There are various ways to access the Internet. With advances in technology, people can now access Internet services through cell phones, game consoles and various other gadgets. There are also large numbers of Internet service providers.

  With the development and widespread use of electronic mail, people from all across the globe have come together, and communication has become much easier than ever before. Messages in the form of emails can be sent to any corner of the world within fractions of a second. Email also facilitates mass communication (one sender, many receivers).

- 4. Email, video conferencing, live telecasts, music, news and e-commerce are some of the services made available by the Internet. Entertainment has taken on new dimensions with the growth of the Internet, and what we see is continuous development and transformation.

  THE WEB 2.0 CONCEPT

  "Web 2.0" refers to what is perceived as a second generation of web development and web design. It is characterized as facilitating communication, information sharing, interoperability, user-centered design[1] and collaboration on the World Wide Web. It has led to the development and evolution of web-based communities, hosted services, and web applications. Examples include social-networking sites, video-sharing sites, wikis, blogs, mashups and folksonomies.

  The term "Web 2.0" was coined by Darcy DiNucci in 1999. In her article "Fragmented Future," she writes:[2]

  "The Web we know now, which loads into a browser window in essentially static screenfuls, is only an embryo of the Web to come. The first glimmerings of Web 2.0 are beginning to appear, and we are just starting to see how that embryo might develop. ... The Web will be understood not as screenfuls of text and graphics but as a transport mechanism, the ether through which interactivity happens. It will [...] appear on your computer screen, [...] on your TV set [...] your car dashboard [...] your cell phone [...] hand-held game machines [...] and maybe even your microwave."

  Her arguments about Web 2.0 are nascent, yet they hint at the meaning that is associated with the term today. The term is now closely associated with Tim O'Reilly because of the O'Reilly Media Web 2.0 conference in 2004.[3][4] Although the term suggests a new version of the World Wide Web, it does not refer to an update to any technical specifications, but rather to cumulative changes in the ways software developers and end-users utilize the Web.

  According to Tim O'Reilly: "Web 2.0 is the business revolution in the computer industry caused by the move to the Internet as a platform, and an attempt to understand the rules for success on that new platform."[5]

- 5. However, whether Web 2.0 is qualitatively different from prior web technologies has been challenged. For example, World Wide Web inventor Tim Berners-Lee called the term a "piece of jargon"[6].

  HISTORICAL BACKGROUND OF THE INTERNET

  1950

  As early as 1950, work began on the defense system known as the Semi-Automatic Ground Environment, or SAGE. This defense system was thought to be required to protect the US mainland from the new Soviet long-range bombers and missile carriers such as the Tupolev. IBM, MIT and Bell Labs worked together to build the SAGE continental air defense network, which became operational in 1959. SAGE was the most advanced network in the world at the time of its creation and consisted of early-warning radar systems on land, at sea, and even in the air courtesy of AWACS planes. The network technology led to more advanced systems and protocols that would one day become the Internet, as well as common hardware items such as the mouse, magnetic memory (tape), computer graphics, and the modem.

  1957

  In 1957 the USSR launched the first earth-orbiting artificial satellite and kicked off the space race in a big way. The United States, suddenly fearful of Russian space platforms armed with nuclear weapons, needed an agency designed to combat this menace. ARPA, the Advanced Research Projects Agency, was founded in 1958 and was given the mission of making the US the leader in science and technology. In 1972 ARPA was renamed the Defense Advanced Research Projects Agency (DARPA). It reverted to the name ARPA in 1993 and has been called DARPA again since 1996.

- 6. ARPA hired J.C.R. Licklider in 1962 to become the Director of its Information Processing Techniques Office (IPTO). Licklider's work eventually led to the field of interactive computing and is a foundation of the field of Computer Science in general. His work on computer timesharing helped usher in the practical use of the computer network.

  1962 – Rise of the Modem and the Conceptual Internet

  AT&T released the Bell 103, a commercially available modem that was the first with full-duplex transmission capability; it had a speed of 300 bits per second.

  The first real conceptual plan of the Internet appeared in a series of memos by J.C.R. Licklider, in which he referred to a "Galactic Network" that connected all users and data in the world. These memos grew from his first paper on the subject, Man-Computer Symbiosis, released in 1960, although this early work covered human interaction with computers more than human-to-human communication. In 1962 the aptly named On-Line Man-Computer Communication was released; it dealt with the concept of social interaction through computer networks.

  Birth of the World Wide Web

  1992 – ISOC

  Founded in 1992, the Internet Society (ISOC) is a non-profit organization that assists in the development of Internet education, policy and standards. The organization has offices in the U.S. and Switzerland and works toward an Internet evolution that will benefit the entire world. ISOC is the home of the organizations responsible for Internet infrastructure standards, including the Internet Engineering Task Force (IETF) and the Internet Architecture Board (IAB). ISOC is also a clearinghouse for Internet education and information and a facilitator for Internet activities all over the world. ISOC has run network training programs for developing countries and assisted in connecting almost every country to the Internet.

- 7. 1994 - The Web Browser is Born

  Jim Clark and Marc Andreessen founded Mosaic Communications in 1994. Andreessen had been the leader of a software project at the University of Illinois called Mosaic, the first widely used web browser available to the public. Clark and Andreessen changed the name of Mosaic Communications to Netscape Communications, and their web browser was soon released to a frantically growing market. Netscape very quickly became the largest browser firm in the world and dominated the market. Software releases seemed to come out monthly if not faster, and it was these Netscape offerings that led to the term "internet time". Business was moving faster every day. By 1995 Netscape had an 80% market share.

  1995 - Windows 95 and the Browser Wars

  Windows 95 was released by Microsoft and took the world by storm. The software giant solidified its OS presence and began to make its way into homes across the world. A little-known program included with the OS was Internet Explorer, a web browser. Microsoft wanted to challenge Netscape's dominance in the browser market, and had the OS platform to do it with. Netscape pushed the boundaries of browser technology and made technological leaps forward on an almost daily basis. Netscape was considered the most advanced browser available, and Microsoft had years of catching up to do. One key difference, however, was that Internet Explorer was free and Netscape was not. This was difficult to overcome, but Netscape pushed forward in what was now being called the Browser Wars.

  Although the term Browser Wars generally refers to the competition in the marketplace among the various web browsers of the 1990s, it is most commonly used in reference to Internet Explorer and Netscape Navigator. Microsoft eventually captured Netscape's market share, and Netscape Navigator ceased to be. Firefox is now the primary browser competitor to Internet Explorer.

  1998 – ICANN is Formed

- 8. ICANN is the Internet Corporation for Assigned Names and Numbers, a not-for-profit public benefit corporation. ICANN helps coordinate the unique identifiers every computer needs in order to communicate via the Internet. It is by these identifiers that computers find each other, and without them no communication would be possible. ICANN is dedicated to keeping the Internet secure, stable, and interoperable.

  1999 – Y2K Looms

  Y2K was short for "the year 2000 software problem" and was also called the Millennium Bug. The problem has been the subject of many books and news reports, and was discussed by Usenet users as early as 1985. The heart of the problem was the fear that computer programs would produce erroneous information or simply stop working because they stored years using only two digits. This meant the year 2000 would be represented as 00 and appear to the computer to be the year 1900.

  Government committees were set up to drive contingency plans around the Y2K issue that would help mitigate damage to crucial infrastructure such as utilities, telecommunications, banking and more. Although the failure of military systems was discussed in public forums, the danger there was minimal, as the closed systems were all Y2K compliant. Public fears of the Y2K "disaster" grew as the date approached.

  Although there was no Y2K disaster, either due to concerted efforts or simply because there never was a great danger, the issue brought benefits such as the proliferation of data backup systems and power contingency systems that protect businesses today. One of the few Y2K incidents was that the US timekeeper (USNO) reported the new year as 19100 on 01/01/2000.
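  The two-digit year problem described above can be illustrated with a minimal sketch. The function names are hypothetical and chosen only for illustration; the "windowing" fix with a pivot of 50 is one common remediation and is an assumption here, not something stated in this handout.

  ```python
  def naive_full_year(two_digit_year: int) -> int:
      """Expand a stored two-digit year by assuming the century is always 19xx."""
      return 1900 + two_digit_year


  def age_in_years(birth_yy: int, current_yy: int) -> int:
      """Compute an age directly from two stored two-digit years."""
      return current_yy - birth_yy


  def buggy_year_label(years_since_1900: int) -> str:
      """Build a year label by gluing the text '19' onto a years-since-1900 counter."""
      return "19" + str(years_since_1900)


  def windowed_full_year(two_digit_year: int, pivot: int = 50) -> int:
      """Assumed remediation: two-digit values below the pivot are read as 20xx."""
      if two_digit_year < pivot:
          return 2000 + two_digit_year
      return 1900 + two_digit_year


  if __name__ == "__main__":
      print(naive_full_year(0))        # the year 2000, stored as 00
      print(age_in_years(60, 0))       # born in '60, checked in '00
      print(buggy_year_label(100))     # the counter reaches 100 in the year 2000
      print(windowed_full_year(0), windowed_full_year(99))
  ```

  Run as a script, this prints 1900, -60, 19100 and then 2000 1999: the first two lines show the misread century, the third reproduces the kind of "19100" display mentioned above, and the last shows how a windowing rule reads 00 as 2000 while still reading 99 as 1999.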

- 9. 2001 – HDTV over an IP Network

  Level 3 Communications Inc. and the University of Southern California successfully demonstrated the first transmission of uncompressed real-time gigabit high-definition television over an Internet Protocol optical network. This demonstration proved that the technology they were using would support high-speed data streaming without packet differentiation. With no need for special packet handling, "HDTV anywhere" began to be discussed.

  INTRANET AND EXTRANET

  Introduction

  A key requirement in today's business environment is the ability to communicate more effectively, both internally with your employees and externally with your trading partners and customers.

  An intranet is a private, internal business network that enables your employees to share information, collaborate, and improve communications.

  An extranet enables your business to communicate and collaborate more effectively with selected business partners, suppliers and customers. An extranet can play an important role in enhancing business relationships and improving supply chain management.

  An intranet is a business's own private website. It is a private business network that uses the same underlying structure and network protocols as the internet and is protected from unauthorised users by a firewall.

- 10. Intranets enhance existing communication between employees, and provide a common knowledge base and storage area for everyone in your business. They also provide users with easy access to company data, systems and email from their desktops.

  Because intranets are secure and easily accessible via the internet, they enable staff to work from any location simply by using a web browser. This can help small businesses to be flexible and control office overheads by allowing employees to work from almost any location, including their home and customer sites.

  Other types of intranet are available that merge the regular features of intranets with those often found in software such as Microsoft Office. These are known as online offices or web offices. Creating a web office will allow you to organise and manage information and share documents and calendars using a familiar web browser, which is accessible from anywhere in the world.

  Types of content found on intranets:

  • administrative - calendars, emergency procedures, meeting room bookings, procedure manuals and membership of internal committees and groups
  • corporate - business plans, client/customer lists, document templates, branding guidelines, mission statements, press coverage and staff newsletters
  • financial - annual reports and organisational performance
  • IT - virus alerts, tips on dealing with problems with hardware, software and networks, policies on corporate use of email and internet access, and a list of online training courses and support
  • marketing - competitive intelligence, with links to competitor websites, corporate brochures, latest marketing initiatives, press releases, presentations
  • human resources - appraisal procedures and schedules, employee policies, expenses forms and annual leave requests, staff discount schemes, new vacancies
  • individual projects - current project details, team contact information, project management information, project documents, time and expense reporting

- 11. • external information resources - route planning and mapping sites, industry organisations, research sites and search engines.

  Benefits of intranets and extranets

  Your organisation's efficiency can be improved by using your intranet for:

  • publishing - delivering information and business news as directories and web documents
  • document management - viewing, printing and working collaboratively on office documents such as spreadsheets
  • training - accessing and delivering various types of e-learning to the user's desktop
  • workflow - automating a range of administrative processes
  • front-end to corporate systems - providing a common interface to corporate databases and business information systems
  • email - integrating intranet content with email services so that information can be distributed effectively

  The main benefits of an intranet are:

  • better internal communications - corporate information can be stored centrally and accessed at any time
  • sharing of resources and best practice - a virtual community can be created to facilitate information sharing and collaborative working
  • improved customer service - better access to accurate and consistent information by your staff leads to enhanced levels of customer service
  • reduction in paperwork - forms can be accessed and completed on the desktop, and then forwarded as appropriate for approval, without ever having to be printed out, and with the benefit of an audit trail

- 12. It is a good idea to give your intranet a different image and structure from your customer-facing website. This will help to give your internal communications their own identity and prevent employees from confusing internal and external information.

  BENEFITS OF INTERNET IN RELATION TO:

  ECONOMICS:

  The reasons most often cited by communities for establishing their own local access networks are economic development and the need of local businesses for high-speed Internet services. The system requirements were that it must provide broadband virtual private networks (VPN) and high-speed Internet access for municipal facilities, emergency and public services, businesses and industrial spaces.

  One of the more recent studies on the economic impact of mass-market broadband was conducted on a range of communities across the United States, comparing indicators of economic activity that included employment, wages and industry mix (Lehr, Osorio, Gillett and Sirbu, 2004). The researchers found that, between 1998 and 2002, those U.S. communities where broadband had been deployed since December 1999 experienced more rapid growth in employment, in the number of businesses overall, and in businesses in IT-intensive sectors. As noted by the authors, "… the early results presented here suggest that the assumed (and oft-touted) economic impacts of broadband are both real and measurable."[7]

  The major reason that high-speed Internet access is believed to benefit local communities, particularly in rural areas, is its impact on the competitiveness of businesses: it increases productivity, extends market reach and reduces costs. Until recently, little research had been done to analyse and document the underlying reasons why businesses are positively affected by high-speed Internet access.

  There are two studies, one from Cornwall, England and the other from British Columbia, Canada, which have shown remarkably similar results.

- 13. Cornwall is a relatively isolated area that is provided with high-speed Internet access by actnow, a public-private partnership that was established in 2002 by Cornwall Enterprise to promote economic development in the region. In April 2005, an online survey of companies served by actnow was undertaken. The main findings of the study were:

  "Their answers suggest that broadband is generally benefiting enterprises, individuals, the Cornish economy, society and the natural environment by, for example, extending market reach and impact, making organisational working practices more efficient, enabling staff to work flexibly, and substituting travel and meetings with electronic communication. In particular:

  • Over 94% of respondents report positive overall impacts from broadband, with 68% stating that they are highly positive.
  • A large majority of respondents feel that broadband has positive impacts on business performance (91%), relationships with customers (87%), and the job satisfaction and skills of staff (74%).
  • 90% of respondents expect to get continued benefits – and 45% considerable benefits - from broadband." [8]

  The Peace River and South Similkameen regions are located in remote parts of British Columbia, Canada. The area is served by the Peace Region Internet Society, a not-for-profit organisation established in 1994 to provide affordable access to the Internet for individuals, businesses, and organizations in the Peace Region of Northern British Columbia. In 2005, an online survey of customers was undertaken to measure the economic impact of high-speed Internet access in these communities. The major findings were:

  "For most businesses in the communities we studied, broadband is an important factor in remaining competitive. Broadband allows businesses to be more productive, to identify and respond to opportunities faster, and to meet the expectations of customers, partners, and suppliers.

  • Over 80 per cent of business respondents reported that broadband positively affected their businesses. Over 18 per cent stated they could not operate without broadband;
  • 62 per cent of businesses say productivity has gone up with a majority citing an increase of more than 10 per cent;

- 14. • Many businesses reported increased revenue and/or decreased costs due to broadband connectivity."[9]

  The two studies show a high degree of similarity in their responses, with businesses in both areas reporting that high-speed Internet access has positively affected them through increased productivity and overall efficiency. The research demonstrates that high-speed Internet connectivity is important for keeping businesses in rural and remote areas competitive, and that without it they would be at a severe competitive disadvantage.

  POLITICAL:

  Theoretical Background: Inclusion in or Exclusion from the Political System?

  Some years ago Dick Morris, one of Bill Clinton's spin doctors, saw the United States on the way from James Madison towards Thomas Jefferson, from representative democracy to direct democracy, with the Internet serving as a catalyst (Morris 1999). Al Gore and others tried to "re-invent governance" by means of the Internet, thus creating some sort of "new Athenian age" of democracy (Gore 1995). Today such enthusiasm seems somewhat exaggerated. The Internet fell short of many expectations, and not only economically. Now we have the chance to take a restrained look at the Internet and weigh the costs and benefits of online communication, even in the field of politics.

  First we want to specify the three levels on which an impact of the Internet in this field may be considered:

  • a macro-level approach considers the use of the Internet by states ("e-governance")
  • a meso-level approach considers the use of the Internet by political organizations ("virtual parties")
  • a micro-level approach considers the use of the Internet by individual citizens ("e-democracy")

  In our presentation we deal only with the micro-level consequences of Internet usage. Our focus is on the individual activities people undertake to communicate politically. This includes the reception of political media as well as talking about politics and showing one's own opinion in public (in a demonstration, for example).

  What are the common assumptions about the consequences of Internet usage for political communication? The literature provides a first position that ascribes a "mobilizing function" to the Internet (Schwartz 1996; Rheingold 1994; Grossman 1995). This position expects a higher frequency and a higher intensity of political communication among Internet users than among non-users. It also expects that the Internet may include even those parts of the population who can't be reached by

- 15. traditional channels of political communication. The public and scientific discussion is dominated by this "hypothesis of inclusion": the Internet intensifies political communication and thus leads to a growing integration of citizens into the political system.

  EDUCATIONAL:

  The internet provides a powerful resource for learning, as well as an efficient means of communication. Its use in education can provide a number of specific learning benefits, including the development of:

  • independent learning and research skills, such as improved access to subject learning across a wide range of learning areas, as well as in integrated or cross-curricular studies; and
  • communication and collaboration, such as the ability to use learning technologies to access resources, create resources and communicate with others.

  Access to resources

  The internet is a huge repository of learning material. As a result, it significantly expands the resources available to students beyond the standard print materials found in school libraries. It gives students access to the latest reports on government and non-government websites, including research results, scientific and artistic resources in museums and art galleries, and other organisations with information applicable to student learning. At secondary schooling levels, the internet can be used for undertaking reasonably sophisticated research projects. The internet is also a time-efficient tool for a teacher that expands the possibilities for curriculum development.

  Learning depends on the ability to find relevant and reliable information quickly and easily, and to select, interpret and evaluate that information. Searching for information on the internet can help to develop these skills. Classroom exercises and take-home assessment tasks, where students are required to compare and contrast website content, are ideal for alerting students to the requirements of writing for different audiences, the purpose of particular content, identifying bias and judging accuracy and reliability. Since many sites adopt particular views about issues, the internet is a useful mechanism for

- 16. developing the skills of distinguishing fact from opinion and exploring subjectivity and objectivity.

  Communication and collaboration

  The internet is a powerful tool for developing students' communication and collaboration skills. Above all, the internet is an effective means of building language skills. Through email, chat rooms and discussion groups, students learn the basic principles of communication in the written form. This gives teachers the opportunity to incorporate internet-based activities into mainstream literacy programs and bring diversity to their repertoires of teaching strategies. For example, website publishing can be a powerful means of generating enthusiasm for literacy units, since most students are motivated by the prospect of having their work posted on a website for public access.

  Collaborative projects can be designed to enhance students' literacy skills, mainly through email messaging with their peers from other schools or even other countries. Collaborative projects are also useful for engaging students and providing meaningful learning experiences. In this way, the internet becomes an effective means of advancing intercultural understanding. Moderated chat rooms and group projects can also provide students with opportunities for collaborative learning.

  Numerous protocols govern use of the internet. Learning these protocols and how to adhere to them helps students understand the rule-based society in which they live and to treat others with respect and decency. The internet also contributes to students' broader understanding of information and communication technologies (ICT) and their centrality to the information economy and society as a whole.

  Culture: Two definitions

  1: High culture

  The best literature, art, music and film that exists. Societies are often "defined" by their high culture. Problem: who decides what is best? Are there any timeless rules?

  2: Culture as lived experience

  Culture is the ordinary, everyday social world around us.

  Is there a single culture?

  • In the early part of the 20th century people would talk about "national culture"

- 17. • With the rise of global media and the deregulation of information flows, people are more likely to talk about "cultures" within a nation based on age, ethnicity, interests and gender.
  • There are many cultures, and an individual may belong to more than one.
  • A key issue is that some groups may have more "power" than others to affect people's lives.

  HARDWARE REQUIREMENT FOR INTERNET CONNECTIVITY

  The term modem is a contraction of "modulator-demodulator". In simple terms, a modem enables users to connect their computer to the internet and to transmit and receive data across it. It is the key that unlocks the doors of the world of the Internet for the user.

  Modems can be of various types. They may be hardware, software or controllerless. The most widely used modems are hardware modems. There are three main types of hardware modem. All three work the same way, but each has its advantages and disadvantages, which are discussed here.

  The oldest and simplest type of hardware modem is the external modem. External modems are the earliest modems and have been in use for more than 25 years. As the name suggests, they reside outside the main computer. They are therefore easy to install, as the computer need not be opened. They connect to a computer's serial port through a cable, while the telephone line is plugged into a socket on the modem.

  One advantage of external modems is that they have their own power supply, so the modem can be turned off to break an online connection quickly without powering down the computer. Another advantage of the separate power supply is that the modem does not drain any power from the computer. The main disadvantage is that external modems are more expensive than internal modems.

  Internal modems, on the other hand, come installed in the computer you buy. They are integrated into the computer system, mostly installed on the motherboard, and hence do not need any special attention. Internal modems are activated when you run a dialer program to access the internet, and the modem can be turned off when you exit the program. This can be very convenient. The major disadvantage with internal modems

- 18. is their location. When you want to replace an internal modem you have to go inside the computer case to change it. Internal modems usually cost less than external modems, but the price difference is usually small.

  The third significant type of hardware modem is the PC Card modem. These modems are mainly designed for portable computers. They are the size of a credit card and fit into the PC Card slot on notebook and handheld computers. These modem cards can be removed when the modem is no longer needed. Apart from their size, PC Card modems combine the characteristics of both external and internal modems. They plug directly into an external slot in the portable computer, so no cable is required as with external modems. The only other requirement is the telephone line connection. The only disadvantage is that the portable computer has to power the PC Card modem, so running one while the computer is operating on battery power drastically decreases the life of your batteries.

  Overall, you may have an external modem, an internal modem or a PC Card modem as per your requirements. The modem you choose should depend upon how your computer is configured and on your preferences for accessing the internet.

  History

  News wire services in the 1920s used multiplex equipment that met the definition, but the modem function was incidental to the multiplexing function, so they are not commonly included in the history of modems. Modems grew out of the need to connect teletype machines over ordinary phone lines instead of the more expensive leased lines which had previously been used for current loop-based teleprinters and automated telegraphs. George Stibitz connected a New Hampshire teletype to a computer in New York City by a subscriber telephone line in 1940.

- 19. Anderson-Jacobsen brand Bell 101 dataset 110 baud RS-232 modem, in original wood chassis Mass-produced modems in the United States began as part of the SAGE air-defense system in 1958, connecting terminals at various airbases, radar sites, and command-and- control centers to the SAGE director centers scattered around the U.S. and Canada. SAGE modems were described by AT&T's Bell Labs as conforming to their newly published Bell 101 dataset standard. While they ran on dedicated telephone lines, the devices at each end were no different from commercial acoustically coupled Bell 101, 110 baud modems. In the summer of 1960, the name Data-Phone was introduced to replace the earlier term digital subset. The 202 Data-Phone was a half-duplex asynchronous service that was marketed extensively in late 1960. In 1962, the 201A and 201B Data-Phones were introduced. They were synchronous modems using two-bit-per-baud phase-shift keying (PSK). The 201A operated half-duplex at 2000 bit/s over normal phone lines, while the 201B provided full duplex 2400 bit/s service on four-wire leased lines, the send and receive channels running on their own set of two wires each. The famous Bell 103A dataset standard was also introduced by Bell Labs in 1962. It provided full-duplex service at 300 baud over normal phone lines. Frequency-shift keying was used with the call originator transmitting at 1070 or 1270 Hz and the answering modem transmitting at 2025 or 2225 Hz. The readily available 103A2 gave an important boost to the use of remote low-speed terminals such as the KSR33, the ASR33, and the IBM 2741. AT&T reduced modem costs by introducing the originate-only 113D and the answer-only 113B/C modems. The Carterfone decision The Novation CAT acoustically coupled modem 19

- 20. For many years before 1968, the Bell System (AT&T) maintained a monopoly in the United States on the use of its phone lines, allowing only Bell-supplied devices to be electrically connected to its network. This led to a market for 103A-compatible modems that were mechanically connected to the phone, through the handset, known as acoustically coupled modems. Particularly common models from the 1970s were the Novation CAT and the Anderson-Jacobson, spun off from an in-house project at the Lawrence Livermore National Laboratory. Hush-a-Phone v. FCC was a seminal ruling in United States telecommunications law decided by the DC Circuit Court of Appeals on November 8, 1956. The court found that it was within the FCC's authority to regulate the terms of use of AT&T's equipment. Subsequently, the FCC examiner found that as long as the device was not physically attached it would not threaten to degrade the system. Later, in the Carterfone decision, the FCC passed a rule setting stringent AT&T-designed tests for electronically coupling a device to the phone lines. AT&T made these tests complex and expensive, so acoustically coupled modems remained common into the early 1980s. In December 1972, Vadic introduced the VA3400. This device was remarkable because it provided full duplex operation at 1200 bit/s over the dial network, using methods similar to those of the 103A in that it used different frequency bands for transmit and receive. In November 1976, AT&T introduced the 212A modem to compete with Vadic. It was similar in design to Vadic's model, but used the lower frequency set for transmission. It was also possible to use the 212A with a 103A modem at 300 bit/s. According to Vadic, the change in frequency assignments made the 212 intentionally incompatible with acoustic coupling, thereby locking out many potential modem manufacturers. 
In 1977, Vadic responded with the VA3467 triple modem, an answer-only modem sold to computer center operators that supported Vadic's 1200-bit/s mode, AT&T's 212A mode, and 103A operation. 20

- 21. The Smartmodem and the rise of BBSes US Robotics Sportster 14, 400 Fax modem (1994) The next major advance in modems was the Smartmodem, introduced in 1981 by Hayes Communications. The Smartmodem was an otherwise standard 103A 300-bit/s modem, but was attached to a small controller that let the computer send commands to it and enable it to operate the phone line. The command set included instructions for picking up and hanging up the phone, dialing numbers, and answering calls. The basic Hayes command set remains the basis for computer control of most modern modems. Prior to the Hayes Smartmodem, modems almost universally required a two-step process to activate a connection: first, the user had to manually dial the remote number on a standard phone handset, and then secondly, plug the handset into an acoustic coupler. Hardware add-ons, known simply as dialers, were used in special circumstances, and generally operated by emulating someone dialing a handset. With the Smartmodem, the computer could dial the phone directly by sending the modem a command, thus eliminating the need for an associated phone for dialing and the need for an acoustic coupler. The Smartmodem instead plugged directly into the phone line. This greatly simplified setup and operation. Terminal programs that maintained lists of phone numbers and sent the dialing commands became common. The Smartmodem and its clones also aided the spread of bulletin board systems (BBSs). Modems had previously been typically either the call-only, acoustically coupled models used on the client side, or the much more expensive, answer-only models used on the server side. The Smartmodem could operate in either mode depending on the commands sent from the computer. There was now a low-cost server-side modem on the market, and the BBSs flourished. 21

- 22. Softmodem (dumb modem) Main article: Softmodem Apple's GeoPort modems from the second half of the 1990s were similar. Although a clever idea in theory, enabling the creation of more-powerful telephony applications, in practice the only programs created were simple answering-machine and fax software, hardly more advanced than their physical-world counterparts, and certainly more error- prone and cumbersome. The software was finicky and ate up significant processor time, and no longer functions in current operating system versions. Almost all modern modems also do double-duty as a fax machine as well. Digital faxes, introduced in the 1980s, are simply a particular image format sent over a high-speed (commonly 14.4 kbit/s) modem. Software running on the host computer can convert any image into fax-format, which can then be sent using the modem. Such software was at one time an add-on, but since has become largely universal. A PCI Winmodem/Softmodem (on the left) next to a traditional ISA modem (on the right). Notice the less complex circuitry of the modem on the left. A Winmodem or Softmodem is a stripped-down modem that replaces tasks traditionally handled in hardware with software. In this case the modem is a simple digital signal processor designed to create sounds, or voltage variations, on the telephone line. Softmodems are cheaper than traditional modems, since they have fewer hardware components. One downside is that the software generating the modem tones is not simple, and the performance of the computer as a whole often suffers when it is being used. For online gaming this can be a real concern. Another problem is lack of portability such that other OSes (such as Linux) may not have an equivalent driver to operate the modem. A Winmodem might not work with a later version of Microsoft Windows, if its driver turns out to be incompatible with that later version of the operating system. 22

- 23. Narrowband/phone-line dialup modems A standard modem of today contains two functional parts: an analog section for generating the signals and operating the phone, and a digital section for setup and control. This functionality is actually incorporated into a single chip, but the division remains in theory. In operation the modem can be in one of two "modes", data mode in which data is sent to and from the computer over the phone lines, and command mode in which the modem listens to the data from the computer for commands, and carries them out. A typical session consists of powering up the modem (often inside the computer itself) which automatically assumes command mode, then sending it the command for dialing a number. After the connection is established to the remote modem, the modem automatically goes into data mode, and the user can send and receive data. When the user is finished, the escape sequence, "+++" followed by a pause of about a second, is sent to the modem to return it to command mode, and the command ATH to hang up the phone is sent. The commands themselves are typically from the Hayes command set, although that term is somewhat misleading. The original Hayes commands were useful for 300 bit/s operation only, and then extended for their 1200 bit/s modems. Faster speeds required new commands, leading to a proliferation of command sets in the early 1990s. Things became considerably more standardized in the second half of the 1990s, when most modems were built from one of a very small number of "chip sets". We call this the Hayes command set even today, although it has three or four times the numbers of commands as the actual standard. 23
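The session described above (command mode, dial, data mode, escape, hang up) can be sketched as a small state machine. This is only a toy model: the class name `ToyModem`, its `write` method and the replies it returns are assumptions made for illustration, no real serial port or modem is involved, and the one-second guard pause around "+++" is not modelled.

```python
class ToyModem:
    """Toy model of the two modem modes; not a real modem driver."""

    def __init__(self):
        self.mode = "command"    # a modem powers up in command mode
        self.connected = False
        self.sent = []           # payload that would go out over the phone line

    def write(self, text):
        if self.mode == "data":
            if text == "+++":    # escape sequence returns to command mode
                self.mode = "command"
                return "OK"
            self.sent.append(text)   # anything else is treated as payload
            return ""
        # Command mode: interpret a tiny subset of Hayes-style commands.
        if text.startswith("ATD"):   # dial the number that follows
            self.connected = True
            self.mode = "data"       # switch to data mode once connected
            return "CONNECT"
        if text == "ATH":            # hang up the phone
            self.connected = False
            return "OK"
        return "ERROR"


modem = ToyModem()
replies = [modem.write(t) for t in ("ATD5551234", "hello", "+++", "ATH")]
print(replies)  # ['CONNECT', '', 'OK', 'OK']
```

Note that the same bytes mean different things depending on the mode: "hello" is payload in data mode but would be rejected as an unknown command in command mode.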

- 24. Increasing speeds (V.21 V.22 V.22bis) A 2400 bit/s modem for a laptop. The 300 bit/s modems used frequency-shift keying to send data. In this system the stream of 1s and 0s in computer data is translated into sounds which can be easily sent on the phone lines. In the Bell 103 system the originating modem sends 0s by playing a 1070 Hz tone, and 1s at 1270 Hz, with the answering modem putting its 0s on 2025 Hz and 1s on 2225 Hz. These frequencies were chosen carefully, they are in the range that suffer minimum distortion on the phone system, and also are not harmonics of each other. In the 1200 bit/s and faster systems, phase-shift keying was used. In this system the two tones for any one side of the connection are sent at the similar frequencies as in the 300 bit/s systems, but slightly out of phase. By comparing the phase of the two signals, 1s and 0s could be pulled back out, for instance if the signals were 90 degrees out of phase, this represented two digits, "1, 0", at 180 degrees it was "1, 1". In this way each cycle of the signal represents two digits instead of one. 1200 bit/s modems were, in effect, 600 symbols per second modems (600 baud modems) with 2 bits per symbol. Voiceband modems generally remained at 300 and 1200 bit/s (V.21 and V.22) into the mid 1980s. A V.22bis 2400-bit/s system similar in concept to the 1200-bit/s Bell 212 signalling was introduced in the U.S., and a slightly different one in Europe. By the late 1980s, most modems could support all of these standards and 2400-bit/s operation was becoming common. 24
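The Bell 103 tone assignments and the relation between baud rate and bit rate given above can be written out as a short sketch. The frequencies are the ones quoted in the text; the names `BELL_103_TONES`, `fsk_tones` and `bit_rate` are hypothetical helpers introduced only for this example.

```python
# Bell 103 tone assignments from the text (Hz), keyed by role and bit value.
BELL_103_TONES = {
    "originate": {0: 1070, 1: 1270},
    "answer": {0: 2025, 1: 2225},
}


def fsk_tones(bits, role):
    """Return the tone frequency sent for each bit (frequency-shift keying)."""
    return [BELL_103_TONES[role][b] for b in bits]


def bit_rate(baud, bits_per_symbol):
    """Bit rate in bit/s = symbols per second times bits per symbol."""
    return baud * bits_per_symbol


print(fsk_tones([1, 0, 1], "originate"))  # [1270, 1070, 1270]
print(fsk_tones([1, 0, 1], "answer"))     # [2225, 2025, 2225]
print(bit_rate(600, 2))                   # 1200, the 1200 bit/s PSK case
```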

- 25. For more information on baud rates versus bit rates, see the companion article List of device bandwidths. Increasing speeds (one-way proprietary standards) Many other standards were also introduced for special purposes, commonly using a high- speed channel for receiving, and a lower-speed channel for sending. One typical example was used in the French Minitel system, in which the user's terminals spent the majority of their time receiving information. The modem in the Minitel terminal thus operated at 1200 bit/s for reception, and 75 bit/s for sending commands back to the servers. Three U.S. companies became famous for high-speed versions of the same concept. Telebit introduced its Trailblazer modem in 1984, which used a large number of 36 bit/s channels to send data one-way at rates up to 18,432 bit/s. A single additional channel in the reverse direction allowed the two modems to communicate how much data was waiting at either end of the link, and the modems could change direction on the fly. The Trailblazer modems also supported a feature that allowed them to "spoof" the UUCP "g" protocol, commonly used on Unix systems to send e-mail, and thereby speed UUCP up by a tremendous amount. Trailblazers thus became extremely common on Unix systems, and maintained their dominance in this market well into the 1990s. U.S. Robotics (USR) introduced a similar system, known as HST, although this supplied only 9600 bit/s (in early versions at least) and provided for a larger backchannel. Rather than offer spoofing, USR instead created a large market among Fidonet users by offering its modems to BBS sysops at a much lower price, resulting in sales to end users who wanted faster file transfers. Hayes was forced to compete, and introduced its own 9600- bit/s standard, Express 96 (also known as "Ping-Pong"), which was generally similar to Telebit's PEP. Hayes, however, offered neither protocol spoofing nor sysop discounts, and its high-speed modems remained rare. 
4800 and 9600 (V.27ter, V.32) Echo cancellation was the next major advance in modem design. Local telephone lines use the same wires to send and receive, which results in a small amount of the outgoing signal bouncing back. This signal can confuse the modem. Is the signal it is "hearing" a data transmission from the remote modem, or its own transmission bouncing back? This was why earlier modems split the signal frequencies into answer and originate; each 25

- 26. modem simply didn't listen to its own transmitting frequencies. Even with improvements to the phone system allowing higher speeds, this splitting of available phone signal bandwidth still imposed a half-speed limit on modems. Echo cancellation got around this problem. Measuring the echo delays and magnitudes allowed the modem to tell if the received signal was from itself or the remote modem, and create an equal and opposite signal to cancel its own. Modems were then able to send at "full speed" in both directions at the same time, leading to the development of 4800 and 9600 bit/s modems. Increases in speed have used increasingly complicated communications theory. 1200 and 2400 bit/s modems used the phase shift key (PSK) concept. This could transmit two or three bits per symbol. The next major advance encoded four bits into a combination of amplitude and phase, known as Quadrature Amplitude Modulation (QAM). Best visualized as a constellation diagram, the bits are mapped onto points on a graph with the x (real) and y (quadrature) coordinates transmitted over a single carrier. The new V.27ter and V.32 standards were able to transmit 4 bits per symbol, at a rate of 1200 or 2400 baud, giving an effective bit rate of 4800 or 9600 bits per second. The carrier frequency was 1650 Hz. For many years, most engineers considered this rate to be the limit of data communications over telephone networks. Error correction and compression Operations at these speeds pushed the limits of the phone lines, resulting in high error rates. This led to the introduction of error-correction systems built into the modems, made most famous with Microcom's MNP systems. A string of MNP standards came out in the 1980s, each increasing the effective data rate by minimizing overhead, from about 75% theoretical maximum in MNP 1, to 95% in MNP 4. 
The new method called MNP 5 took this a step further, adding data compression to the system, thereby increasing the data rate above the modem's rating. Generally the user could expect an MNP5 modem to transfer at about 130% the normal data rate of the modem. MNP was later "opened" and became popular on a series of 2400-bit/s modems, and ultimately led to the development of V.42 and V.42bis ITU standards. V.42 and V.42bis were non-compatible with MNP but were similar in concept: Error correction and compression. 26
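The QAM idea described on this page, four bits carried by one combination of amplitude and phase, can be sketched as follows. This is a minimal illustration and not the actual V.32 constellation: the `LEVELS` grid and the function names `qam16_point` and `modulate` are assumptions made for the example. The last lines redo the rate arithmetic quoted in the text.

```python
LEVELS = [-3, -1, 1, 3]  # illustrative amplitude levels on each axis


def qam16_point(bits):
    """Map 4 bits to an (in-phase, quadrature) point on a 4x4 grid."""
    if len(bits) != 4:
        raise ValueError("this sketch carries exactly 4 bits per symbol")
    in_phase = LEVELS[bits[0] * 2 + bits[1]]     # first two bits pick x
    quadrature = LEVELS[bits[2] * 2 + bits[3]]   # last two bits pick y
    return (in_phase, quadrature)


def modulate(bits):
    """Split a bit list into 4-bit groups and map each group to a point."""
    return [qam16_point(bits[k:k + 4]) for k in range(0, len(bits), 4)]


symbols = modulate([0, 0, 1, 1, 1, 0, 0, 1])
print(symbols)                  # [(-3, 3), (1, -1)]

v32_rate = 2400 * 4             # 2400 baud at 4 bits per symbol
mnp5_rate = 2400 * 130 // 100   # a 2400 bit/s modem at about 130% with MNP 5
print(v32_rate, mnp5_rate)      # 9600 3120
```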

- 27. Another common feature of these high-speed modems was the concept of fallback, allowing them to talk to less-capable modems. During the call initiation the modem would play a series of signals into the line and wait for the remote modem to "answer" them. They would start at high speeds and progressively get slower and slower until they heard an answer. Thus, two USR modems would be able to connect at 9600 bit/s, but, when a user with a 2400-bit/s modem called in, the USR would "fall back" to the common 2400-bit/s speed. This would also happen if a V.32 modem and a HST modem were connected. Because they used a different standard at 9600 bit/s, they would fall back to their highest commonly supported standard at 2400 bit/s. The same applies to V.32bis and 14400 bit/s HST modem, which would still be able to communicate with each other at only 2400 bit/s. Breaking the 9.6k barrier In 1980 Gottfried Ungerboeck from IBM Zurich Research Laboratory applied powerful channel coding techniques to search for new ways to increase the speed of modems. His results were astonishing but only conveyed to a few colleagues. Finally in 1982, he agreed to publish what is now a landmark paper in the theory of information coding. By applying powerful parity check coding to the bits in each symbol, and mapping the encoded bits into a two dimensional "diamond pattern", Ungerboeck showed that it was possible to increase the speed by a factor of two with the same error rate. The new technique was called "mapping by set partitions" (now known as trellis modulation). Error correcting codes, which encode code words (sets of bits) in such a way that they are far from each other, so that in case of error they are still closest to the original word (and not confused with another) can be thought of as analogous to sphere packing or packing pennies on a surface: the greater two bit sequences are from one another, the easier it is to correct minor errors. 
The industry was galvanized into new research and development. More powerful coding techniques were developed, commercial firms rolled out new product lines, and the standards organizations rapidly adopted the new technology. The "tipping point" occurred with the introduction of the SupraFAXModem 14400 in 1991. Rockwell had introduced a new chipset supporting not only V.32 and MNP, but the newer 14,400 bit/s V.32bis and the higher-compression V.42bis as well, and even included 9600 bit/s fax capability.

- 28. Supra, then known primarily for their hard drive systems, used this chipset to build a low- priced 14, 400 bit/s modem which cost the same as a 2400 bit/s modem from a year or two earlier (about US$300). The product was a runaway best-seller, and it was months before the company could keep up with demand. V.32bis was so successful that the older high-speed standards had little to recommend them. USR fought back with a 16, 800 bit/s version of HST, while AT&T introduced a one-off 19, 200 bit/s method they referred to as V.32ter (also known as V.32 terbo), but neither non-standard modem sold well. V.34 / 28.8k and 33.6k An ISA modem manufactured to conform to the V.34 protocol. Any interest in these systems was destroyed during the lengthy introduction of the 28, 800 bit/s V.34 standard. While waiting, several companies decided to "jump the gun" and introduced modems they referred to as "V.FAST". In order to guarantee compatibility with V.34 modems once the standard was ratified (1994), the manufacturers were forced to use more "flexible" parts, generally a DSP and microcontroller, as opposed to purpose- designed "modem chips". Today the ITU standard V.34 represents the culmination of the joint efforts. It employs the most powerful coding techniques including channel encoding and shape encoding. From the mere 4 bits per symbol (9.6 kbit/s), the new standards used the functional equivalent of 6 to 10 bits per symbol, plus increasing baud rates from 2400 to 3429, to create 14.4, 28.8, and 33.6 kbit/s modems. This rate is near the theoretical Shannon limit. 28

- 29. When calculated, the Shannon capacity of a narrowband line is Bandwidth × log2(1 + Pu / Pn), with Pu / Pn the signal-to-noise ratio. Narrowband phone lines have a bandwidth from 300 to 3100 Hz (2800 Hz), so using Pu / Pn = 10,000 the capacity is approximately 37 kbit/s. Without the discovery and eventual application of trellis modulation, maximum telephone rates would have been limited to 3429 baud × 4 bit/symbol, or approximately 14 kbit/s, using traditional QAM. Using digital lines and PCM (V.90/92) In the late 1990s Rockwell and U.S. Robotics introduced new technology based upon the digital transmission used in modern telephony networks. The standard digital transmission in modern networks is 64 kbit/s, but some networks use a part of the bandwidth for remote office signaling (e.g. to hang up the phone), limiting the effective rate to 56 kbit/s DS0. This new technology was adopted into the ITU standard V.90 and is common in modern computers. The 56 kbit/s rate is only possible from the central office to the user site (downlink), and in the United States government regulation of maximum power output limits the rate to 53.3 kbit/s. The uplink (from the user to the central office) still uses V.34 technology at 33.6 kbit/s. Later, in V.92, the digital PCM technique was applied to increase the upload speed to a maximum of 48 kbit/s, but at the expense of download rates. For example, a 48 kbit/s upstream rate would reduce the downstream to as low as 40 kbit/s, due to echo on the telephone line. To avoid this problem, V.92 modems offer the option to turn off the digital upstream and instead use a 33.6 kbit/s analog connection, in order to maintain a high digital downstream of 50 kbit/s or higher. (See November and October 2000 update at http://www.modemsite.com/56k/v92s.asp ) V.92 also adds two other features. The first is the ability for users who have call waiting to put their dial-up Internet connection on hold for extended periods of time while they answer a call. 
The second feature is the ability to "quick connect" to one's ISP. This is achieved by remembering the analog and digital characteristics of the telephone line, and using this saved information to reconnect at a fast pace. 29
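The Shannon capacity calculation quoted on this page can be checked with a few lines of code. This is a sketch using only the figures from the text (a 300 to 3100 Hz band and a signal-to-noise ratio of 10,000); the function name `shannon_capacity` is introduced here for illustration. With these inputs the formula evaluates to roughly 37 kbit/s, the same order as the approximate value given in the text.

```python
import math


def shannon_capacity(bandwidth_hz, snr):
    """Channel capacity in bit/s: bandwidth * log2(1 + signal-to-noise ratio)."""
    return bandwidth_hz * math.log2(1 + snr)


bandwidth = 3100 - 300                  # usable voice band in Hz
capacity = shannon_capacity(bandwidth, 10_000)
print(round(capacity / 1000, 1))        # 37.2 (kbit/s)

# Plain QAM ceiling mentioned in the text: 3429 baud at 4 bits per symbol.
qam_limit = 3429 * 4
print(qam_limit)                        # 13716 bit/s, about 14 kbit/s
```

The 33.6 kbit/s V.34 rate sits just below this capacity, which is why the text describes it as near the Shannon limit.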

- 30. Using compression to exceed 56k Today's V.42, V.42bis and V.44 standards allow the modem to transmit data faster than its basic rate would imply. For instance, a 53.3 kbit/s connection with V.44 can transmit up to 53.3 × 6 ≈ 320 kbit/s using pure text. However, the compression ratio tends to vary due to noise on the line, or due to the transfer of already-compressed files (ZIP files, [2] JPEG images, MP3 audio, MPEG video). At some points the modem will be sending compressed files at approximately 50 kbit/s, uncompressed files at 160 kbit/s, and pure text at 320 kbit/s, or any value in between. [3] In such situations a small amount of memory in the modem, a buffer, is used to hold the data while it is being compressed and sent across the phone line, but in order to prevent overflow of the buffer, it sometimes becomes necessary to tell the computer to pause the datastream. This is accomplished through hardware flow control using extra lines on the modem–computer connection. The computer is then set to supply the modem at some higher rate, such as 320 kbit/s, and the modem will tell the computer when to start or stop sending data. Compression by the ISP As telephone-based 56k modems began losing popularity, some Internet Service Providers such as Netzero and Juno started using pre-compression to increase throughput and maintain their customer base. For example, the Netscape ISP uses a compression program that squeezes images, text, and other objects at the server, just prior to sending them across the phone line. The server-side compression operates much more efficiently than the "on-the-fly" compression of V.44-enabled modems. Typically website text is compacted to 4% of its original size, thus increasing effective throughput to approximately 1300 kbit/s. The accelerator also pre-compresses Flash executables and images to approximately 30% and 12%, respectively. 
The drawback of this approach is a loss in quality, where the graphics become heavily compacted and smeared, but the speed is dramatically improved such that web pages load in less than 5 seconds, and the user can manually choose to view the uncompressed images at any time. The ISPs employing this approach advertise it as "DSL speeds over regular phone lines" or simply "high speed dial-up". 30
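The throughput figures quoted for compression follow from simple arithmetic on the 53.3 kbit/s line rate. The sketch below reproduces them using integer bit/s values to avoid rounding surprises; the helper name `effective_bps` is an assumption made for this example, and real throughput varies with the content being sent.

```python
LINE_BPS = 53_300  # V.90 downlink rate quoted in the text, in bit/s


def effective_bps(line_bps, percent_of_original):
    """Effective rate when data shrinks to the given percentage of its size."""
    return line_bps * 100 // percent_of_original


print(LINE_BPS * 6)                 # 319800: V.44 on pure text, about 320 kbit/s
print(effective_bps(LINE_BPS, 4))   # 1332500: text compacted to 4%, about 1300 kbit/s
print(effective_bps(LINE_BPS, 30))  # 177666: Flash compacted to 30%
print(effective_bps(LINE_BPS, 12))  # 444166: images compacted to 12%
```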

- 31. FUNCTIONS In addition to converting digital signals into analog signals, modems carry out many other tasks. Modems minimize the errors that occur during the transmission of signals. They can also compress the data sent via signals, and they regulate the flow of information sent over a network. • Error Correction: In this process the modem checks that the information it receives is undamaged. Modems that perform error correction divide the information into packets called frames. Before sending a frame, the sending modem tags it with a checksum, a short value computed from the frame's contents that lets the receiver detect damage. The receiving modem recomputes the checksum and verifies that it matches the one sent with the frame. If it fails to match, the frame is sent again. • Compressing the Data: To compress the data, the modem groups bits together so that the same information can be sent using fewer bits. • Flow Control: Different modems vary in their speed of sending signals, which creates problems in receiving the signals if one of the modems is slower. In the flow control mechanism, the slower modem signals the faster one to pause by sending a special character. When it is ready to catch up with the faster modem, it sends a different character, which resumes the flow of signals. WiFi and WiMax Wireless data modems are used in the WiFi and WiMax standards, operating at microwave frequencies. WiFi is principally used in laptops for Internet connections (wireless access point) and wireless application protocol (WAP). Mobile modems and routers Modems which use mobile phone lines (GPRS, UMTS, HSPA, EVDO, WiMax, etc.) are known as cellular modems. Cellular modems can be embedded inside a laptop or appliance, or they can be external to it. External cellular modems are datacards and

- 32. cellular routers. The datacard is a PC card or ExpressCard which slides into a PCMCIA/PC card/ExpressCard slot on a computer. The most famous brand of cellular modem datacards is the AirCard made by Sierra Wireless. (Many people just refer to all makes and models as "AirCards", when in fact this is a trademarked brand name.) Nowadays, there are USB cellular modems as well that use a USB port on the laptop instead of a PC card or ExpressCard slot. A cellular router may or may not have an external datacard ("AirCard") that slides into it. Most cellular routers do allow such datacards or USB modems, except for the WAAV, Inc. CM3 mobile broadband cellular router. Cellular Routers may not be modems per se, but they contain modems or allow modems to be slid into them. The difference between a cellular router and a cellular modem is that a cellular router normally allows multiple people to connect to it (since it can "route"), while the modem is made for one connection. Most of the GSM cellular modems come with an integrated SIM cardholder (i.e, Huawei E220, Sierra 881, etc.) The CDMA (EVDO) versions do not use SIM cards, but use Electronic Serial Number (ESN) instead. The cost of using a cellular modem varies from country to country. Some carriers implement "flat rate" plans for unlimited data transfers. Some have caps (or maximum limits) on the amount of data that can be transferred per month. Other countries have "per Megabyte" or even "per Kilobyte" plans that charge a fixed rate per Megabyte or Kilobyte of data downloaded; this tends to add up quickly in today's content-filled world, which is why many people are pushing for flat data rates. See : flat rate. The faster data rates of the newest cellular modem technologies (UMTS,HSPA,EVDO,WiMax) are also considered to be "Broadband Cellular Modems" and compete with other Broadband modems below. DATA RATE TRANSFER The speed at which a modem can transfer information, usually given in bits per second (bps). 
The higher the data transfer rate, the faster the modem, but the more it costs. A faster modem can save you money by cutting down on your long-distance charges. Three common speeds for modems are 9,600 bps, 14,400 bps, and 28,800 bps. 32
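To see what the three common speeds mean in practice, the time to move a file can be estimated by dividing its size in bits by the data transfer rate. This is a rough sketch that ignores protocol overhead, compression and line noise; the function name `transfer_seconds` and the 1,000,000-byte example file are assumptions chosen for illustration.

```python
def transfer_seconds(size_bytes, rate_bps):
    """Ideal transfer time in seconds: total bits divided by bits per second."""
    return size_bytes * 8 / rate_bps


for rate in (9_600, 14_400, 28_800):
    seconds = transfer_seconds(1_000_000, rate)
    print(rate, "bps:", round(seconds), "seconds")
# 9600 bps: 833 seconds
# 14400 bps: 556 seconds
# 28800 bps: 278 seconds
```

Tripling the rate cuts the connection time to a third, which is where the savings on long-distance charges come from.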

- 33. Modems are distinguished primarily by the maximum data rate they support. Data rates can range from 75 bits per second up to 56000 and beyond. Data from the user (i.e. flowing from the local terminal or computer via the modem to the telephone line) is sometimes at a lower rate than the other direction, on the assumption that the user cannot type more than a few characters per second. Various data compression and error correction algorithms are required to support the highest speeds. Other optional features are auto-dial (auto-call) and auto-answer which allow the computer to initiate and accept calls without human intervention. Most modern modems support a number of different protocols, and two modems, when first connected, will automatically negotiate to find a common protocol (this process may be audible through the modem or computer's loudspeakers). Some modem protocols allow the two modems to renegotiate ("retrain") if the initial choice of data rate is too high and gives too many transmission errors. A modem may either be internal (connected to the computer's bus) or external ("stand-alone", connected to one of the computer's serial ports). The actual speed of transmission in characters per second depends not just on the modem-to-modem data rate, but also on the speed with which the processor can transfer data to and from the modem, the kind of compression used and whether the data is compressed by the processor or the modem, the amount of noise on the telephone line (which causes retransmissions), and the serial character format (typically 8N1: one start bit, eight data bits, no parity, one stop bit). WIRELESS TRANSMISSION AND BANDWIDTH The term "wireless" has become a generic and all-encompassing word used to describe communications in which electromagnetic waves or RF (rather than some form of wire) carry a signal over part or the entire communication path. Common examples of wireless equipment in use today include:

- 34. • Professional LMR (Land Mobile Radio) and SMR (Specialized Mobile Radio) typically used by business, industrial and Public Safety entities • Consumer Two Way Radio including FRS (Family Radio Service), GMRS (General Mobile Radio Service) and Citizens band ("CB") radios • The Amateur Radio Service (Ham radio) • Consumer and professional Marine VHF radios • Cellular telephones and pagers: provide connectivity for portable and mobile applications, both personal and business. • Global Positioning System (GPS): allows drivers of cars and trucks, captains of boats and ships, and pilots of aircraft to ascertain their location anywhere on earth. • Cordless computer peripherals: the cordless mouse is a common example; keyboards and printers can also be linked to a computer via wireless. • Cordless telephone sets: these are limited-range devices, not to be confused with cell phones. • Satellite television: allows viewers in almost any location to select from hundreds of channels. • Wireless gaming: new gaming consoles allow players to interact and play in the same game regardless of whether they are playing on different consoles. Players can chat, send text messages as well as record sound and send it to their friends. Controllers also use wireless technology. They do not have any cords but they can send the information from what is being pressed on the controller to the main console which then processes this information and makes it happen in the game. All of these steps are completed in milliseconds. Wireless networking (i.e. the various types of unlicensed 2.4 GHz WiFi devices) is used to meet many needs. Perhaps the most common use is to connect laptop users who travel from location to location. Another common use is for mobile networks that connect via satellite. A wireless transmission method is a logical choice to network a LAN segment that must frequently change locations. 
The following situations justify the use of wireless technology: • To span a distance beyond the capabilities of typical cabling,

- 35. • To avoid obstacles such as physical structures, EMI, or RFI, • To provide a backup communications link in case of normal network failure, • To link portable or temporary workstations, • To overcome situations where normal cabling is difficult or financially impractical, or • To remotely connect mobile users or networks. Wireless communication can be via: • radio frequency communication, • microwave communication, for example long-range line-of-sight via highly directional antennas, or short-range communication, or • infrared (IR) short-range communication, for example from remote controls or via IrDA. Applications may involve point-to-point communication, point-to-multipoint communication, broadcasting, cellular networks and other wireless networks. The term "wireless" should not be confused with the term "cordless", which is generally used to refer to powered electrical or electronic devices that are able to operate from a portable power source (e.g. a battery pack) without any cable or cord to the mains power supply limiting their mobility. Some cordless devices, such as cordless telephones, are also wireless in the sense that information is transferred from the cordless telephone to the telephone's base unit via some type of wireless communications link. This has caused some inconsistency in the usage of the term "cordless", for example in Digital Enhanced Cordless Telecommunications. In the last fifty years, the wireless communications industry has experienced drastic changes driven by many technological innovations. Early wireless work David E. Hughes, eight years before Hertz's experiments, induced electromagnetic waves in a signaling system. Hughes transmitted Morse code by an induction apparatus. In 1878, Hughes's induction transmission method utilized a "clockwork transmitter" to transmit signals. In 1885, T. A. Edison used a vibrator magnet for induction transmission.
In 1888, Edison deployed a system of signaling on the Lehigh Valley Railroad. In 1891,

- 36. Edison obtained the wireless patent for this method using inductance (U.S. Patent 465,971). The demonstration of the theory of electromagnetic waves by Heinrich Rudolf Hertz in 1888 was important. The existence of electromagnetic waves had been predicted from the research of James Clerk Maxwell and Michael Faraday. Hertz demonstrated that electromagnetic waves could be transmitted and made to travel through space in straight lines, and that they could be received by an experimental apparatus. Hertz did not follow up the experiments. The practical applications of wireless communication and remote control technology were implemented by Nikola Tesla. Bandwidth (Speed) A wireless network uses a transmitted signal rather than wire (CAT5e/CAT6). A few parameters affect wireless network communication that are not an issue on a wired network. The two most important variables are signal strength and signal stability. Signal strength depends mainly on the distance and on the number and nature of obstructions. Stability is affected by the presence of other signals in the air and by temporal changes in the environment. For example, computer movement and orientation, the movement of people, electrical appliances "kicking in and out", and other interference are constantly changing and affect the signal over time. The general result is that wireless bandwidth (speed) and latency become constantly changing variables. Wireless bandwidth (or "speed") depends on which standard is in use (802.11b, 802.11g, etc.) and on how much of the signal is available for processing. The weaker the signal, the lower the bandwidth. - VSAT Short for very small aperture terminal, an earthbound station used in satellite communications of data, voice and video signals, excluding broadcast television.
A VSAT consists of two parts, a transceiver that is placed outdoors in direct line of sight to the satellite and a device that is placed indoors to interface the transceiver with the end user's communications device, such as a PC. The transceiver receives or sends a signal to

- 37. a satellite transponder in the sky. The satellite sends signals to and receives signals from a ground station computer that acts as a hub for the system. Each end user is interconnected with the hub station via the satellite, forming a star topology. The hub controls the entire operation of the network. For one end user to communicate with another, each transmission has to first go to the hub station, which then retransmits it via the satellite to the other end user's VSAT. VSAT can handle up to 56 Kbps. - RADIO Direct broadcast satellite, WiFi, and mobile phones all use modems to communicate, as do most other wireless services today. Modern telecommunications and data networks also make extensive use of radio modems where long distance data links are required. Such systems are an important part of the PSTN, and are also in common use for high-speed computer network links to outlying areas where fibre is not economical. Even where a cable is installed, it is often possible to get better performance or make other parts of the system simpler by using radio frequencies and modulation techniques through a cable. Coaxial cable has a very large bandwidth; however, signal attenuation becomes a major problem at high data rates if a digital signal is used. By using a modem, a much larger amount of digital data can be transmitted through a single piece of wire. Digital cable television and cable Internet services use radio frequency modems to meet the increasing bandwidth needs of modern households. Using a modem also allows frequency-division multiple access to be used, making full-duplex digital communication with many users possible using a single wire. Wireless modems come in a variety of types, bandwidths, and speeds. Wireless modems are often referred to as transparent or smart. They transmit information that is modulated onto a carrier frequency, which allows many wireless communication links to work simultaneously on different frequencies.
Transparent modems operate in a manner similar to their phone line modem cousins. Typically, they are half duplex, meaning that they cannot send and receive data at the same time. Typically transparent modems are polled in a round robin manner to collect small amounts of data from scattered locations that do not have easy access to wired
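The idea of placing each link on its own carrier frequency can be illustrated with a small Python sketch. It is not taken from any modem standard: the function names, the band start, the channel width and the guard band are all hypothetical values chosen only to show how carriers can be spaced so that several links share a band without overlapping.

```python
def carrier_plan(band_start_hz, channel_width_hz, guard_hz, count):
    """Return centre frequencies (Hz) for `count` channels laid side by side.

    Each channel is `channel_width_hz` wide and is separated from the next
    by an unused guard band of `guard_hz` (all values are illustrative).
    """
    spacing = channel_width_hz + guard_hz
    first_centre = band_start_hz + channel_width_hz // 2
    return [first_centre + i * spacing for i in range(count)]


def channels_overlap(centre_a_hz, centre_b_hz, channel_width_hz):
    """Two equal-width channels overlap if their centres are closer than one width."""
    return abs(centre_a_hz - centre_b_hz) < channel_width_hz


if __name__ == "__main__":
    # Hypothetical example: four links, 200 kHz each, 50 kHz guard band.
    plan = carrier_plan(902_000_000, 200_000, 50_000, 4)
    for link, centre in enumerate(plan, start=1):
        print(f"link {link}: carrier at {centre / 1e6:.2f} MHz")
```

Because every carrier is at least one channel width away from its neighbours, the links in this sketch can transmit at the same time without interfering with each other, which is the point made in the text.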

- 38. infrastructure. Transparent modems are most commonly used by utility companies for data collection. Smart modems come with a media access controller inside which prevents random data from colliding and resends data that is not correctly received. Smart modems typically require more bandwidth than transparent modems, and typically achieve higher data rates. The IEEE 802.11 standard defines a short range modulation scheme that is used on a large scale throughout the world. OBSTACLES TO EFFECTIVE TRANSMISSION While a message source may be able to deliver a message through a transmission medium, there are many potential obstacles to the message successfully reaching the receiver the way the sender intends. The potential obstacles that may affect good communication include: • Poor Encoding – This occurs when the message source fails to create the right sensory stimuli to meet the objectives of the message. For instance, in person-to-person communication, phrasing words so poorly that what is communicated is not what is actually meant is the result of poor encoding. Poor encoding is also seen in advertisements that are difficult for the intended audience to understand, such as words or symbols that lack meaning or, worse, have a totally different meaning within certain cultural groups. This often occurs when marketers use the same advertising message across many different countries. Differences due to translation or cultural understanding can result in the message receiver having a different frame of reference for how to interpret words, symbols, sounds, etc. This may lead the message receiver to decode the meaning of the message in a different way than was intended by the message sender. • Poor Decoding – This refers to a message receiver’s error in processing the message so that the meaning given to the received message is not what the source intended.
This differs from poor encoding when it is clear, through comparative analysis with other receivers, that a particular receiver perceived a message differently from others and from what the message source intended. Clearly, as we noted above, if the receiver’s frame of reference is different (e.g., meaning of

- 39. words is different) then decoding problems can occur. More likely, when it comes to marketing promotions, decoding errors occur due to personal or psychological factors, such as not paying attention to a full television advertisement, driving too quickly past a billboard, or allowing one’s mind to wander while talking to a salesperson. • Medium Failure – Sometimes communication channels break down and end up sending out weak or faltering signals. Other times the wrong medium is used to communicate the message. For instance, trying to educate doctors about a new treatment for heart disease using television commercials that quickly flash highly detailed information is not going to be as effective as presenting this information in a print ad where doctors can take their time evaluating the information. • Communication Noise – Noise in communication occurs when an outside force in some way affects delivery of the message. The most obvious example is when loud sounds block the receiver’s ability to hear a message. Nearly any distraction to the sender or the receiver can lead to communication noise. In advertising, many customers are overwhelmed (i.e., distracted) by the large number of advertisements they encounter each day. Such advertising clutter (i.e., noise) makes it difficult for advertisers to get their message through to desired customers. STEPS REQUIRED TO CONNECT PC TO INTERNET General Guidelines Use a Firewall The most important step when setting up a new computer is to install a firewall BEFORE you connect it to the Internet. Whether this is a hardware router/firewall or a software firewall, it is important that you have immediate protection when you connect to the Internet. This is because the minute you connect your computer to the Internet there will be remote computers or worms scanning large blocks of IP addresses looking for computers with security holes.
When you connect your computer, if one of these scans finds you, it will be able to infect your computer because you do not yet have the latest security updates. You may be thinking: what are the chances of my computer getting scanned, with all the millions of computers active on the Internet? The truth is that your

- 40. chances are extremely high, as there are thousands of computers, if not more, scanning at any given time. The best solution is to have a hardware router/firewall installed. This is because you will be behind that device immediately on turning on your computer, and there will be no lapse of time between your connecting to the Internet and being secure. If a hardware based firewall is not available then you should use a software based firewall. Many of the newer operating systems contain a built-in firewall that you should immediately turn on. If your operating system does not contain a built-in firewall then you should download and install a free firewall, as there are many available. If you have a friend or another computer with a CD-ROM burner, download the firewall and burn it onto a CD so that you can install it before you even connect your computer to the Internet. Disable services that you do not immediately need Disable any non-essential services or applications that are running on your computer before you connect to the Internet. When an operating system is not patched to the latest security updates there are generally a few applications that have security holes in them. By disabling services that you do not immediately need or plan to use you minimize the risk of these security holes being used by a malicious user or piece of software. Download the latest security updates Now that you have a firewall and non-essential services disabled, it is time to connect your computer to the Internet and download all the available security updates for your operating system. By downloading these updates you will ensure that your computer is up to date with all the latest available security patches released for your particular operating system, making it much more difficult for you to get infected with a piece of malware.
Use Antivirus Software Many of the programs that will automatically attempt to infect your computer are worms, trojans, and viruses. By using good, up to date antivirus software you will be able to catch these programs before they can do much harm.
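The advice above to disable services you do not need can be checked with a short script. The Python sketch below tries to open a TCP connection to a few ports on the local machine and reports which ones accept it, which suggests that a service is listening there. The port list and the function names are illustrative assumptions, not part of the handout, and a port that shows as closed here may still be reachable in other ways, so treat the output only as a rough guide.

```python
import socket

# Illustrative selection of ports often associated with network services.
COMMON_PORTS = {
    21: "FTP",
    23: "Telnet",
    25: "SMTP",
    80: "HTTP",
    135: "RPC",
    139: "NetBIOS",
    445: "SMB",
}


def is_port_open(host, port, timeout=0.5):
    """Return True if a TCP connection to (host, port) is accepted."""
    with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as sock:
        sock.settimeout(timeout)
        # connect_ex returns 0 on success instead of raising an exception.
        return sock.connect_ex((host, port)) == 0


def open_ports(host, ports, timeout=0.5):
    """Return the subset of `ports` that accept a connection on `host`."""
    return [port for port in ports if is_port_open(host, port, timeout)]


if __name__ == "__main__":
    # Only the local machine is checked; scan only computers you own.
    found = open_ports("127.0.0.1", COMMON_PORTS)
    if not found:
        print("None of the listed ports accepted a connection.")
    for port in found:
        print(f"Port {port} ({COMMON_PORTS[port]}) is accepting connections.")
```

Any port reported as open corresponds to a running service; if that service is not one you need, it is a candidate for disabling before the computer goes online.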

- 41. Choose one of the many free antivirus programs and install it before you connect to the Internet. Download it from another computer and burn it onto a CD so that it is installed before you connect. Specific Steps for Windows 2000 Windows 2000 does not contain a full featured firewall, but does contain a way for you to get limited security until you update the computer and install a true firewall. Windows 2000 comes with a feature called TCP/IP filtering that can be used as a temporary measure. To set this up follow these steps: 1. Click on Start, then Settings and then Control Panel to enter the control panel. 2. Double-click on the Network and Dial-up Connections control panel icon. 3. Right-click on the connection icon that is currently being used for Internet access and click on Properties. The connection icon is usually the one labeled Local Area Connection. 4. Double-click on Internet Protocol (TCP/IP) and then click on the Advanced button. 5. Select the Options tab. 6. Double-click on TCP/IP Filtering. 7. Put a checkmark in the box labeled Enable TCP/IP Filtering (All Adapters) and change all the radio button options to Permit Only. 8. Press the OK button. 9. If it asks to reboot, please do so. After it reboots, your computer will be protected from the majority of attacks from the Internet. Now immediately go to http://www.windowsupdate.com/ and download and install all critical updates and service packs available for your operating system. Keep going back to this page until all the updates have been installed. Once that is completed, install antivirus software and a free firewall, and disable the filtering set up previously. Specific Steps for Microsoft Windows XP